TL;DR

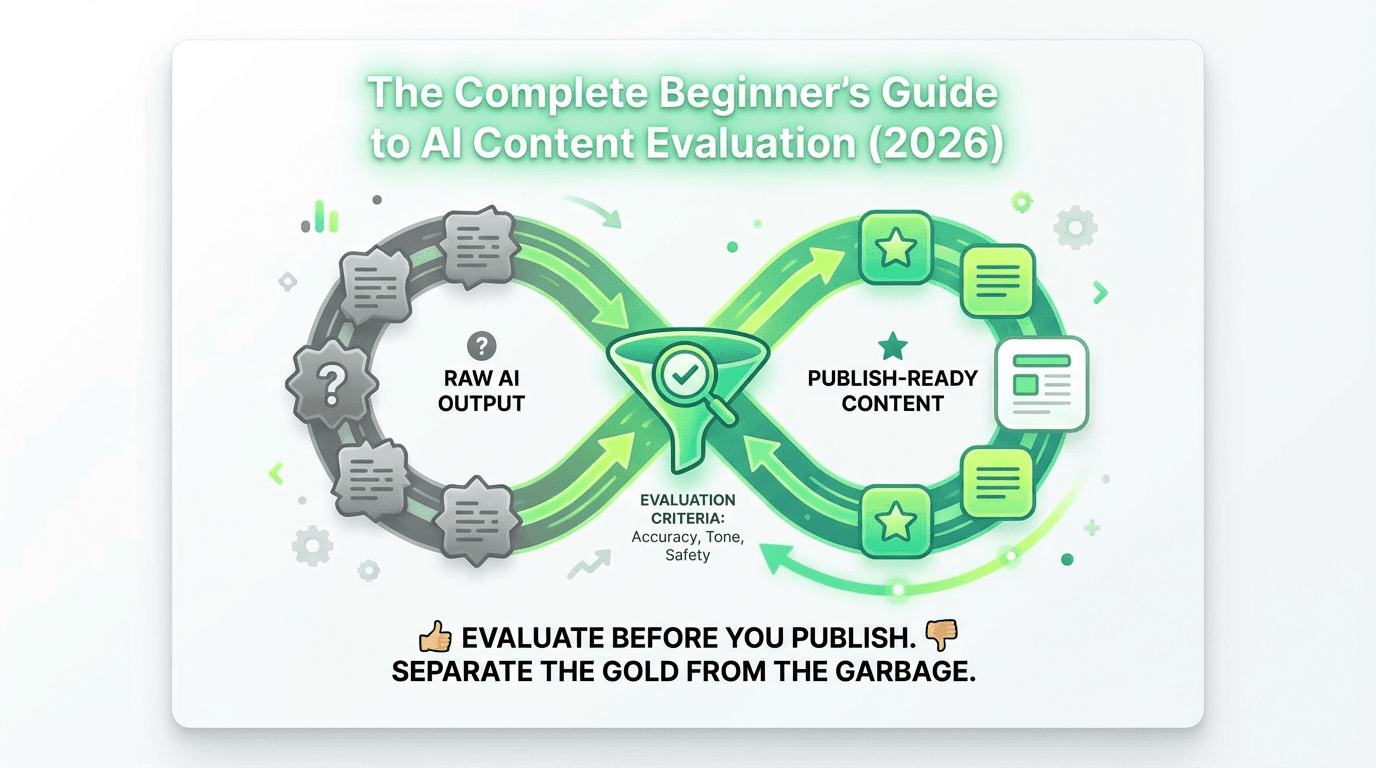

AI content evaluation is quality control for AI-generated drafts.

Use a small rubric. Prioritize accuracy, relevance, clarity, safety, and brand voice.

Choose a method based on risk and volume.

Run a simple loop: define criteria, create a golden set, score consistently, then improve prompts and workflow.

Sanity-check with TRAAP and watch for hallucinations, outdated info, bias, off-brand tone, and generic writing.

By 2026, over 70% of businesses are using AI tools to generate everything from blog posts and social media captions to product descriptions and customer emails. But here's the problem: not all AI-generated content is created equal.

You've probably experienced it yourself. You feed a prompt into ChatGPT, Claude, or your favorite AI writing tool, and out comes... something. Sometimes it's brilliant. Other times it's generic, factually questionable, or completely off-brand. The difference between content that drives results and content that damages your credibility comes down to one critical skill: evaluation.

In this comprehensive guide, you'll learn exactly how to evaluate AI-generated content like a pro—even if you've never done it before. We'll cover evaluation methods, practical frameworks, quality criteria, and actionable best practices that you can implement today. Whether you're a content creator, marketer, educator, or business owner, this guide will give you the confidence to harness AI's power while maintaining the quality your audience expects.

Evals are not just for engineering workflows. With LLMs, it is equally important, if not more important, to have evals at every gate and every layer so LLM outputs are handled with rigor and diligence. Evals are usually discussed in an engineering context, but we are going to adapt and apply many of the core principles to content marketing.

What is AI Content Evaluation?

AI content evaluation is the systematic process of assessing the quality, accuracy, and appropriateness of content generated by artificial intelligence systems. Think of it as quality control for your outputs—a way to separate the gold from the garbage before it reaches your audience.

At its core, evaluation involves checking generated content against specific criteria to determine whether it meets your standards. These criteria might include factual accuracy, brand voice consistency, readability, originality, and relevance to your intended purpose. The assessment process requires evaluating multiple aspects across different tasks and contexts. Increasingly, organizations are turning to specialized AI tools for content marketing to streamline and standardize this evaluation process.

Why Evaluation is Critical in 2026

The stakes have never been higher. Search engines like Google have become increasingly sophisticated at detecting low-quality generated content. Users are more discerning than ever, quickly abandoning content that feels generic or unhelpful. And the legal landscape around AI-generated material—from copyright concerns to disclosure requirements—continues to evolve.

Here's what makes evaluating AI outputs non-negotiable in 2026:

Quality control at scale: AI can produce content faster than any human team, but speed without quality is a recipe for disaster. Proper evaluation ensures you're publishing material worth reading and helps maintain performance standards across all your content.

Brand protection: Every piece of content you publish represents your brand. Generated content that's off-voice, inaccurate, or inappropriate can damage relationships you've spent years building.

Competitive advantage: While your competitors are publishing raw AI outputs, evaluated and refined content helps you stand out with higher quality metrics and better user experience.

Key Terms You Need to Know

Before we dive deeper, let's establish a common vocabulary:

LLM (Large Language Model): The AI models that generate text, like GPT-4, Claude, or Gemini. These language models are trained on vast amounts of training data and can produce human-like writing. Understanding how these systems work is fundamental to evaluating their output effectively.

Prompts and outputs: The prompt is your input (what you ask the AI to do), and the output is what the model generates in response. The quality of your prompts directly impacts the results you receive.

Evaluation criteria: The specific standards you use to judge content quality—things like accuracy, tone, structure, and relevance. These form the basis of any robust assessment framework.

Ground truth: Reference examples or verified correct answers that you use as a benchmark when evaluating AI outputs. This reference set provides the standard against which you measure performance.

Rubric: A structured scoring guide that defines your evaluation criteria and how to assess content against them. Rubrics help ensure consistency across different evaluators and evaluation tasks.

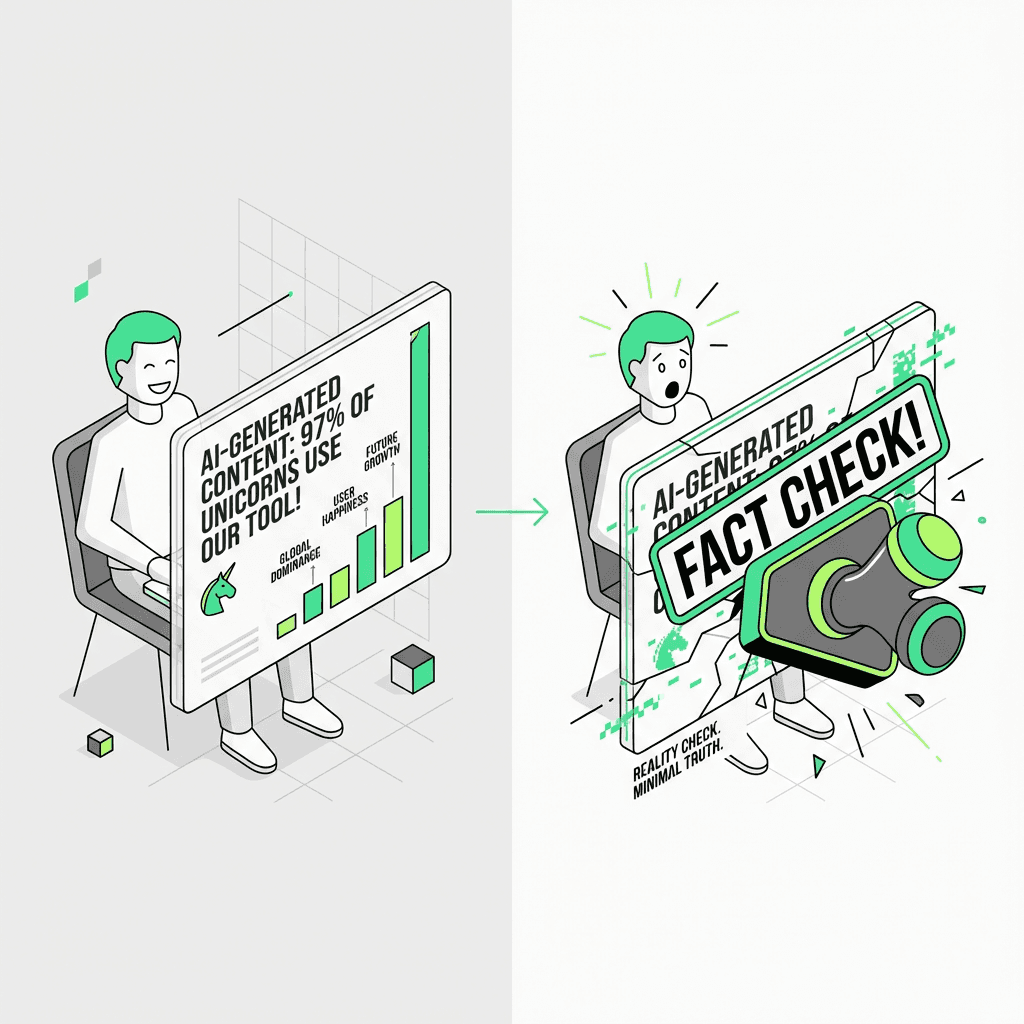

Hallucinations: When AI confidently presents false information as fact—one of the most dangerous quality issues in generated content. These errors occur because models predict text patterns rather than retrieving verified data.

Why AI Content Evaluation Matters

Let's get real about what's at stake when you publish unevaluated generated content.

The Risks of Unevaluated AI Content

Hallucinations and factual errors: AI models don't actually "know" things—they predict what text should come next based on patterns in their training data. This means they can confidently state complete falsehoods. Imagine publishing a blog post with incorrect statistics, made-up case studies, or false claims about your product. The damage to your credibility could be irreversible. Test results consistently show that even advanced LLMs produce factual errors without proper oversight.

Bias and offensive content: LLMs absorb bias present in their training datasets. Without proper evaluation, you might unknowingly publish material that's discriminatory, insensitive, or offensive to portions of your audience. Machine learning systems reflect the patterns in their training data, including problematic ones.

Brand voice inconsistencies: AI tends toward a neutral, somewhat formal tone. If your brand voice is playful, authoritative, or distinctly personal, raw outputs will feel jarringly off-brand. Human evaluators are essential for catching these nuances.

SEO and user experience issues: Google's algorithms are designed to reward helpful, original content that demonstrates expertise. Generic generated content that rehashes common information without adding unique value won't rank well—and even if it did, users would quickly bounce. Performance metrics clearly show the difference between optimized and unoptimized content.

The Business Impact

Poor content quality has real consequences:

Lost trust: 76% of consumers say they'll stop engaging with a brand after encountering inaccurate or misleading information

Wasted resources: Creating content that doesn't perform wastes the time and money you invested in AI tools and systems

Opportunity cost: Every piece of low-quality content is a missed opportunity to educate, engage, or convert your audience

Legal exposure: Depending on your industry, inaccurate generated material could create liability issues, especially in regulated sectors

The good news? All of these risks are manageable through proper evaluation methods and quality control processes.

Types of AI Content Evaluation Methods

There's no one-size-fits-all approach to evaluate generated content. The right method depends on your resources, scale, and quality requirements. Let's explore your options and examine examples of each approach.

1. Manual Evaluation (Human Review)

Manual evaluation means having human evaluators read, assess, and score AI-generated content based on defined criteria. This approach leverages human judgment to catch nuanced issues that automated systems might miss.

When to use it: Manual evaluation is ideal for high-stakes content like thought leadership articles, sales pages, legal documents, or any material where errors could have serious consequences. It's also essential when you're first establishing your process and need to understand common issues across different content types.

Pros:

Highest accuracy for nuanced quality judgments and context-specific assessment

Catches context-dependent issues that automated evaluators might miss

Allows for subjective assessments like brand voice and emotional resonance

Provides qualitative feedback for improvement across multiple tasks

Human evaluators can identify subtle bias and appropriateness issues

Cons:

Time-intensive and doesn't scale well beyond a certain volume

Subject to individual reviewer bias without proper calibration and training

More expensive than automated methods over time

Can create bottlenecks in high-volume workflows

Best for: Small content volumes (1-20 pieces per week), premium content, initial quality baseline establishment, and industries with strict compliance requirements.

2. Automated Evaluation (LLM-as-a-Judge)

Automated evaluation uses AI systems to evaluate other AI outputs. This "LLM-as-a-judge" approach involves creating prompts that instruct a language model to assess content against your criteria and provide scores or feedback based on specific performance metrics.

When to use it: Automated evaluation shines when you need to assess large volumes of generated content quickly and consistently. It's particularly valuable for ongoing quality monitoring and catching obvious issues before human review. This method excels at specific, well-defined tasks where criteria are clear.

Pros:

Incredibly fast—can evaluate hundreds of pieces in minutes

Highly scalable without additional human resources or training time

Consistent application of criteria (no reviewer fatigue)

Cost-effective for high-volume content operations

Can process and analyze data patterns across large datasets

Cons:

May miss subtle nuances or context-dependent issues

Requires upfront setup and prompt engineering

Can inherit bias from the evaluating model

Less effective for highly subjective criteria like creativity

Performance depends on the quality of your evaluation framework

Best for: Large-scale content operations (50+ pieces per week), initial quality filtering before human review, routine quality monitoring, and standardized content types with clear evaluation criteria and measurable metrics.

3. Hybrid Evaluation

The hybrid approach combines human judgment with automated evaluation to get the best of both worlds. This method uses machine learning for initial screening while reserving human expertise for complex assessment tasks.

When to use it: For most organizations, hybrid evaluation offers the optimal balance. Use automated systems as a first pass to catch obvious issues and flag potentially problematic content based on key metrics, then route flagged items to human evaluators for final assessment. This approach maximizes both efficiency and accuracy.

How it works: Set up automated evaluation to score all generated content using specific benchmarks. Pieces that score above an upper threshold (e.g., 85/100) can be published with minimal human oversight. Content scoring below a lower threshold gets full human review. Content in the middle range might receive spot-checking. This tiered process ensures resources are allocated where they're most needed.

Best for: Medium to large content operations (20-100+ pieces per week) where you need both scale and quality assurance across different content types and tasks.
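The tiered routing described above can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the 85 cutoff comes from the example in the text, while the lower cutoff of 60, the tier names, and the function name `route_content` are assumptions you would replace with your own calibrated values.

```python
# Hypothetical cutoffs: 85 is the example from the text, 60 is an assumed lower bound.
PUBLISH_THRESHOLD = 85
REVIEW_THRESHOLD = 60


def route_content(score: float,
                  publish_at: float = PUBLISH_THRESHOLD,
                  review_below: float = REVIEW_THRESHOLD) -> str:
    """Map an automated 0-100 quality score to a review tier."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    if score >= publish_at:
        return "publish"        # minimal human oversight
    if score < review_below:
        return "human_review"   # full review by a human evaluator
    return "spot_check"         # middle range: sample some pieces for review


if __name__ == "__main__":
    for score in (92, 71, 40):
        print(score, route_content(score))
```

Keeping the cutoffs as parameters makes it easy to tighten or loosen them after you compare automated scores with human judgments.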

4. User-Based Evaluation

User-based evaluation involves testing generated content with your actual audience and measuring their responses. This real-world assessment provides performance data that internal evaluations can't capture.

Methods include:

A/B testing AI-generated content against human-written content to compare results

Tracking engagement metrics (time on page, bounce rate, conversions) as performance indicators

Collecting direct user feedback through surveys or ratings

Analyzing customer support inquiries related to generated content

Monitoring social media responses and sentiment scores
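When you A/B test AI-generated content against human-written content, a small gap in conversion rate can be noise. One common way to check is a two-proportion z-test, sketched below with only the standard library. The function name and the sample numbers are illustrative assumptions, and the test itself is a standard statistical technique rather than something this guide prescribes.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no detectable difference
    z = (rate_a - rate_b) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the normal curve
    return z, p_value


if __name__ == "__main__":
    # Made-up numbers: variant A converts 120 of 1000 visitors, variant B 80 of 1000.
    z, p = two_proportion_z_test(120, 1000, 80, 1000)
    print(f"z={z:.2f}, p={p:.4f}")
```

A small p-value suggests the difference is unlikely to be chance alone, but it says nothing about why one version performed better, so pair it with the qualitative feedback listed above.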

When to use it: User-based evaluation is essential for understanding real-world content performance. It's your ultimate quality check—if users engage with and value the content, it's working regardless of what your internal rubric says. These results provide the ground truth for refining your evaluation framework.

Best for: Validating your evaluation criteria, optimizing content strategy based on actual performance data, and making data-driven decisions about AI content use across different contexts and audience segments.

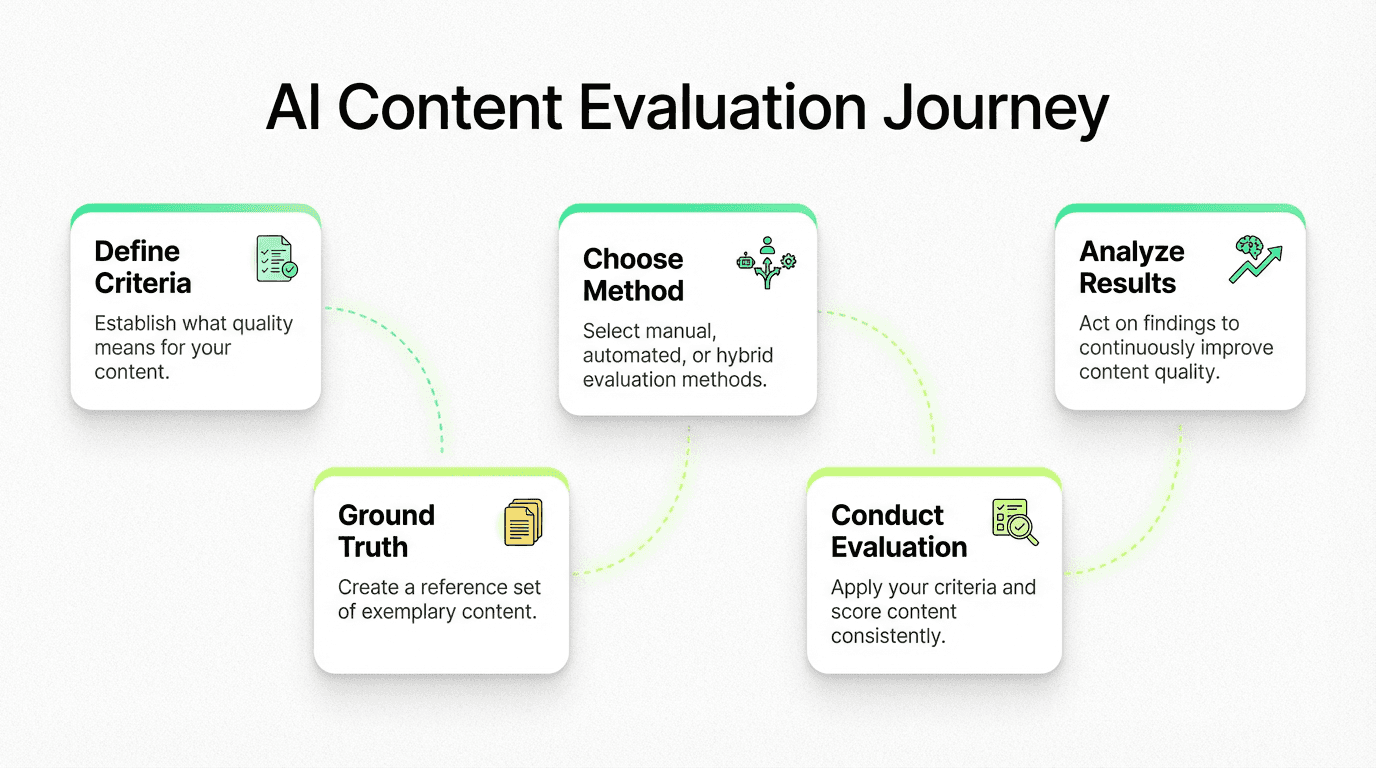

The 5-Step AI Content Evaluation Framework

Ready to start evaluating? Follow this practical framework to build a systematic evaluation process that scales with your needs.

Step 1: Define Your Evaluation Criteria

You can't evaluate quality without first defining what "quality" means for your specific use case and tasks. Start by identifying your core criteria—the non-negotiables that every piece of generated content must meet. These criteria serve as your benchmark for all assessments.

Core criteria for most content:

Accuracy: Is the information factually correct and up-to-date? Does it align with reference data and ground truth examples?

Relevance: Does it address the intended topic and audience needs? Is the context appropriate for the task?

Clarity: Is it easy to understand and well-structured? Can readers quickly extract key information?

Grammar and mechanics: Is it free from language errors and technical mistakes?

Safety: Is it free from offensive, biased, or harmful content? Have you tested for potential issues?

Feature-specific criteria vary based on your content type, goals, and the specific tasks you're evaluating:

Brand voice: Does it match your organization's tone and personality across different contexts?

SEO optimization: Does it target appropriate keywords naturally while maintaining quality metrics?

Originality: Does it provide unique insights rather than generic information? Does it generate new value?

Completeness: Does it thoroughly cover the topic with sufficient examples and supporting data?

Call-to-action effectiveness: Does it guide the reader toward the desired next step?

Formatting: Is the structure appropriate for the content type and platform?

Pro Tip: Start with 5-7 core criteria. You can always add more as your process matures. Overcomplicating your criteria at the outset leads to evaluator fatigue and inconsistent scoring.

Step 2: Establish Ground Truth

Ground truth is your reference standard—the "correct" answers or exemplary content that you measure AI outputs against. Without it, evaluation becomes subjective guesswork.

How to create reference examples:

Gather your best-performing content. Pull 10-20 pieces of existing content that represent your quality standard. These become your gold-standard benchmarks.

Annotate what makes them excellent. For each piece, note why it works—tone, structure, depth, accuracy, engagement metrics.

Create "golden datasets." Build a collection of prompt-output pairs where the output represents ideal quality. Include both positive examples (what great looks like) and negative examples (common failures to avoid).

Document your standards. Write clear descriptions of what each quality level looks like for each criterion. This reference set ensures evaluators have a shared understanding.

Your golden dataset doesn't need to be massive. Even 10-15 well-annotated examples per content type give evaluators a strong foundation for consistent assessment.
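If you keep your golden dataset in code or export it from a spreadsheet, one possible shape is sketched below. The field names, the `GoldenExample` class, and the default minimum of 10 examples per content type (taken from the low end of the guidance above) are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class GoldenExample:
    """One annotated prompt-output pair in a golden dataset."""
    content_type: str            # e.g. "blog_post"
    prompt: str
    output: str
    is_positive: bool            # True: what great looks like; False: a failure to avoid
    notes: str = ""              # why the example works or fails
    human_scores: dict = field(default_factory=dict)  # criterion name -> score


def thin_content_types(examples: list, minimum: int = 10) -> dict:
    """Return content types with fewer than `minimum` examples, with their counts."""
    counts = {}
    for example in examples:
        counts[example.content_type] = counts.get(example.content_type, 0) + 1
    return {ctype: n for ctype, n in counts.items() if n < minimum}
```

Calling `thin_content_types(examples)` shows which content types still need more annotated examples before evaluators can rely on them.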

Step 3: Choose Your Evaluation Method

Use this decision framework to select the right approach for your situation:

Choose Manual Evaluation if:

You produce fewer than 20 pieces of content per week

Your content is high-stakes (legal, medical, financial)

You're just starting your evaluation process

Budget allows for dedicated reviewer time

Choose Automated Evaluation if:

You produce 50+ pieces per week

Your criteria are well-defined and objective

You need real-time quality monitoring

You have technical resources for setup

Choose Hybrid Evaluation if:

You produce 20-100+ pieces per week

You need both scale and nuance

Your content spans multiple types and risk levels

You want to optimize cost while maintaining quality

Budget considerations: Manual evaluation costs roughly $15-50 per piece depending on complexity and reviewer expertise. Automated evaluation requires upfront setup (typically 10-20 hours) but drops to pennies per piece at scale. Hybrid approaches optimize spend by reserving human attention for content that needs it most.
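To see where automation starts paying for itself, you can run a quick break-even calculation. The sketch below uses the ranges quoted above for setup hours and manual cost per piece. The hourly rate of $75 and the automated cost of $0.05 per piece are assumptions, not figures from this guide.

```python
import math


def break_even_pieces(setup_hours: float, hourly_rate: float,
                      manual_cost: float, automated_cost: float) -> int:
    """Number of pieces needed before the automation setup cost is recovered."""
    saving_per_piece = manual_cost - automated_cost
    if saving_per_piece <= 0:
        raise ValueError("automation must be cheaper per piece than manual review")
    return math.ceil(setup_hours * hourly_rate / saving_per_piece)


if __name__ == "__main__":
    # Assumed inputs: $75/hour for setup work and $0.05 per automated check.
    # The 20 setup hours and $15 per manual review come from the ranges above.
    print(break_even_pieces(20, 75, 15, 0.05))
```

Plug in your own rates. The higher your manual cost per piece, the sooner automation breaks even.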

Automated evaluation is key for optimizing velocity. With competition increasing every day, and uncertainty around how search engines and AI platforms score content, the smartest marketers and AI practitioners are turning to AI evals and using LLMs as judges.

Step 4: Conduct the Evaluation

For manual evaluation, follow this process:

Prepare the content. Remove any indicators of whether content is AI-generated or human-written to reduce bias in assessment.

Apply your rubric. Score each piece against every criterion using your defined scale.

Document findings. Note specific issues, not just scores. "Paragraph 3 contains an unverified statistic" is more actionable than "Accuracy: 3/5."

Review edge cases. Flag content that's difficult to score for team discussion and calibration.

For automated evaluation setup:

Craft your evaluation prompt. Define your criteria clearly in the prompt. Include examples of good and poor content so the evaluating model understands your standards.

Test with your golden dataset. Run your automated evaluator against content you've already manually scored. Compare results to identify gaps.

Calibrate thresholds. Determine score cutoffs for "publish," "needs review," and "reject" based on correlation with human judgments.

Monitor performance over time. Regularly spot-check automated scores against human evaluation to catch drift.
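The first setup step, crafting the evaluation prompt, might look like the sketch below. It builds a judge prompt, then validates the reply before you trust the scores. Everything here is an assumption for illustration: the criterion names, the JSON reply format, and `call_model`, which stands in for whichever LLM client you use and is not a real library function.

```python
import json
from typing import Callable, Sequence

# Criterion names are illustrative; swap in the ones from your own rubric.
CRITERIA = ("accuracy", "relevance", "clarity", "safety", "brand_voice")


def build_judge_prompt(content: str, examples: str = "",
                       criteria: Sequence[str] = CRITERIA) -> str:
    """Assemble an evaluation prompt that asks for 1-5 scores as JSON."""
    criteria_lines = "\n".join(f"- {name}" for name in criteria)
    parts = [
        "You are reviewing a draft before publication.",
        "Score it from 1 (poor) to 5 (excellent) on each criterion:",
        criteria_lines,
    ]
    if examples:
        # Scored good and poor samples show the judge what your standards are.
        parts.append("Reference examples:\n" + examples)
    parts.append(
        "Reply with only a JSON object that maps each criterion to an integer "
        'score and includes an "issues" list of short strings.'
    )
    parts.append("DRAFT:\n" + content)
    return "\n\n".join(parts)


def parse_judge_reply(reply: str, criteria: Sequence[str] = CRITERIA) -> dict:
    """Validate the judge's reply; raise ValueError if it is unusable."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError as exc:
        raise ValueError("judge reply is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValueError("judge reply must be a JSON object")
    scores = {}
    for name in criteria:
        value = data.get(name)
        if type(value) is not int or not 1 <= value <= 5:
            raise ValueError(f"missing or out-of-range score for {name!r}")
        scores[name] = value
    return {"scores": scores, "issues": list(data.get("issues", []))}


def judge(content: str, call_model: Callable[[str], str], examples: str = "") -> dict:
    """Score one draft. call_model wraps whichever LLM client you use."""
    return parse_judge_reply(call_model(build_judge_prompt(content, examples)))
```

Passing the model call in as a function keeps the sketch independent of any vendor, and it lets you run the evaluator against your golden dataset with a stub before spending on API calls.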

Calibration and consistency checks:

Have multiple reviewers score the same content independently, then compare results

Calculate inter-rater reliability (aim for 80%+ agreement)

Hold regular calibration sessions to discuss edge cases and align standards

Update your rubric and guidelines based on recurring disagreements
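To put a number on the consistency checks above, you can compute simple percent agreement between two reviewers. The sketch below also computes Cohen's kappa, a standard statistic that corrects for agreement you would expect by chance. Kappa is an addition here, not something this guide requires, and the function names and sample scores are illustrative.

```python
from collections import Counter


def percent_agreement(rater_a: list, rater_b: list) -> float:
    """Share of items on which two reviewers gave the same score."""
    if not rater_a or len(rater_a) != len(rater_b):
        raise ValueError("both reviewers must score the same non-empty set of items")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Agreement between two reviewers, corrected for chance agreement."""
    observed = percent_agreement(rater_a, rater_b)
    n = len(rater_a)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both reviewers pick the same score independently.
    expected = sum(counts_a[score] * counts_b[score] for score in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both reviewers used a single identical score throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    reviewer_1 = [5, 4, 3, 4, 2]   # made-up scores for five pieces
    reviewer_2 = [5, 4, 3, 3, 2]
    print(percent_agreement(reviewer_1, reviewer_2))
    print(round(cohens_kappa(reviewer_1, reviewer_2), 3))
```

Percent agreement maps directly to the 80%+ target. Kappa is stricter and is worth a look when most pieces receive the same score, because raw agreement can look high by accident in that case.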

Step 5: Analyze and Act on Results

Evaluation data is only valuable if you act on it.

Interpreting evaluation scores:

Track average scores over time to identify trends in AI output quality

Compare scores across content types, topics, and AI models

Look for criteria where scores are consistently low—these indicate systematic issues
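Finding criteria with consistently low scores is a small aggregation job. The sketch below assumes each evaluation is a dictionary mapping criterion name to a 1-5 score. The function names and the floor of 3.0 are illustrative choices, not thresholds from this guide.

```python
from statistics import mean


def criterion_averages(evaluations: list) -> dict:
    """Average score per criterion across many evaluated pieces."""
    collected = {}
    for scores in evaluations:
        for criterion, score in scores.items():
            collected.setdefault(criterion, []).append(score)
    return {criterion: mean(values) for criterion, values in collected.items()}


def weak_criteria(evaluations: list, floor: float = 3.0) -> list:
    """Criteria whose average falls below `floor`, lowest average first."""
    averages = criterion_averages(evaluations)
    below = [criterion for criterion, avg in averages.items() if avg < floor]
    return sorted(below, key=averages.get)


if __name__ == "__main__":
    # Made-up scores for two blog posts.
    evaluations = [
        {"accuracy": 4, "originality": 2, "brand_voice": 3},
        {"accuracy": 3, "originality": 1, "brand_voice": 2},
    ]
    print(criterion_averages(evaluations))
    print(weak_criteria(evaluations))
```

Run the same aggregation per content type or per AI model to make the comparisons described above.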

Identifying patterns and issues:

Do accuracy scores drop on technical topics? Your prompts may need more context.

Is brand voice consistently weak? Consider fine-tuning or providing style examples in prompts.

Are certain content types scoring higher than others? Double down on what works.

Making improvements:

Refine prompts based on common failure patterns

Update your evaluation criteria as your understanding of AI outputs matures

Share findings with your content team to improve the overall workflow

Document what works so you can replicate success across different tasks

The TRAAP Test for AI Content Evaluation

The TRAAP framework—originally developed for evaluating information sources—is remarkably effective for assessing AI-generated content. Here's how each element applies:

Timeliness: Is the information current? AI models have training data cutoffs, meaning they may present outdated statistics, reference defunct products, or miss recent developments. Always check dates, data points, and references against current sources.

Relevance: Does the content match your intended purpose and audience? AI outputs can drift off-topic or address a slightly different angle than what you need. Verify that every section serves your reader's actual questions and needs.

Authority: Can you verify the sources and claims? AI-generated content often cites non-existent studies, fabricates expert quotes, or attributes claims to the wrong sources. Every factual claim needs verification against authoritative references.

Accuracy: Is the information correct? Beyond outright hallucinations, watch for subtle inaccuracies—oversimplifications, misleading correlations, or technically correct but contextually wrong statements.

Purpose: What's the intent behind the content? Ensure the AI output aligns with your communication goals. Content meant to educate should educate. Content meant to persuade should persuade. AI sometimes defaults to a generic informational tone regardless of the intended purpose.

Applying TRAAP to AI-Generated Content

Practical example: You ask an AI to write a blog post about email marketing trends for 2026.

Timeliness check: Does it reference 2026 data, or is it rehashing 2023 statistics? Are the tools and platforms mentioned still active?

Relevance check: Does it address trends your specific audience cares about, or generic trends that apply to everyone?

Authority check: Are the statistics attributed to real reports? Do the "experts" quoted actually exist?

Accuracy check: Are the claimed open rates, conversion rates, and benchmarks in line with verified industry data?

Purpose check: Does it move readers toward your intended action (e.g., trying your email platform), or does it read like a Wikipedia article?

Tips for verification:

Cross-reference every statistic with the original source

Search for quoted experts to confirm they exist and actually said what's attributed to them

Check competitor content on the same topic for factual consistency

Use multiple AI models to cross-validate factual claims

When in doubt, consult a subject matter expert

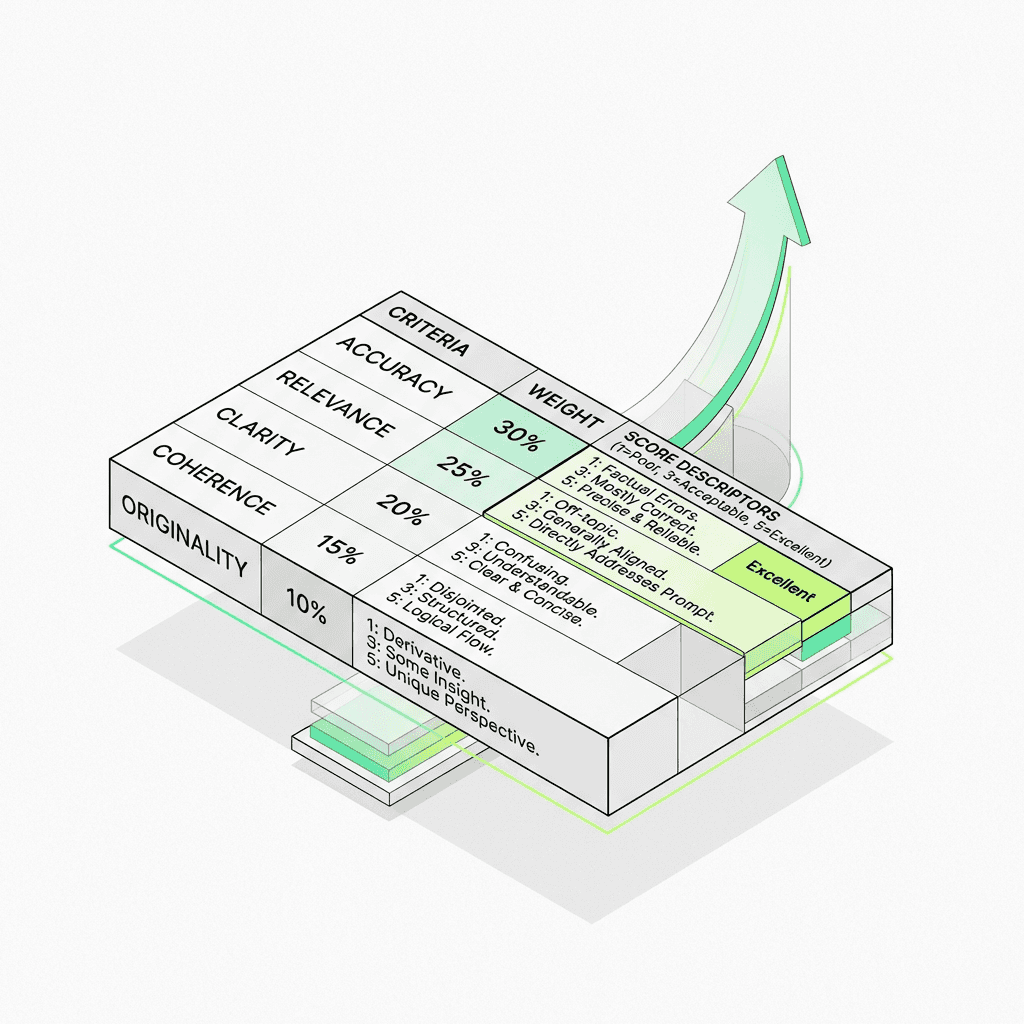

Creating an AI Content Evaluation Rubric

A well-designed rubric transforms evaluation from a subjective opinion into a repeatable, consistent process.

Essential Rubric Components

Core quality criteria form the backbone of your rubric. These typically include:

Factual accuracy (verified claims, correct data)

Relevance to topic and audience

Clarity and readability

Grammar and mechanics

Completeness of coverage

Originality and unique value

Scoring systems vary based on your needs:

Binary (Pass/Fail): Simple and fast. Best for non-negotiable criteria like factual accuracy or brand safety.

Scale (1-5 or 1-10): Provides granularity. Best for subjective criteria like tone and creativity.

Weighted scoring: Assigns different importance levels to different criteria. A factual error might carry 3x the weight of a minor formatting issue.

Clear guidelines for evaluators ensure consistency:

Define what each score level looks like with concrete examples

Specify what constitutes a "deal-breaker" that triggers automatic rejection

Include instructions for handling edge cases or ambiguous content

Sample Rubric Templates

Blog content rubric:

| Criterion | Weight | 1 (Poor) | 3 (Acceptable) | 5 (Excellent) |
|---|---|---|---|---|
| Accuracy | 30% | Multiple factual errors | Minor inaccuracies, easily corrected | All claims verified and sourced |
| Relevance | 20% | Off-topic or misses audience needs | Covers topic but lacks depth | Directly addresses reader questions |
| Brand Voice | 15% | Completely off-brand tone | Mostly on-brand with minor gaps | Perfectly matches brand guidelines |
| Originality | 15% | Generic, no unique value | Some original insights included | Fresh perspective with unique data |
| SEO | 10% | No keyword integration | Keywords present but forced | Natural keyword usage throughout |
| Readability | 10% | Dense, hard to follow | Readable with some improvements needed | Clear, scannable, well-structured |

Adapt this template for marketing copy (emphasize persuasion, CTA clarity, and emotional resonance), educational content (prioritize accuracy, progression, and comprehension), or technical documentation (focus on precision, completeness, and usability).
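To turn the blog rubric into a single number, multiply each 1-5 score by its weight and add the results. The sketch below uses the weights from the blog content rubric above. The dictionary keys and the function name are illustrative, and the sample scores are made up.

```python
# Weights (in percent) from the blog content rubric above.
BLOG_WEIGHTS = {
    "accuracy": 30,
    "relevance": 20,
    "brand_voice": 15,
    "originality": 15,
    "seo": 10,
    "readability": 10,
}


def weighted_score(scores: dict, weights: dict = BLOG_WEIGHTS) -> float:
    """Combine per-criterion 1-5 scores into one weighted 1-5 score."""
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the criteria in the rubric")
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100 percent")
    return sum(weights[name] * scores[name] for name in weights) / 100


if __name__ == "__main__":
    draft = {"accuracy": 4, "relevance": 5, "brand_voice": 3,
             "originality": 2, "seo": 4, "readability": 5}
    print(weighted_score(draft))
```

A weighted total can hide a serious problem in one criterion, so it works best alongside pass/fail checks for non-negotiable criteria such as factual accuracy.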

Best Practices for Rubric Design

Keep it simple. A rubric with 20 criteria won't get used consistently. Aim for 5-7 core criteria.

One concept per criterion. "Accuracy and readability" should be two separate criteria—they require different assessments.

Include examples. Abstract descriptions lead to inconsistent scoring. Show evaluators what a "3" versus a "5" actually looks like.

Calibrate with your team. Have all evaluators score the same 5 pieces independently, then compare results and discuss differences until you reach alignment.

Iterate regularly. Update your rubric quarterly based on new patterns, changing standards, and evaluator feedback.

Common AI Content Quality Issues

Knowing what to look for makes evaluation faster and more effective. Here are the most frequent issues and how to catch them.

Hallucinations and false information: AI confidently invents statistics, studies, quotes, and even entire organizations. This is the single most dangerous quality issue because it erodes trust.

Outdated information: Models trained on older data present past information as current. Watch for outdated statistics, deprecated tools, former company names, and old pricing.

Bias and inappropriate content: Subtle stereotyping, cultural insensitivity, or one-sided perspectives can slip through. This is especially common in content about people, cultures, or controversial topics.

Generic or "middle-of-the-road" responses: AI defaults to safe, bland content that says nothing new. The result reads like a summary of the top 10 Google results—technically correct but adding zero unique value.

Lack of originality: AI recombines existing information rather than generating genuine insights. If your content sounds like everything else on the topic, it won't stand out or rank well.

Missing context or nuance: AI struggles with industry-specific context, regional differences, and audience-specific knowledge. It might give advice that's technically sound but impractical for your specific situation.

How to Catch These Issues

Red flags to watch for:

Suspiciously specific statistics without clear sources

Quotes attributed to people you can't verify

Content that reads like a textbook rather than addressing real-world problems

Claims that sound too good (or too neat) to be true

Uniform sentence structure and paragraph length throughout

Overuse of filler phrases like "it's important to note" or "in today's landscape"
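Some of these red flags can be pre-screened automatically. As a rough sketch, the function below flags sentences that contain a percentage but no obvious source cue. The cue list and the regular expressions are assumptions and a crude heuristic. Treat the results as a list of things to verify, not as a verdict.

```python
import re

PERCENT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s?%")
# Assumed cue words; extend this list to match how your writers cite sources.
SOURCE_CUES = ("according to", "source:", "report", "study", "survey", "http")


def unsourced_statistics(text: str) -> list:
    """Return sentences that contain a percentage but no source cue."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        has_stat = PERCENT_PATTERN.search(sentence) is not None
        has_cue = any(cue in sentence.lower() for cue in SOURCE_CUES)
        if has_stat and not has_cue:
            flagged.append(sentence)
    return flagged


if __name__ == "__main__":
    draft = ("Open rates rose 42% last year. "
             "According to an industry survey, 18% of users churned. "
             "Email is popular.")
    for sentence in unsourced_statistics(draft):
        print("CHECK:", sentence)
```

A sentence that passes this filter can still cite a source that does not exist, so the manual verification techniques below remain necessary.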

Verification techniques:

Google exact phrases to check for plagiarism or fabrication

Search for cited studies, reports, and statistics in academic databases

Ask subject matter experts to review technical claims

Use fact-checking tools and databases for key assertions

Cross-referencing strategies:

Compare AI output against 2-3 authoritative sources on the same topic

Run the same prompt through multiple AI models and compare outputs

Check industry-specific databases and reports for data validation

Verify company names, product names, and URLs still exist and are current

Tools for AI Content Evaluation

You don't need to build everything from scratch. Here are tools that can support your evaluation workflow.

Free evaluation tools:

Grammarly / LanguageTool: Catch grammar, spelling, and clarity issues in generated content

Hemingway Editor: Assess readability and sentence complexity

Google Fact Check Explorer: Cross-reference claims against fact-checked sources

Copyscape (limited free checks): Detect plagiarism or overly derivative content

Custom spreadsheets: Build your own scoring templates using the rubric frameworks in this guide

Paid platforms and services:

Metaflow AI: A platform for building your own unique eval workflows and agents

Originality.ai: Detects AI-generated content and checks for plagiarism

Content at Scale: Offers AI detection and content optimization

Surfer SEO / Clearscope: Evaluate content against SEO best practices and top-ranking pages

Custom LLM evaluation pipelines: Build automated scoring systems using API access to models like GPT-4 or Claude

Building custom evaluation workflows:

Use spreadsheet templates to track scores across content pieces

Set up automated evaluation prompts in your preferred AI platform

Create Slack or email notification workflows for content that fails quality checks

Build dashboards to monitor quality trends over time

The best tool is the one you'll actually use. Start with free options, prove the value, then invest in paid tools as your process matures.

Best Practices for Beginners

If you're just starting with AI content evaluation, these seven principles will set you up for success.

1. Start small and scale up. Don't try to evaluate everything at once. Begin with your highest-stakes content type—maybe blog posts or client-facing emails—and build your process there before expanding.

2. Document your process. Write down your evaluation steps, criteria, and standards from day one. Documentation turns tribal knowledge into a repeatable system that survives team changes.

3. Create evaluation guidelines. Give evaluators clear, specific instructions. "Check for accuracy" is vague. "Verify all statistics against their cited sources and flag any claims without sources" is actionable.

4. Calibrate with multiple reviewers. If more than one person evaluates content, hold calibration sessions where everyone scores the same pieces and compares results. This reduces inconsistency between reviewers.

5. Iterate and improve. Your first rubric won't be perfect. Review it monthly, adjust criteria weights based on what you're finding, and add new criteria as new issues emerge.

6. Maintain a feedback loop. Connect evaluation findings back to your content creation process. If evaluations consistently flag accuracy issues on technical topics, improve your prompts for those topics specifically.

7. Stay updated on AI capabilities. AI models improve rapidly. What was a common failure mode six months ago might be resolved today—and new issues may emerge. Revisit your evaluation criteria quarterly.

Real-World Examples

Example 1: Evaluating AI Blog Posts

Scenario: A SaaS company uses AI to draft 15 blog posts per week for their content marketing program. Traffic has stagnated despite increased publishing volume.

Evaluation criteria used: Accuracy (30%), originality (25%), SEO optimization (20%), readability (15%), brand voice (10%).

Results and actions taken: Evaluation revealed that 80% of posts scored below 2/5 on originality—the AI was producing content nearly identical to existing top-ranking articles. Accuracy scores were decent (3.5/5 average), but the content wasn't adding anything new.

The fix: The team revised their prompts to include proprietary data, customer quotes, and unique perspectives. They reduced publishing volume to 8 posts per week but saw a 40% increase in organic traffic within two months because the content actually provided unique value.

Example 2: Evaluating AI Product Descriptions

Scenario: An e-commerce brand generates product descriptions for 500+ SKUs using AI. Return rates have increased, and customer support tickets mention "misleading descriptions."

Evaluation approach: The team implemented automated evaluation with a focus on accuracy (matching product specs) and completeness (covering key features buyers care about). A sample of 50 descriptions was manually reviewed to calibrate the automated system.

Lessons learned: The AI was embellishing product capabilities—adding features that didn't exist or overstating performance specs. By adding product spec sheets to the prompt context and implementing automated accuracy checks against the product database, description accuracy improved from 65% to 94%, and related support tickets dropped by 60%.

Example 3: Evaluating AI Customer Support Responses

Scenario: A tech company uses AI to draft customer support responses, which agents review before sending. Response quality varies widely, and customer satisfaction scores have declined.

Hybrid evaluation method: Automated evaluation flags any response that scores below a set threshold on empathy, accuracy, or completeness. Flagged responses go to a senior agent for review. All responses are tracked against customer satisfaction ratings so the evaluation model can be recalibrated continuously.

Outcomes: Average response quality score improved from 3.1 to 4.3 out of 5. Agent review time decreased by 35% because the automated filter caught most issues before human review. Customer satisfaction scores returned to pre-AI levels within six weeks.
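The routing step in this hybrid setup could look something like the sketch below. The three criteria come from the example; the threshold of 3.0, the queue names, and the sample scores are assumptions for illustration.

```python
CRITERIA = ("empathy", "accuracy", "completeness")
THRESHOLD = 3.0  # assumed minimum on a 1-5 scale


def route_response(scores: dict, threshold: float = THRESHOLD) -> tuple:
    """Send a draft to a senior agent if any criterion is below threshold."""
    failed = [name for name in CRITERIA if scores[name] < threshold]
    queue = "senior_agent" if failed else "standard_review"
    return queue, failed


# Hypothetical automated scores for one drafted reply.
draft = {"empathy": 2.5, "accuracy": 4.0, "completeness": 3.5}
queue, failed = route_response(draft)
print(queue, failed)
```

Returning the failed criteria along with the queue gives the senior agent a starting point, and it gives you data on which criterion trips the filter most often.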

Frequently Asked Questions

How long does AI content evaluation take?

Manual evaluation typically takes 5-15 minutes per piece depending on length and complexity. Automated evaluation runs in seconds. For most teams, a hybrid approach averages 2-5 minutes per piece once the system is calibrated.

Do I need technical skills to evaluate AI content?

No. Manual evaluation requires subject matter knowledge and clear criteria—not coding skills. Automated evaluation does require some technical setup, but many platforms offer no-code solutions. Start with manual evaluation and add automation as you grow.

How often should I evaluate AI content?

Evaluate every piece before publishing until you have a reliable track record. Once you've established consistent quality with specific prompt templates, you can move to spot-checking (evaluate 20-30% of output) for those templates while continuing full evaluation for new content types.
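If you want to automate that rule, a rough sketch might look like this. The 25% sample rate sits inside the 20-30% range above; the template names and the idea of a "trusted" set are hypothetical.

```python
import random
from typing import Optional


def needs_review(
    template_id: str,
    trusted_templates: set,
    sample_rate: float = 0.25,
    rng: Optional[random.Random] = None,
) -> bool:
    """Review everything from unproven templates; spot-check trusted ones."""
    if template_id not in trusted_templates:
        return True  # new content type: full evaluation
    rng = rng or random.Random()
    return rng.random() < sample_rate  # trusted template: random sample


# Hypothetical templates with an established track record.
trusted = {"weekly-newsletter", "product-update"}

# A seeded generator keeps this demo repeatable.
rng = random.Random(0)
picked = sum(
    needs_review("weekly-newsletter", trusted, rng=rng) for _ in range(10_000)
)
print(f"spot-checked {picked / 10_000:.1%} of trusted output")
print(needs_review("case-study", trusted))
```

Random sampling matters here. If you always check the first few pieces of the week, you will miss issues that only show up later in a batch.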

What's the difference between AI evaluation and AI testing?

AI testing typically refers to evaluating the AI model itself—its capabilities, limitations, and performance benchmarks. AI content evaluation focuses specifically on the output—is this particular piece of content good enough to publish? Testing happens during model development; evaluation happens during content production.

Can AI evaluate its own content?

Yes, with caveats. LLM-as-a-judge approaches work well for objective criteria like grammar, structure, and format compliance. They're less reliable for subjective criteria like brand voice, emotional resonance, and cultural sensitivity. Use AI evaluation as a first pass, not a final check.

How do I measure ROI on evaluation efforts?

Track four metrics: content revision rate (which should decrease over time), publishing errors caught before going live, audience engagement, and time saved by catching issues early rather than fixing them after publication. Most teams see positive ROI within the first month.
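As one example, revision rate is easy to track from a simple log. The sketch below assumes you record, for each piece, the week it was produced and whether it needed revision. The data is made up for illustration.

```python
from collections import defaultdict


def revision_rate_by_week(records: list) -> dict:
    """Map each week to the share of pieces that needed revision."""
    totals = defaultdict(int)
    revised = defaultdict(int)
    for week, was_revised in records:
        totals[week] += 1
        revised[week] += int(was_revised)
    return {week: revised[week] / totals[week] for week in sorted(totals)}


# Hypothetical log: (week number, needed revision?)
log = [
    (1, True), (1, True), (1, False), (1, True),
    (2, True), (2, False), (2, False), (2, False),
]

rates = revision_rate_by_week(log)
print(rates)
```

A falling rate from week to week is the signal you are looking for. A flat or rising rate suggests the evaluation findings are not making it back into your prompts.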

What if I find major issues with AI content?

Don't panic. Document the issue, identify the root cause (bad prompt, model limitation, missing context), and fix the process. If problematic content was already published, correct or remove it promptly. Use the finding to update your evaluation criteria and prevent recurrence.

How is AI content evaluation different from traditional content review?

Traditional content review assumes a human author who understands context, has real expertise, and makes intentional choices. AI content evaluation must additionally check for fabricated information, training data artifacts, pattern-based rather than knowledge-based claims, and the absence of genuine expertise. The criteria overlap significantly, but AI content requires extra vigilance on accuracy and originality.

Next Steps: Building Your AI Evaluation Process

You now have a complete foundation for evaluating AI-generated content. Here's your action plan:

Week 1: Foundation

Define your top 5-7 evaluation criteria based on your content goals

Create a simple rubric using the templates in this guide

Evaluate 5 pieces of existing AI-generated content to test your rubric

Week 2: Calibration

Refine your rubric based on Week 1 findings

If working with a team, hold a calibration session

Establish your ground truth with 10-15 golden examples

Week 3: Implementation

Integrate evaluation into your content workflow

Begin tracking quality scores and patterns

Identify your most common quality issues

Week 4: Optimization

Use evaluation data to improve your AI prompts

Consider automating parts of your evaluation process

Set quality benchmarks and publishing thresholds

Resources for continued learning:

NIST AI Risk Management Framework for enterprise-level evaluation standards

Stanford HAI research for the latest in AI evaluation methodology

Industry-specific AI guidelines from relevant regulatory bodies

Conclusion

AI content evaluation isn't optional—it's the skill that separates teams producing valuable content from those flooding the internet with noise. The frameworks, rubrics, and processes in this guide give you everything you need to start evaluating with confidence.

Remember: you don't need a perfect system on day one. Start with clear criteria, evaluate consistently, and improve iteratively. The organizations seeing the best results from AI content aren't the ones generating the most—they're the ones evaluating the smartest.

Your next step is simple. Pick one content type, build a basic rubric using the templates above, and evaluate your next five pieces of AI-generated content. You'll be surprised how quickly patterns emerge—and how much your content quality improves once you start paying attention.

The AI content revolution rewards those who combine speed with quality. Start evaluating today.

Building a reliable evaluation process is one thing. Scaling it across your entire content operation—without drowning in spreadsheets and manual reviews—is another. That's exactly the problem Metaflow solves. Our platform gives you structured evaluation workflows, built-in rubrics, and AI-assisted quality checks so you can go from raw AI output to publish-ready content with confidence.