TLDR: 58% of Google searches now end without a click, and AI-generated answers increasingly shape what buyers see, yet most brands have zero visibility into how ChatGPT, Perplexity, and Gemini position them. This guide reviews 8 AI visibility tools and explains how to measure Share of AI Voice (your mentions divided by total category mentions in LLM outputs). Start with Otterly.AI for multi-LLM tracking, Profound for enterprise GEO programs, or Peec AI for budget-conscious startups.

Your reputation is being discussed, evaluated, and recommended right now in thousands of AI-generated conversations you'll never see.

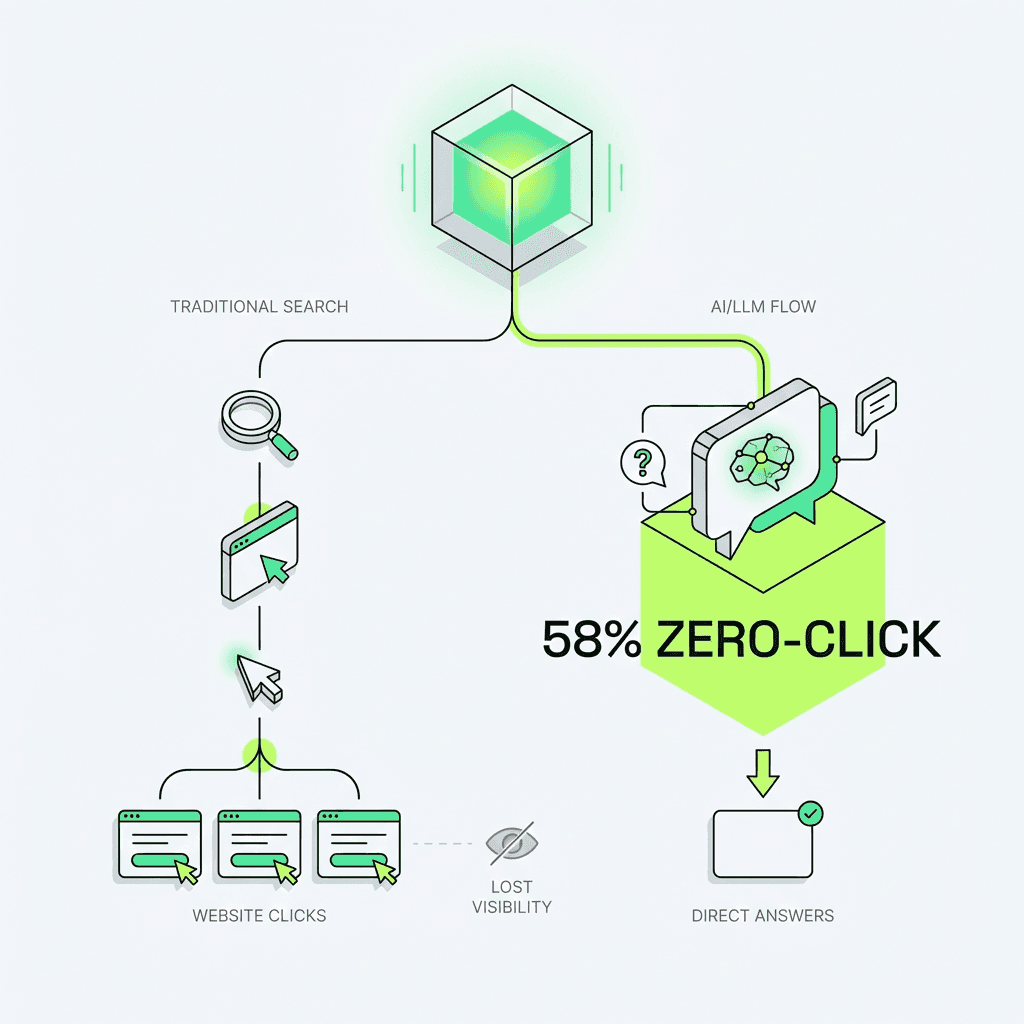

According to SparkToro's 2024 research, 58% of Google searches now end without a click. ChatGPT, Perplexity, Gemini, and Google's AI Overviews answer queries directly, synthesize recommendations, and shape purchase decisions before users reach your website. BrightEdge reports that 73% of marketers don't know how to optimize for generative engines or for [answer engine optimization (AEO)](https://metaflow.life/blog/ai-search-and-seo-the-rise-of-answer-engine-optimization-aeo).

Your Google Analytics dashboard is lying to you. It shows traffic, conversions, and attribution, but it's completely blind to the 58% of searches that end without a click. Your SEO KPI framework needs to evolve to close that gap. While you're optimizing meta descriptions and building backlinks, competitors are dominating the AI layer, getting cited in hundreds of ChatGPT conversations you can't measure.

I've spent the last two years helping B2B SaaS companies navigate this shift, including a Series B marketing platform that discovered 73% of their online mentions came from Reddit threads criticizing their pricing. The pattern is clear: the businesses winning in search aren't necessarily the ones ranking #1 on Google. They're the ones who understand that LLMs don't just retrieve information; they editorialize and choose what deserves to be cited, an effect that reflects the realities of entity-based SEO. Right now, most companies have zero visibility into whether they're winning or losing that game.

This isn't about vanity metrics. What matters is controlling your reputation footprint before it compounds into millions of AI-generated answers that shape buyer perception without your knowledge.

Why AI Brand Monitoring Tools Matter (The Zero-Click Problem)

Traditional monitoring tracks mentions across social media, news sites, and online platforms. That infrastructure was built for a world where discovery happened through search engines and social feeds, channels you could measure.

The AI layer breaks that model entirely. To grasp the shift, compare how search engines work with how LLMs reason.

When someone asks ChatGPT "What's the best marketing automation platform for B2B SaaS?", the LLM doesn't just retrieve; it reasons. It synthesizes information from its training data, performs real-time web searches (depending on the model), evaluates authority signals, and constructs a narrative. Only 12-15% of companies in a given category get cited in these responses, according to Profound AI's internal research.

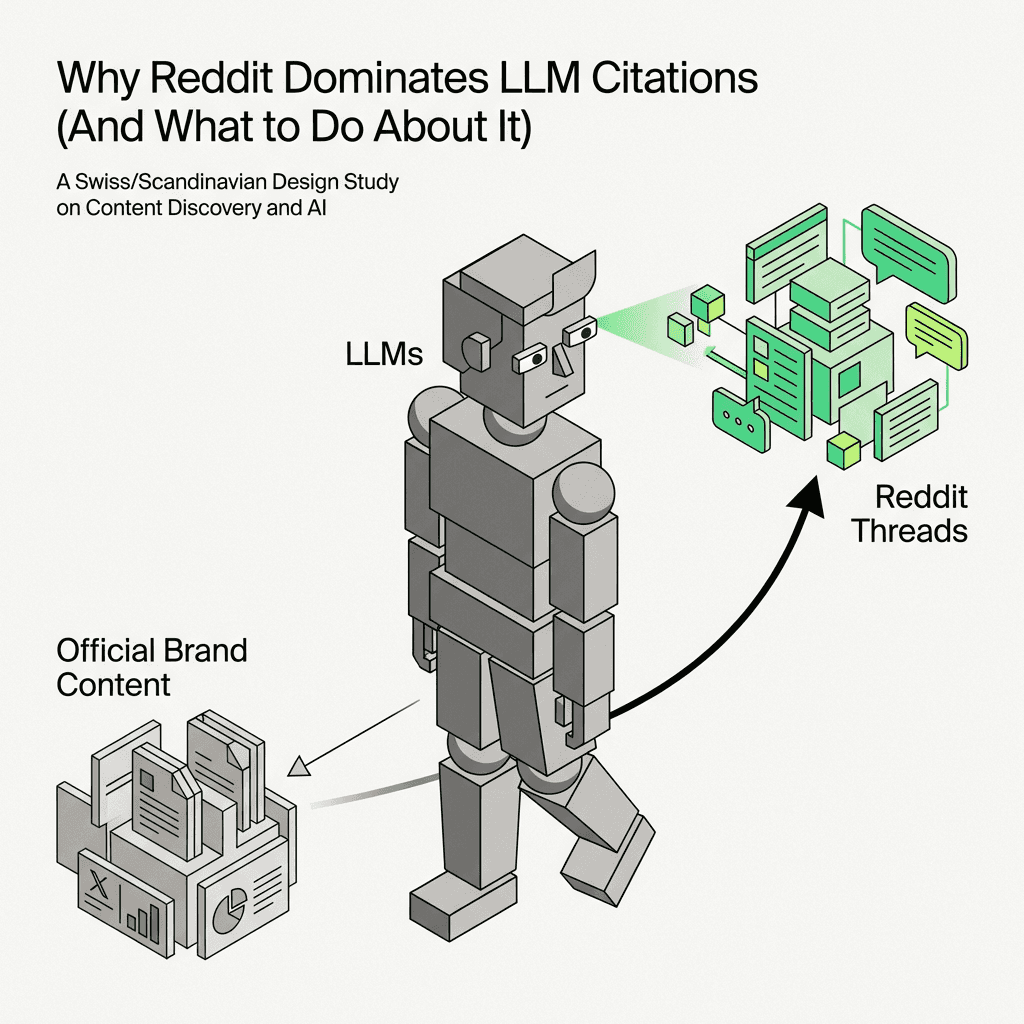

The businesses that get cited aren't always the market leaders. Nightwatch's analysis found that Reddit is cited 3.2x more frequently than company websites in product recommendation queries. Your carefully crafted positioning? Your case studies? Your thought leadership? An LLM may ignore all of it in favor of a Reddit thread from 2023.

Citation asymmetry is the disconnect between your traditional SEO footprint and your AI visibility. You can rank #1 for "marketing automation" on Google and still be invisible when 10,000 people ask ChatGPT for recommendations this month.

The problem compounds because LLMs are non-deterministic: ask the same question twice and you may get different answers. Ask across ChatGPT, Perplexity, and Gemini, and SE Ranking's research shows they cite different sources ~40% of the time. You're not optimizing for one algorithm anymore; you're optimizing for multiple reasoning engines with different retrieval logic, training data, query fan-out behavior, and citation preferences.

How ChatGPT, Perplexity, and Gemini Choose Which Brands to Cite

Understanding these monitoring tools requires understanding how LLMs make citation decisions. It isn't a complete black box; citation behavior follows observable patterns.

Training Data vs. Real-Time Retrieval

ChatGPT has a training-data knowledge cutoff (the date varies by model version) plus optional web browsing. Perplexity performs live web searches for every query. Google AI Overviews pull from SERP results. Claude has historically relied primarily on training data. Each model has a fundamentally different relationship with "current" information.

Retrieval-Augmented Generation (RAG)

When LLMs need current data, they use RAG: search the web, retrieve relevant passages, synthesize an answer, and cite sources. Whether you get cited, and how prominently, depends on:

Semantic relevance: Does your material match query intent?

Authority signals: Domain authority, backlinks, recency

Structured clarity: A structured data strategy that helps the LLM easily extract and attribute information
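To make those three factors concrete, here is a toy re-ranker that scores retrieved passages and keeps the top few as "citations." It is an illustration only: the weights, the 365-day recency half-life, the structure bonus, and every field name are hypothetical assumptions, since no engine publishes how it actually weights these signals.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Passage:
    url: str
    relevance: float       # 0-1, e.g. embedding similarity to the query (assumed precomputed)
    authority: float       # 0-1, a normalized authority proxy (hypothetical)
    published: date
    well_structured: bool  # clear headings, definitions, or FAQ markup


def recency_score(published: date, today: date, half_life_days: int = 365) -> float:
    """Exponential decay: 1.0 for today's content, 0.5 after one half-life."""
    age_days = max((today - published).days, 0)
    return 0.5 ** (age_days / half_life_days)


def toy_score(p: Passage, today: date) -> float:
    # Invented weights for illustration only; real engines don't disclose theirs.
    score = (0.6 * p.relevance
             + 0.25 * p.authority
             + 0.15 * recency_score(p.published, today))
    if p.well_structured:
        score *= 1.1  # hypothetical bonus for easy-to-extract passages
    return score


def top_citations(passages: list[Passage], today: date, k: int = 3) -> list[str]:
    """Return the URLs of the k highest-scoring passages (the 'cited' sources)."""
    ranked = sorted(passages, key=lambda p: toy_score(p, today), reverse=True)
    return [p.url for p in ranked[:k]]
```

Because relevance carries the most weight in this toy, a highly relevant community thread can outrank a more authoritative but less on-topic product page, which is the pattern the next section describes.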

Why Reddit Dominates LLM Citations (And What to Do About It)

LLMs favor conversational, experience-driven material. A detailed Reddit comment explaining why someone switched from HubSpot to ActiveCampaign carries more citation weight than a generic "Top 10 Marketing Tools" listicle. Why? Because it demonstrates earned authority: real usage, specific trade-offs, contextual nuance.

When I tracked Share of AI Voice for a fintech client using Otterly, we discovered they had 8% visibility versus competitors' 22%. The culprit? Reddit threads about their customer support response times dominated LLM citations, while their own marketing materials got ignored.

This is the shift from SEO to GEO (Generative Engine Optimization): you're no longer optimizing for crawlers that count keywords. You're optimizing for reasoning engines that evaluate credibility, synthesize perspectives, and construct narratives.

What you can do:

Monitor which Reddit threads mention your company (use Brand24 or manual searches)

Participate authentically in relevant subreddits (r/SaaS, r/marketing, industry-specific communities)

Create material that answers the same questions Reddit users ask, but with more depth

Track whether your Reddit engagement improves Share of AI Voice over 60-90 days

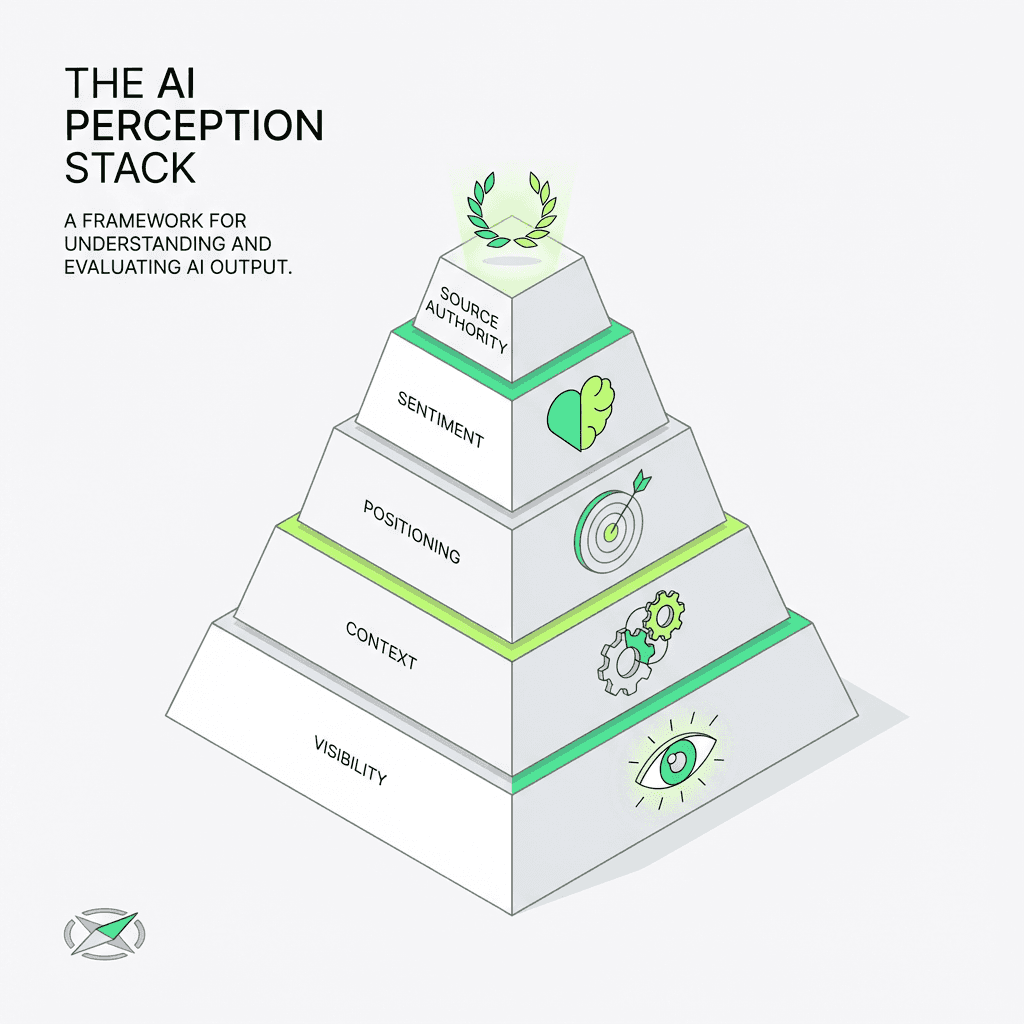

5 Metrics to Track Beyond Mentions (The AI Perception Stack)

Most monitoring software tells you if you're mentioned. The better question: how are you positioned, and is that perception driving pipeline or killing it?

Use this framework:

1. Visibility: Are you mentioned at all? (Binary: present or absent)

2. Context: What queries trigger your company? (Use cases, categories, problem spaces)

3. Positioning: Where do you rank in multi-company responses? (Share of AI Voice)

4. Sentiment: How are you described? (Recommended, neutral mention, criticized)

5. Source Authority: What sources feed LLM citations? (Your site, Reddit, reviews, news?)

Share of AI Voice: Your mentions divided by total category mentions in LLM outputs. The equivalent of traditional Share of Voice.

If ChatGPT recommends 5 CRM platforms and you're one of them, you have 20% Share of AI Voice for that query. Track this across 20-30 category queries, and you have a baseline.
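A minimal sketch of that calculation, assuming you've already extracted the list of brands each response recommends (the brand names are hypothetical). Each brand is counted at most once per response, and aggregating over many prompt runs gives the baseline.

```python
from collections import Counter


def share_of_ai_voice(responses: list[list[str]], brand: str) -> float:
    """Brand mentions divided by all brand mentions across a set of responses.

    Each inner list holds the brands one LLM response recommended for one prompt run.
    """
    counts = Counter()
    for brands in responses:
        counts.update(set(brands))  # count each brand at most once per response
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


one_run = [["HubSpot", "Salesforce", "Pipedrive", "Zoho", "Acme"]]
print(share_of_ai_voice(one_run, "Acme"))  # 0.2, i.e. 20% for this single response
```

With a second run that lists only HubSpot, Salesforce, and Zoho, Acme's share drops to 1 of 8 mentions (12.5%), which is why a single response is not a baseline.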

But volume alone is misleading. Being cited from a Reddit thread complaining about your pricing isn't the same as being cited from a case study demonstrating ROI. Citation quality beats citation quantity.

The other critical insight: LLM outputs are non-deterministic, so a single query tells you very little. You need longitudinal tracking (the same prompts over time) to detect real shifts in perception.

How to Measure Each Layer of the Stack

Visibility: Ask ChatGPT, Perplexity, and Gemini the same query 10 times each (for example, "What are the best [category] platforms?") and count how many times you appear. An appearance rate below 30% signals a visibility problem.
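A minimal sketch of that appearance-rate check, assuming you've saved the raw response text from each of the 10 runs per model. The 30% threshold is the rule of thumb above; the function names are hypothetical, and the case-insensitive substring match is deliberately naive (it would also count "Acme" inside "Acmeware").

```python
def appearance_rate(runs: list[str], brand: str) -> float:
    """Fraction of runs whose response text mentions the brand (case-insensitive)."""
    if not runs:
        return 0.0
    hits = sum(brand.lower() in text.lower() for text in runs)
    return hits / len(runs)


def visibility_report(runs_by_model: dict[str, list[str]], brand: str,
                      threshold: float = 0.30) -> dict[str, tuple[float, bool]]:
    """Per-model (appearance rate, below-threshold flag)."""
    report = {}
    for model, runs in runs_by_model.items():
        rate = appearance_rate(runs, brand)
        report[model] = (rate, rate < threshold)
    return report
```

For example, if Acme shows up in 2 of 10 ChatGPT runs, the report entry for that model is `(0.2, True)`: a 20% appearance rate, flagged as a visibility problem.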

Context: Track which query types trigger your company:

Direct category queries: "best CRM software"

Use case queries: "CRM for startups"

Competitor displacement: "HubSpot alternatives"

Problem-solution: "how to automate lead nurturing"

Use Otterly or Profound to run 20-30 variations and map which contexts trigger citations.
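If you export raw results instead of relying on a dashboard, a context map is just a citation rate grouped by query type. A minimal sketch, assuming each result has already been tagged with its query type and a yes/no "brand cited" flag (the tuple layout is hypothetical):

```python
from collections import defaultdict


def context_map(results: list[tuple[str, str, bool]]) -> dict[str, float]:
    """Citation rate per query type.

    Each result is (query_type, query_text, brand_cited).
    """
    totals = defaultdict(int)
    cited = defaultdict(int)
    for query_type, _query, brand_cited in results:
        totals[query_type] += 1
        cited[query_type] += int(brand_cited)
    return {qt: cited[qt] / totals[qt] for qt in totals}
```

A result like `{"category": 0.5, "competitor": 0.0}` would say you show up in half of direct category queries but never in "alternatives" queries, which points at a specific content gap.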

Positioning: When you are cited, where do you rank? A first mention carries more weight than a fourth. Use AI search competitor analysis tools, such as Profound's citation source analysis or Otterly's competitor benchmarking, to track your average position.
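One rough way to approximate position yourself is to rank tracked names by where they first appear in each response. A minimal sketch under that assumption (order of first mention stands in for rank; names are hypothetical and matching is naive substring search):

```python
from typing import Optional


def mention_position(text: str, brand: str, competitors: list[str]) -> Optional[int]:
    """1-based rank of `brand` by order of first appearance among tracked names.

    Returns None when the brand isn't mentioned at all.
    """
    lowered = text.lower()
    first_seen = {}
    for name in [brand, *competitors]:
        idx = lowered.find(name.lower())
        if idx != -1:
            first_seen[name] = idx
    if brand not in first_seen:
        return None
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(brand) + 1


def average_position(texts: list[str], brand: str, competitors: list[str]) -> Optional[float]:
    """Average rank across only the responses that mention the brand."""
    ranks = []
    for text in texts:
        rank = mention_position(text, brand, competitors)
        if rank is not None:
            ranks.append(rank)
    return sum(ranks) / len(ranks) if ranks else None
```

Averaging only over responses that cite you keeps position separate from visibility, so the two metrics don't blur together.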

Sentiment: Review the exact language LLMs use. Are you "recommended for," "suitable for," or "criticized for" something? Use Brand24's sentiment analysis or manually review outputs to spot trends in how LLMs describe your business to buyers.
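If you review outputs manually, a phrase-based first pass can speed up triage. This is a crude keyword heuristic, not real sentiment analysis: the phrase lists are hypothetical and should be tuned to the language you actually see in your outputs.

```python
import re

# Hypothetical phrase lists; extend them with wording from your own outputs.
PATTERNS = {
    "criticized": re.compile(r"\bcriticized for\b|\bcomplaints? about\b|\bdrawbacks?\b", re.I),
    "recommended": re.compile(r"\brecommended for\b|\bbest (?:choice|option) for\b", re.I),
    "suitable": re.compile(r"\bsuitable for\b|\bworks (?:well )?for\b", re.I),
}


def classify_sentence(sentence: str) -> str:
    """Return the first matching label, checking criticism first."""
    for label in ("criticized", "recommended", "suitable"):
        if PATTERNS[label].search(sentence):
            return label
    return "neutral"


def brand_sentiment(text: str, brand: str) -> list[str]:
    """Label each sentence in a response that mentions the brand."""
    sentences = re.split(r"(?<=[.!?])\s+", text)
    return [classify_sentence(s) for s in sentences if brand.lower() in s.lower()]
```

Criticism is checked first so a sentence like "recommended for startups but criticized for pricing" lands in the bucket you most need to see.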

Source Authority: Use Profound to identify which URLs LLMs pull from. If Reddit dominates, you need a community strategy. If competitor review sites dominate, you need more third-party validation through customer reviews and feedback.
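Once you have the cited URLs (from a tool export or manual logging), bucketing them by domain shows which source type dominates. A minimal sketch using Python's standard `urllib.parse`; the bucket lists are hypothetical starting points, not a complete taxonomy.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical domain buckets; extend with the sources that matter in your category.
BUCKETS = {
    "community": {"reddit.com", "quora.com", "news.ycombinator.com"},
    "reviews": {"g2.com", "capterra.com", "trustradius.com"},
}


def bucket_for(url: str, own_domain: str) -> str:
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host == own_domain or host.endswith("." + own_domain):
        return "own_site"
    for bucket, domains in BUCKETS.items():
        if any(host == d or host.endswith("." + d) for d in domains):
            return bucket
    return "other"


def source_mix(cited_urls: list[str], own_domain: str) -> dict[str, float]:
    """Share of citations coming from each source bucket."""
    counts = Counter(bucket_for(u, own_domain) for u in cited_urls)
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}
```

A mix like 50% community, 25% reviews, 25% own site would argue for the community strategy described above before more first-party content.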

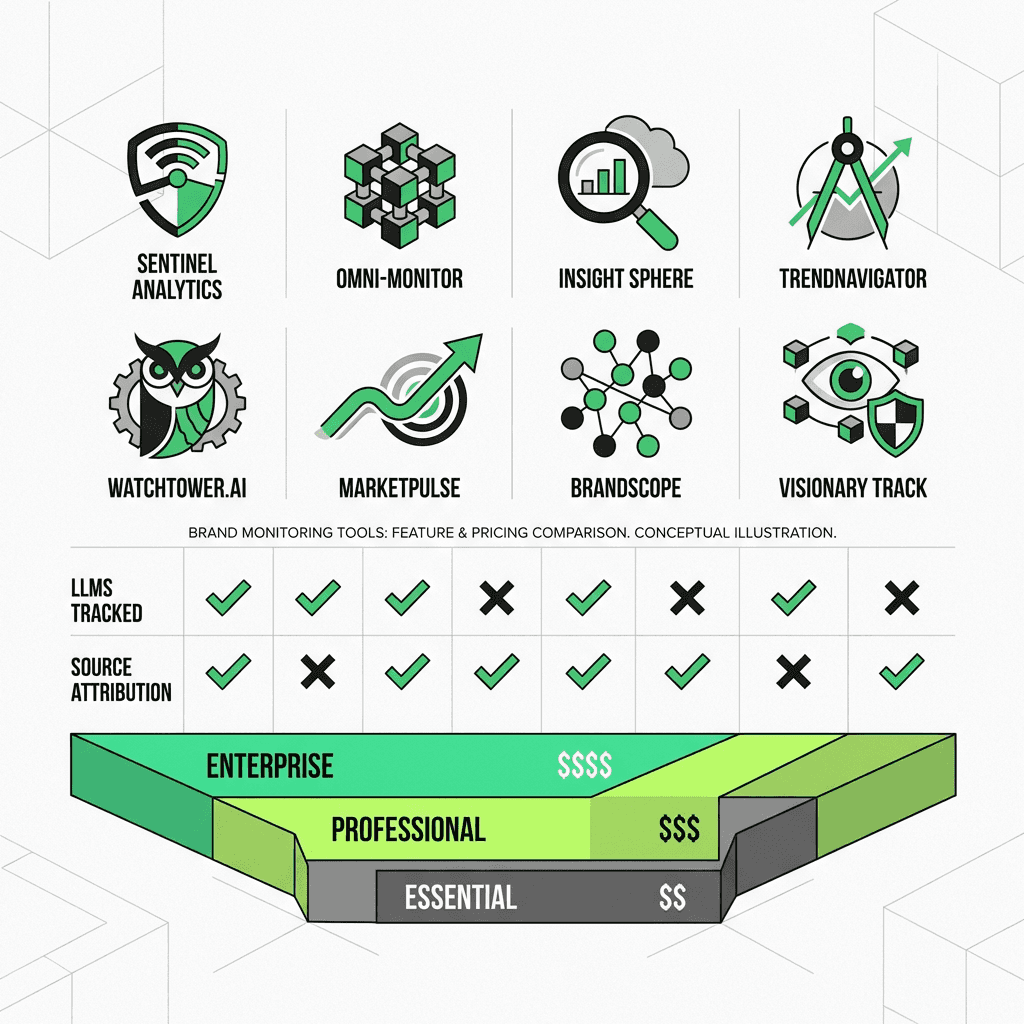

AI Brand Monitoring Tools Comparison

| Tool | LLMs Tracked | Source Attribution | Competitor Benchmarking | Pricing Tier | Best For |
|---|---|---|---|---|---|
| Otterly.AI | ChatGPT, Perplexity, Gemini, AI Overviews | Limited | Yes | Mid-tier | Multi-LLM tracking |
| Profound | ChatGPT, Perplexity, Gemini, Shopping | Deep | Yes | Premium | Enterprise GEO programs |
| SE Ranking | Google AI Overviews | Moderate | Limited | Affordable | SEO teams |
| Peec AI | ChatGPT, Claude | No | No | Budget | Startups |
| Knowatoa | Multiple | Yes | Yes (competitive focus) | Mid-tier | Competitor intelligence |
| Brand24 | Multiple (shallow) | No | No | Mid-tier | Unified social + AI listening |
| Gumloop | Custom | Custom | Custom | Variable | Technical teams |
| ZipTie.Dev | API-based | API-based | Custom | Developer-tier | Engineering-led teams |

The 8 Best AI Brand Monitoring Tools (Tested & Ranked)

I've tested these platforms across early-stage startups and growth-stage B2B companies. What actually works, what doesn't, and when to use each one.

1. Otterly.AI

What it does: Multi-LLM tracking across ChatGPT, Perplexity, Gemini, and Google AI Overviews.

Best for: Companies that need broad coverage and competitor benchmarking.

Pros:

Tracks Share of AI Voice across multiple models

Longitudinal tracking detects trends over time

Clean dashboard with competitor positioning insights

Shows which queries trigger your company versus competitors

Real-time alerts for sentiment changes

Cons:

Pricing scales quickly for enterprise use cases (starts at ~$500/month for comprehensive tracking)

Limited source attribution; tells you you're cited, not always why

Doesn't track Reddit or community mentions separately

Verdict: The most comprehensive multi-LLM tracker. Start here if you're serious about online reputation management. When I used Otterly with a Series A SaaS client, we discovered they appeared in only 12% of category queries versus their main competitor's 34%. The data justified a complete GEO strategy overhaul.

2. Profound

What it does: LLM tracking plus GEO recommendations, with agent analytics and shopping result tracking.

Best for: E-commerce and transactional queries (product recommendations, shopping).

Pros:

Deep citation source analysis shows which URLs LLMs pull from

Tracks shopping engines (Perplexity Shopping, ChatGPT plugins)

GEO recommendations tell you what to optimize

Best software for understanding why competitors get cited over you

Advanced analytics and performance reports

Cons:

Overkill for early-stage companies

Steeper learning curve

Premium pricing (contact for quote, typically $1,000+/month)

Verdict: The power-user solution for enterprise teams running serious GEO programs. Use Profound when you need to reverse-engineer competitor citations. One e-commerce client discovered their product pages lacked the product schema markup that Perplexity Shopping prioritized. Fixing that increased their visibility by 40% in 60 days.

3. SE Ranking

What it does: AI Overviews citation auditing, SERP-focused.

Best for: SEO teams extending into AI search visibility.

Pros:

Integrates with existing SE Ranking SEO workflows

Strong Google AI Overview tracking

Affordable for existing SE Ranking users (add-on to existing plans)

Keyword tracking features

Cons:

Limited coverage of non-Google LLMs (ChatGPT, Perplexity)

More focused on SERP-based outputs than conversational chat

Doesn't track Reddit or community sources

Verdict: Great if you're already using SE Ranking for SEO. Not comprehensive enough as a standalone solution. Use this to understand Google AI Overviews specifically, but pair it with Otterly or Peec for conversational LLM tracking.

4. Peec AI

What it does: Conversational LLM tracking (ChatGPT, Claude).

Best for: Startups and smaller teams on a budget.

Pros:

Simple, affordable entry point (starts at ~$99/month)

Focuses on conversational LLMs where most queries happen

Easy setup, minimal learning curve

Good for baseline visibility checks

Free trial available

Cons:

Doesn't track Perplexity or Google AI Overviews

Limited competitor benchmarking

No source attribution

Verdict: Start here if you're early-stage and just need baseline visibility. Peec won't tell you why you're cited or not cited, but it will tell you if you're showing up in ChatGPT responses. One pre-seed startup used Peec to discover they had zero visibility despite ranking on page one for their primary keyword. That insight justified their first GEO hire.

5. Knowatoa

What it does: Competitor analysis using the BISCUIT Framework (why LLMs recommend competitors over you).

Best for: Competitive intelligence and positioning gaps.

Pros:

Focuses on why competitors get cited, not just that they do

Useful for understanding citation logic

Helps identify gaps and positioning weaknesses

Insights into competitor campaigns and strategies

Cons:

Narrow use case; not full-spectrum tracking

Less useful for tracking your own company

Limited LLM coverage

Verdict: Use as a supplement to Otterly or Profound when you need to reverse-engineer competitor citations. Knowatoa excels at answering "Why does ChatGPT recommend Competitor X over us?" One client discovered their competitor dominated citations because they had 10x more comparison materials ("X vs Y" articles). Knowatoa surfaced that gap in 20 minutes.

6. Brand24

What it does: Traditional social and web listening plus mention tracking.

Best for: Teams already using Brand24 for social listening.

Pros:

Unified dashboard (social media, web, mentions)

Familiar interface for existing users

Sentiment analysis across channels

Affordable (starts at ~$99/month)

Social media monitoring features

Cons:

AI tracking feels bolted-on, not native

Shallow LLM coverage

Better for awareness than GEO optimization

Verdict: Fine if you're already a Brand24 customer and want to add basic AI tracking. Not best-in-class for AI-specific needs. Use Brand24 to monitor Reddit threads and social mentions that feed into LLM training data, but pair it with a dedicated solution for citation tracking.

7. Gumloop

What it does: Workflow automation for AI mention tracking (DIY approach).

Best for: Technical teams that want to build custom workflows.

Pros:

Flexible, customizable

Can integrate with internal platforms

Cost-effective if you have engineering resources

Cons:

Requires setup and maintenance

Not plug-and-play

No pre-built dashboards or benchmarks

Verdict: This DIY approach mirrors programmatic SEO thinking: automating at scale to cover large query sets. One engineering-led startup built a Gumloop workflow that queried ChatGPT, Perplexity, and Claude daily, logged results to a database, and triggered Slack alerts when competitor mentions spiked. Total cost: ~$50/month in API fees versus $500/month for Otterly.
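A minimal sketch of the logging and alerting half of a workflow like that, using Python's built-in `sqlite3`. It assumes responses are fetched elsewhere (for example, through each provider's API or a Gumloop node); the table layout, the 7-day window, and the 2x spike factor are hypothetical choices, and the Slack step is only noted in a comment.

```python
import sqlite3
from datetime import date
from statistics import mean


def init_db(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS runs (day TEXT, model TEXT, prompt TEXT, response TEXT)"
    )


def log_run(conn: sqlite3.Connection, day: date, model: str, prompt: str, response: str) -> None:
    conn.execute(
        "INSERT INTO runs (day, model, prompt, response) VALUES (?, ?, ?, ?)",
        (day.isoformat(), model, prompt, response),
    )
    conn.commit()


def daily_mentions(conn: sqlite3.Connection, name: str) -> list[int]:
    """Responses per day (oldest first) that mention `name`, case-insensitively."""
    rows = conn.execute(
        "SELECT day, SUM(instr(lower(response), lower(?)) > 0) "
        "FROM runs GROUP BY day ORDER BY day",
        (name,),
    ).fetchall()
    return [int(count) for _day, count in rows]


def is_spike(counts: list[int], factor: float = 2.0, window: int = 7) -> bool:
    """True if today's count is at least `factor` x the trailing average.

    The baseline is floored at 1 so a zero history doesn't make every mention a spike.
    In a real workflow, a True result would trigger a Slack webhook post (not shown).
    """
    if len(counts) < 2:
        return False
    baseline = mean(counts[-window - 1:-1])
    return counts[-1] >= factor * max(baseline, 1)
```

Keeping raw responses in the table, rather than only counts, lets you re-score history later if you change how mentions are detected.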

8. ZipTie.Dev / Gumshoe.AI

What it does: Developer-focused LLM tracking via API.

Best for: Engineering-led teams building custom infrastructure.

Pros:

API-first (integrates with existing data pipelines)

Granular control over queries and tracking

Cost-effective at scale

Cons:

Not for non-technical users

Requires custom dashboard and reporting layer

No competitor benchmarking out of the box

Verdict: Use if you're building a proprietary GEO system and need raw data access. ZipTie.Dev provides programmatic access to LLM outputs, but you build everything else. One enterprise client used ZipTie to track 500+ category queries daily across 6 LLMs and fed the results into their internal BI tool, a setup that wasn't possible with off-the-shelf solutions.

Example Queries to Track for AI Brand Monitoring

Track 20-30 variations across these query types:

Direct category queries:

"best [category] platforms"

"top [category] software"

"[category] solutions"

Use case queries:

"[category] for [audience]" (e.g., "CRM for startups")

"[category] for [job to be done]" (e.g., "marketing automation for lead nurturing")

"[category] with [feature]" (e.g., "project management with time tracking")

Competitor displacement:

"[competitor] alternatives"

"[competitor] vs [your company]"

"better than [competitor]"

Problem-solution:

"how to [job to be done]"

"best way to solve [problem]"

"[problem] solution"

Run each query 10 times per LLM (ChatGPT, Perplexity, Gemini) to account for non-deterministic outputs. Track monthly to detect trends and identify opportunities to improve your online reputation.
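To keep runs consistent month to month, it helps to generate the prompt set and run plan from templates instead of retyping queries. A minimal sketch; the template subset, placeholder names, and model labels are hypothetical.

```python
from itertools import product

# A subset of the query templates above; placeholders are filled per category.
TEMPLATES = {
    "direct": ["best {category} platforms", "top {category} software"],
    "use_case": ["{category} for {audience}"],
    "displacement": ["{competitor} alternatives", "{competitor} vs {brand}"],
}


def expand(templates: dict[str, list[str]], values: dict[str, str]) -> list[tuple[str, str]]:
    """Fill every template, keeping its query type for later analysis."""
    return [(qtype, t.format(**values)) for qtype, items in templates.items() for t in items]


def run_plan(prompts: list[tuple[str, str]], models: list[str],
             runs_per_model: int = 10) -> list[tuple[str, str, str, int]]:
    """One (query_type, prompt, model, run_index) entry per planned query."""
    return [(qtype, prompt, model, i)
            for (qtype, prompt), model, i in product(prompts, models, range(runs_per_model))]


values = {"category": "CRM", "audience": "startups", "competitor": "HubSpot", "brand": "Acme"}
plan = run_plan(expand(TEMPLATES, values), ["chatgpt", "perplexity", "gemini"])
print(len(plan))  # 5 prompts x 3 models x 10 runs = 150
```

The plan size grows quickly: 30 prompts across three models at 10 runs each is 900 queries a month, which is where automated tools start to pay for themselves.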

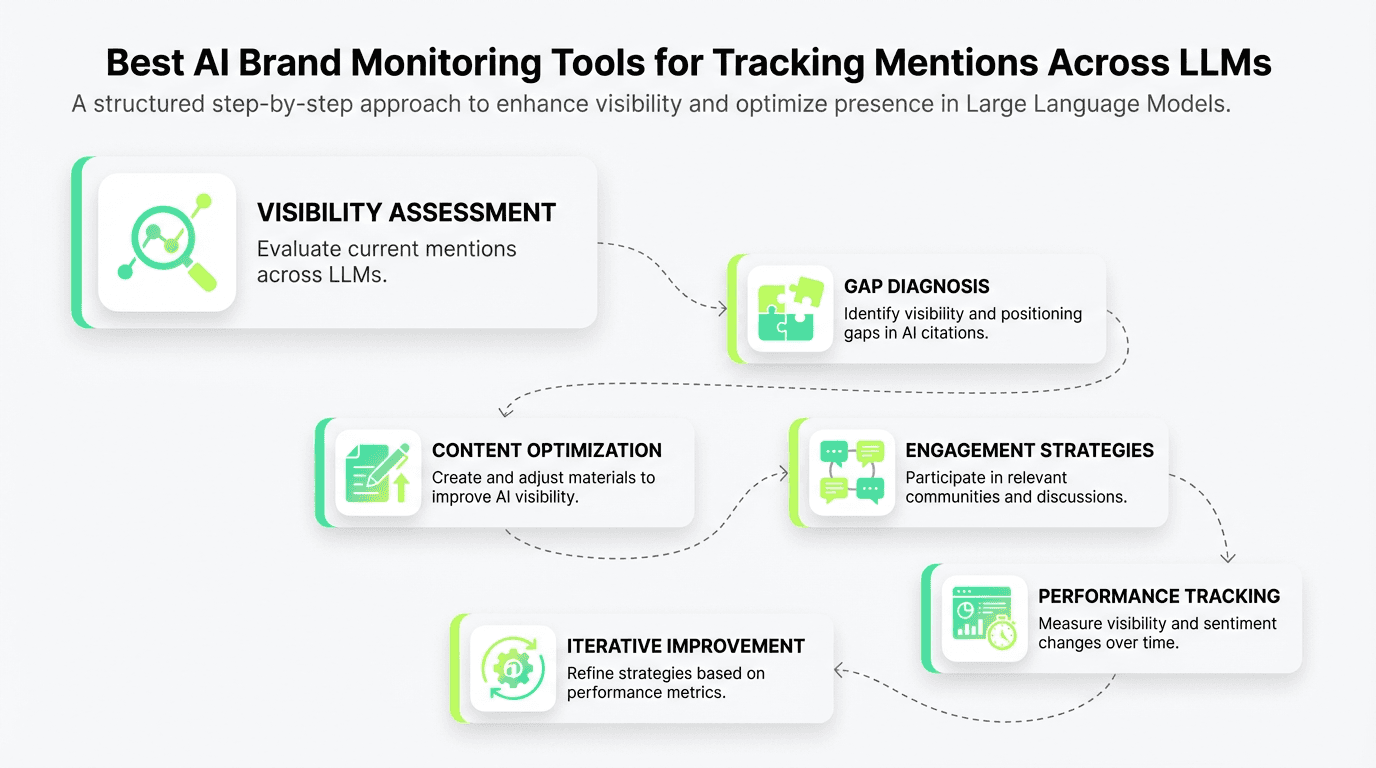

From AI Brand Monitoring Tools to GEO Action (Implementation Workflow)

Monitoring without GEO action is surveillance theater. You need a closed-loop system: monitor, diagnose, optimize, measure.

Month 1: Establish Baseline

Weeks 1-2: Set up tracking

Choose your software (Otterly for most teams, Peec if budget-constrained, Profound if enterprise)

Define 20-30 category queries to track

Run initial Share of AI Voice audit across ChatGPT, Perplexity, Gemini

Weeks 3-4: Diagnose gaps

Identify visibility gaps (queries where you don't appear)

Analyze positioning gaps (queries where competitors rank higher)

Review source attribution (what materials LLMs cite when they mention you)

Months 2-3: Optimize Content

Address visibility gaps:

Create material targeting queries where you're absent, and build an AI content pipeline to scale production

Focus on conversational, experience-driven formats (case studies, comparison articles, how-to guides)

Optimize existing materials for LLM extraction (add clear definitions, structured data, FAQ sections)

Strengthen social media presence with customer testimonials and reviews

Address positioning gaps:

Analyze why competitors get cited first (use Knowatoa or Profound)

Create comparison materials ("X vs Y" articles)

Build authority signals (Reddit participation, third-party reviews, earned media)

Engage with customers on social media platforms to generate authentic conversations

Address source authority gaps:

If Reddit dominates, participate authentically in relevant communities

If review sites dominate, invest in your G2, Capterra, and TrustRadius presence and actively collect customer reviews

If competitor blogs dominate, publish more authoritative thought leadership

Monitor social media conversations and respond to customer inquiries in real time

Month 4+: Measure and Iterate

Track Share of AI Voice monthly:

Rerun the same 20-30 queries

Calculate visibility rate (% of queries where you appear)

Calculate average positioning (where you rank when cited)

Track sentiment shifts (are descriptions improving?)

Generate performance reports for stakeholders
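A minimal sketch of the month-over-month comparison, assuming each month's run is summarized as queries tracked, queries where you appeared, and your rank each time you were cited. The field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MonthlySnapshot:
    month: str               # e.g. "2024-05"
    queries_tracked: int
    queries_with_brand: int
    positions: list[int]     # your rank each time you were cited

    @property
    def visibility_rate(self) -> float:
        return self.queries_with_brand / self.queries_tracked if self.queries_tracked else 0.0

    @property
    def average_position(self) -> Optional[float]:
        return sum(self.positions) / len(self.positions) if self.positions else None


def compare(prev: MonthlySnapshot, curr: MonthlySnapshot) -> dict:
    """Change in visibility rate and average position between two months."""
    position_change = None
    if prev.average_position is not None and curr.average_position is not None:
        # Negative means you moved up (rank 2 is better than rank 3).
        position_change = curr.average_position - prev.average_position
    return {
        "visibility_change": curr.visibility_rate - prev.visibility_rate,
        "position_change": position_change,
    }
```

For instance, going from 5 of 25 queries (average rank 3.0) to 10 of 25 queries (average rank 2.0) shows up as a +0.2 visibility change and a -1.0 position change.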

Correlate with pipeline:

Tag deals by discovery source (ask "How did you first hear about us?")

Track whether AI-influenced deals close faster or slower

Calculate CAC for AI-discovered leads versus traditional channels

Analyze which campaigns drive the most engagement
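A minimal sketch of the pipeline comparison, assuming each deal is tagged with the answer to "How did you first hear about us?" and that you've decided which costs to attribute to each source. Both the source labels and the spend attribution are assumptions you'll need to define for your own team.

```python
from collections import defaultdict
from statistics import median


def pipeline_by_source(deals: list[dict], spend_by_source: dict[str, float]) -> dict:
    """Won deals, median days to close, and CAC per discovery source.

    Each deal is a dict with 'source', 'won' (bool), and 'days_to_close' (int).
    """
    grouped = defaultdict(list)
    for deal in deals:
        grouped[deal["source"]].append(deal)
    summary = {}
    for source, source_deals in grouped.items():
        won = [d for d in source_deals if d["won"]]
        spend = spend_by_source.get(source)
        summary[source] = {
            "won": len(won),
            "median_days_to_close": median(d["days_to_close"] for d in won) if won else None,
            "cac": spend / len(won) if won and spend is not None else None,
        }
    return summary
```

CAC here is simply attributed spend divided by won deals, so the result is only as credible as the spend attribution behind it.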

Iterate strategy:

Double down on content types that improve Share of AI Voice

Abandon tactics that don't move the needle after 90 days

Expand query tracking to adjacent categories as you gain visibility

Use insights from analytics to identify new opportunities in your industry

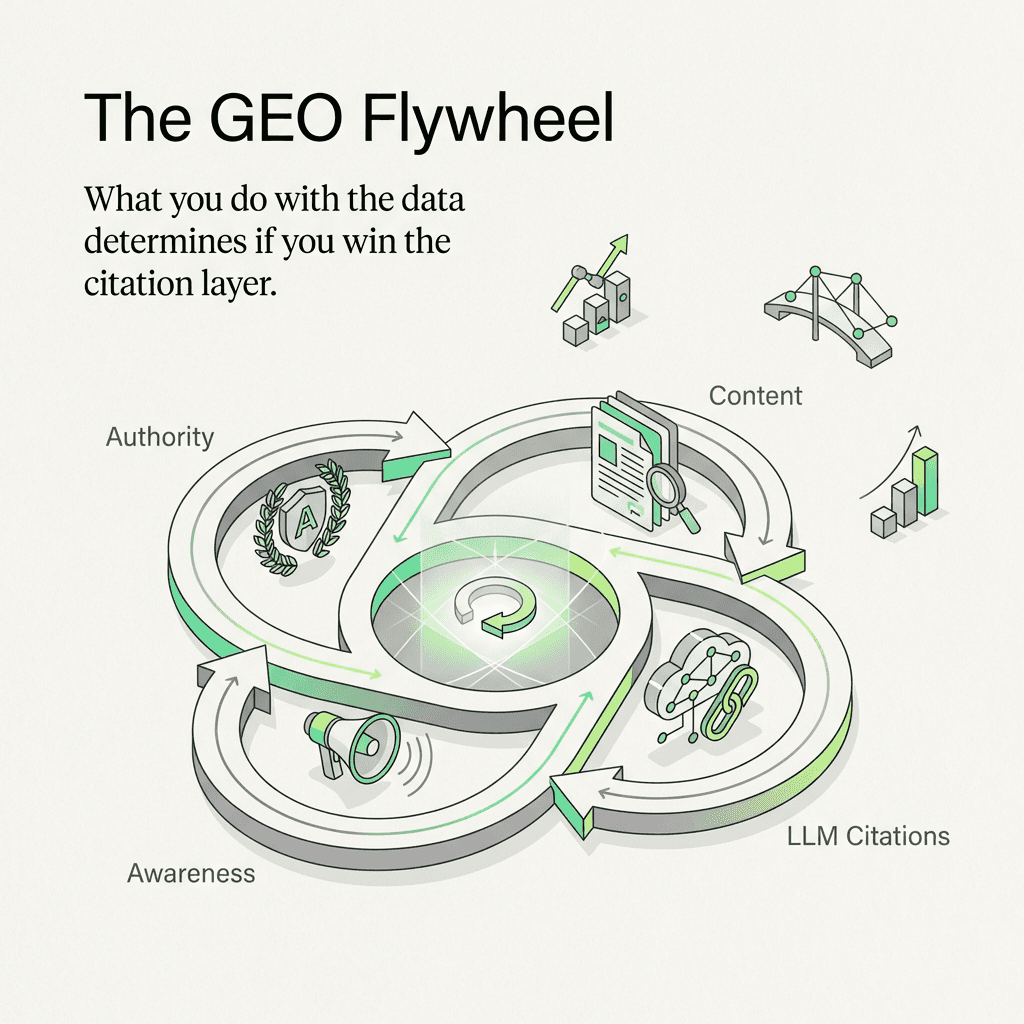

The GEO Flywheel

Better materials and AI content repurposing → Higher LLM citations → More awareness → More earned authority (Reddit, reviews) → Better training data footprint → Higher LLM citations

One Series B client went from 8% Share of AI Voice to 28% in six months using this workflow. They created 12 comparison articles, participated in 4 relevant subreddits, and optimized 30 existing blog posts for LLM extraction. Their AI-influenced pipeline grew from 3% to 19% of total ARR.

These monitoring solutions give you the data. What you do with it determines whether you win or lose the citation layer.

FAQ: AI Brand Monitoring Tools

How do AI brand monitoring tools work?

These platforms query LLMs (ChatGPT, Perplexity, Gemini, Claude) with predefined prompts, capture the responses, and analyze whether your company appears, how it's positioned, and what sources are cited. Most tools run each query multiple times to account for non-deterministic outputs and track changes over time, providing real-time alerts and performance reports.

What's the difference between AI Overviews and LLM chat tracking?

AI Overviews (Google's SERP feature) pull from search results and appear above organic listings. LLM chat tracking monitors conversational platforms like ChatGPT and Perplexity, which synthesize answers from training data and real-time web searches. You need to monitor both; they use different citation logic and serve different audience needs.

Which solution should I start with?

Start with Otterly.AI if you need comprehensive multi-LLM tracking and competitor benchmarking. Start with Peec if you're early-stage and budget-constrained (focuses on ChatGPT and Claude only). Start with Profound if you're enterprise and need deep source attribution and GEO recommendations with advanced analytics features.

How much do these platforms cost?

Budget tier: Peec starts at ~$99/month (free trial available)

Mid-tier: Otterly.AI starts at ~$500/month, Brand24 at ~$99/month

Premium tier: Profound starts at ~$1,000+/month (contact for quote)

DIY options: Gumloop and ZipTie.Dev cost $50-200/month in API fees but require technical setup

How often should I check my AI visibility?

Track Share of AI Voice monthly for the same 20-30 queries. Weekly tracking is overkill (LLM outputs are non-deterministic, so short-term fluctuations are mostly noise). Quarterly tracking is too slow to catch shifts before they impact pipeline. Set up real-time alerts for crisis management situations where immediate response is needed.

Can I improve my visibility without using a monitoring tool?

Yes, but you're flying blind. Manually query ChatGPT, Perplexity, and Gemini with your category keywords 10 times each (use AI keyword research to expand your list), log the results in a spreadsheet, and track monthly. This works for early validation but doesn't scale. Invest in a solution once you validate that visibility correlates with pipeline. Focus on building your online reputation through social media engagement, customer reviews, and authentic conversations across platforms to help improve your natural presence in LLM responses.

FAQs

What are AI brand monitoring tools?

AI brand monitoring tools measure how often (and in what context) LLMs and AI search experiences mention your company, products, and competitors. They typically run repeatable prompts across systems like ChatGPT, Perplexity, Gemini, and Google AI Overviews, then track visibility, positioning, and (sometimes) citations over time. This closes the gap left by traditional analytics in a zero-click world.

How do you track brand mentions in ChatGPT, Perplexity, and Gemini?

You track brand mentions by querying each model with a fixed set of category, use-case, and competitor prompts, running them multiple times to account for non-deterministic outputs, and logging whether your brand appears. The most reliable approach is longitudinal: re-run the same prompt set monthly and compare trends rather than treating a single run as "truth." Dedicated tools automate this and add competitor benchmarks and alerts.

What is Share of AI Voice and how do you calculate it?

Share of AI Voice is your brand's mentions divided by total category mentions across a defined prompt set and model set (e.g., "best CRM for startups" across ChatGPT + Perplexity + Gemini). If an LLM recommends five vendors and you're one of them, that's 20% for that prompt instance; aggregate across many prompts and reruns for a stable baseline. It's most useful when paired with positioning (rank order of mentions) and sentiment, not mentions alone.

Why do LLMs cite Reddit more than company websites?

LLMs often reward experience-driven, conversational sources that contain specific trade-offs, comparisons, and real user language (patterns common on Reddit and forums). In RAG-style answers, those threads can also match long-tail intent more precisely than polished product pages, so they get retrieved and echoed. The practical implication is you need both: strong first-party explainers and credible third-party discussion.

What's the difference between Google AI Overviews tracking and LLM chat tracking?

Google AI Overviews are SERP features that synthesize from search results, so they behave closer to SEO (indexing, rankings, and page-level eligibility). LLM chat tracking focuses on conversational outputs (e.g., ChatGPT/Perplexity/Gemini) that may blend training-data priors with retrieval and can vary more between runs and providers. Brands should monitor both because the sources, citation logic, and user intents differ.

Which metrics matter beyond "did we get mentioned"?

A practical AI perception stack is: visibility (are you present), context (which prompts trigger you), positioning (where you appear in lists), sentiment (how you're described), and source authority (what URLs or communities feed the answer). These metrics explain why Share of AI Voice moves and whether mentions help or hurt pipeline. "Citation quality" (who/what is cited when you're mentioned) often matters more than raw volume.

Which AI brand monitoring tool should I start with?

Start with Otterly.AI if you need multi-LLM coverage and competitor benchmarking across ChatGPT, Perplexity, Gemini, and AI Overviews. Choose Profound when you need deeper source attribution and an enterprise-grade GEO program with diagnostics for why competitors are cited. If you're budget-constrained and mainly want a baseline for conversational LLMs, Peec AI is a simpler entry point.

How often should you measure Share of AI Voice?

Monthly measurement is usually the best cadence because daily/weekly swings are often noise from model variability and shifting retrieval. Use the same 20-30 prompts, run each prompt multiple times per model, and compare month-over-month trends. Reserve real-time alerts for reputation or crisis scenarios.

Can you improve AI visibility without a dedicated monitoring platform?

Yes: build a prompt set (category, use case, alternatives, "vs" queries), query each LLM repeatedly, and track mentions and positioning in a spreadsheet. This works for early validation, but it becomes hard to maintain as prompt volume grows and you need consistent reruns, competitor baselines, and alerting. Separately, adding structured FAQs and clear definitions to key pages (as Metaflow recommends across its AEO content) can make your material easier for LLMs to extract and cite.