TL;DR:

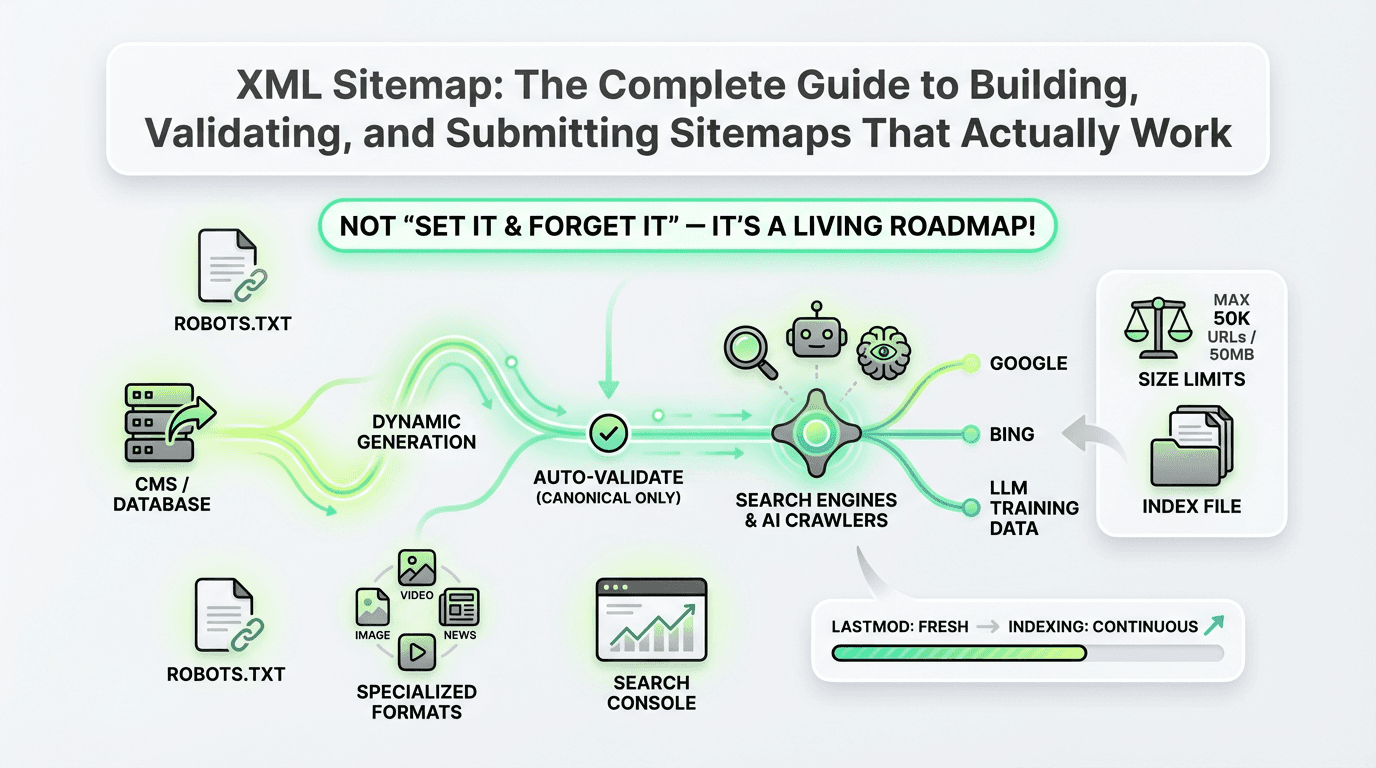

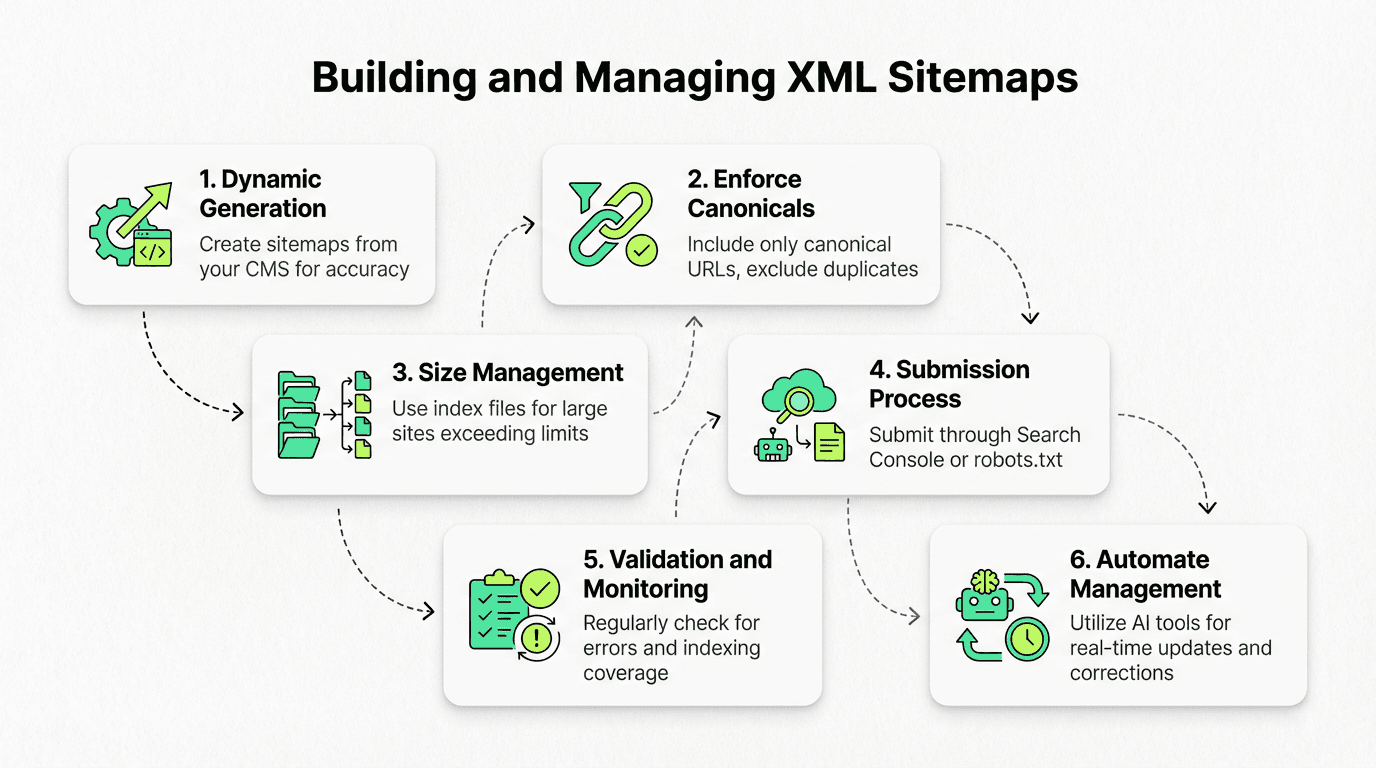

Sitemaps are structured files that help search engines discover, crawl, and index your most important URLs — submission is a hint, not a guarantee.

Build sitemaps dynamically from your CMS or database to enforce canonical-only URLs and accurate `lastmod` timestamps.

Respect size limits: Max 50,000 URLs or 50 MB per file; use an index file to segment large sites.

Submit via Google Search Console, Bing Webmaster Tools, or reference them in `robots.txt` for automatic discovery.

Validate regularly using a checker tool to catch 404s, redirects, and protocol violations.

AI and LLM crawlers increasingly rely on sitemaps to discover fresh, authoritative content — making accuracy more important than ever.

Automate management with tools like Metaflow AI to auto-generate, validate, and resubmit sitemaps whenever content changes — zero manual drift.

Avoid common mistakes: Don't include non-canonical URLs, ignore size limits, or let sitemaps go stale.

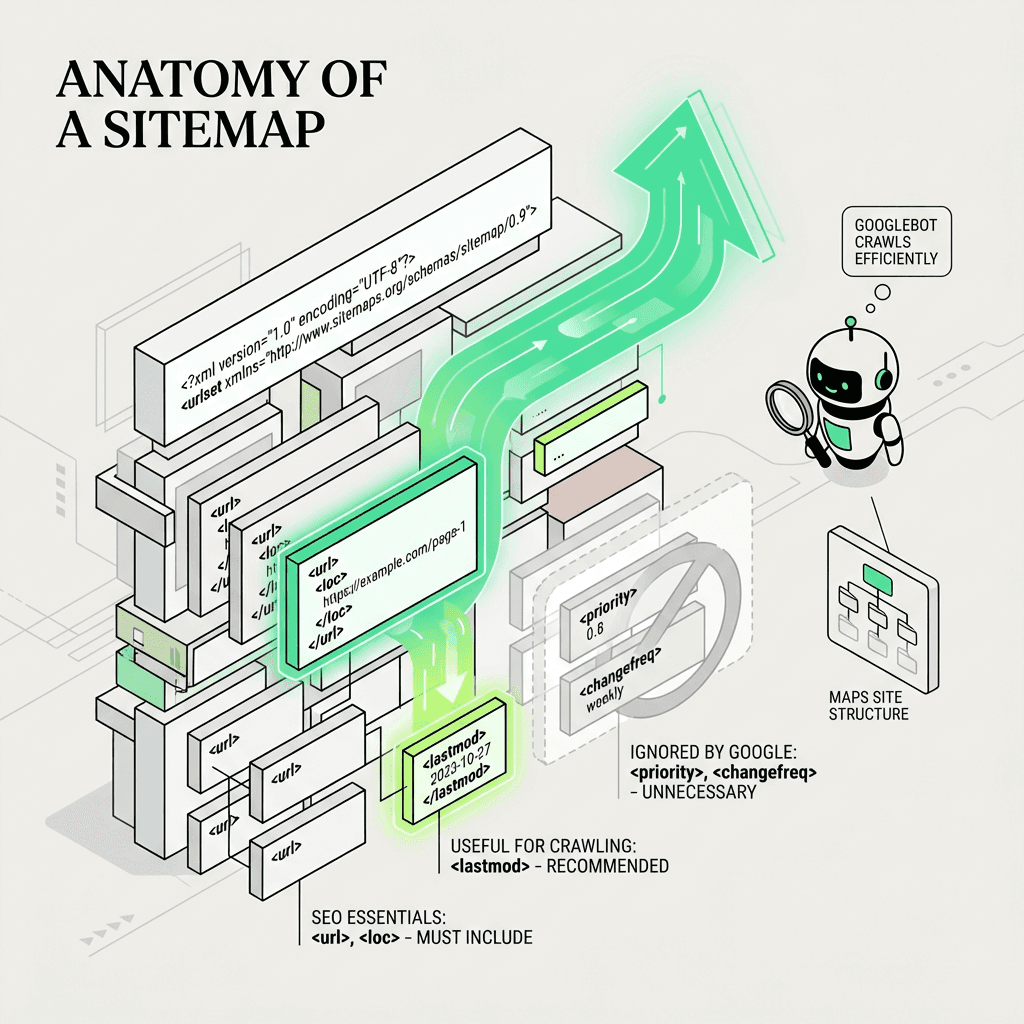

Focus on `<loc>` and `<lastmod>`; Google ignores `<changefreq>` and `<priority>`.

Monitor indexing coverage in Search Console to ensure your sitemap is driving real results.

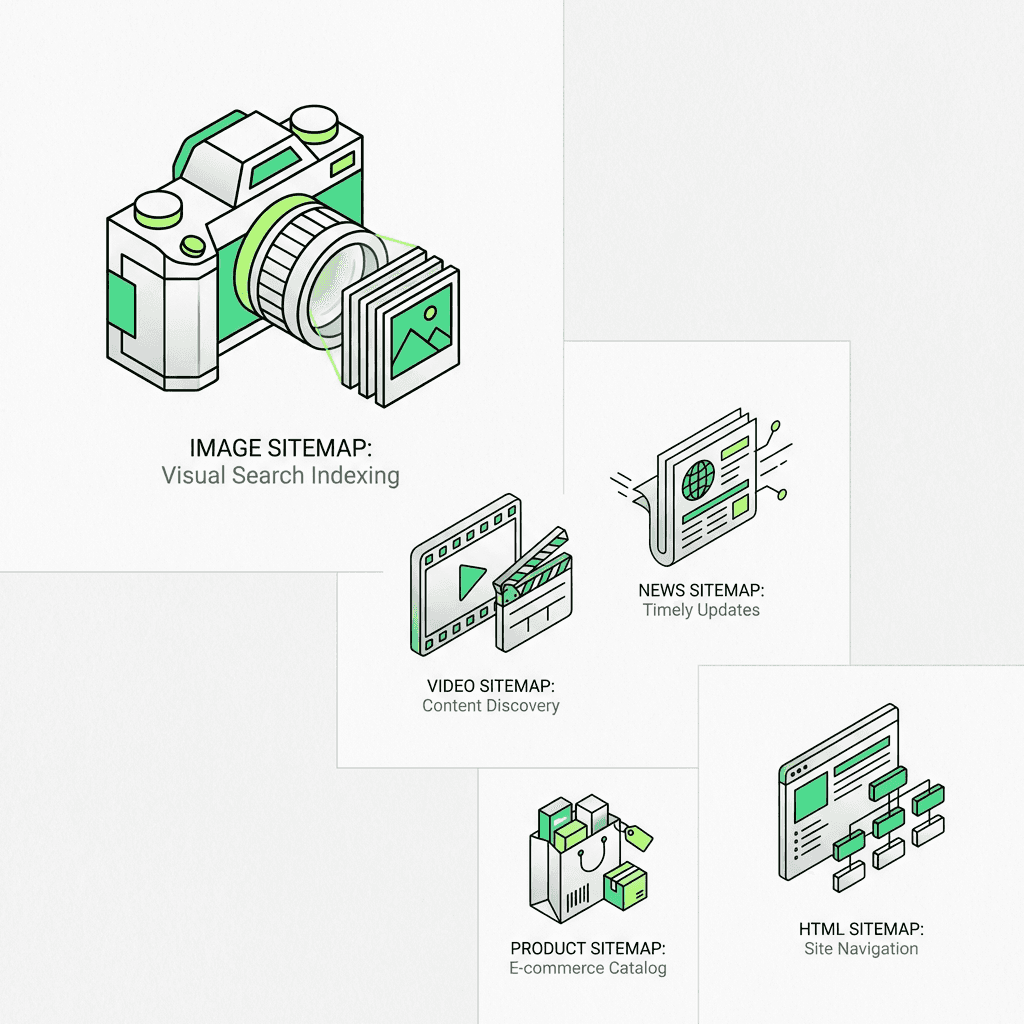

Use specialized formats like image sitemap, video sitemap, news sitemap, and HTML sitemap for rich content types.

If you've ever wondered whether search engines are finding all your important pages — or why some URLs never seem to get indexed — the answer often lies in your sitemap. This structured file acts as a roadmap for search crawlers, helping them discover, understand, and prioritize your content. But here's the catch: most teams treat sitemaps as a "set it and forget it" task, leading to bloated files, stale URLs, and missed indexing opportunities.

In this guide, you'll learn how to build accurate sitemaps, handle size limits, use index files for structure, understand the role of `lastmod` tags, and automate the entire process so your sitemap stays fresh as your content evolves. Whether you're managing a small blog or a sprawling e-commerce site, mastering XML sitemaps is one of the highest-leverage technical SEO investments you can make, especially when using an AI marketing automation platform for efficiency.

What Is an XML Sitemap and Why Does It Matter?

An XML sitemap is a structured file — typically named `sitemap.xml` — that lists all the URLs you want search engines to crawl and index. Think of it as your website's table of contents, written in a language that Googlebot, Bingbot, and other crawlers understand instantly.

But here's what many people get wrong: submitting a sitemap is a hint, not a guarantee. Google doesn't promise every URL you include will be indexed. Instead, your sitemap helps search engines:

Discover new or updated pages faster, especially on large or deeply nested sites

Understand your preferred canonical URLs when duplicate content exists

Prioritize crawl budget by surfacing your most important inventory

Interpret freshness signals through `lastmod` timestamps

In short, a well-maintained sitemap doesn't force indexing — it makes indexing easier and more accurate.

The Anatomy of a Sitemap Example

A minimal sitemap wraps one `<url>` block per page inside a root `<urlset>` element.

Each `<url>` block contains:

`<loc>`: The canonical URL (required)

`<lastmod>`: Last modification date in W3C datetime format (optional but recommended)

`<changefreq>` and `<priority>`: Officially supported but ignored by Google, so don't waste time on these
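The structure described above can be sketched with Python's standard library. This is a minimal illustration, not a full generator: the helper name `build_sitemap` and the `example.com` URLs are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemap protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Return sitemap XML as a string for (url, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc              # required
        if lastmod:                                       # optional but recommended
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Hypothetical URLs, for illustration only.
xml_text = build_sitemap([
    ("https://example.com/", "2026-01-15"),
    ("https://example.com/blog/xml-sitemaps", "2026-01-10T08:30:00+00:00"),
])
print(xml_text)
```

Note that `<changefreq>` and `<priority>` are left out on purpose, in line with the advice above.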

How to Generate a Sitemap (The Right Way)

Building a sitemap isn't just about listing every URL on your website. It's about curating a high-quality inventory that reflects your canonical architecture and strategic priorities.

Step 1: Generate from Your Database

The most reliable approach is to generate your sitemap dynamically from your CMS or database. This ensures:

Only published, live content is included

Canonical URLs are enforced (no duplicates, no parameters)

`lastmod` values are accurate and reflect real content changes

For example, if you're running WordPress, a plugin like Yoast SEO or RankMath can auto-generate sitemaps. For custom-built sites, write a script that queries your database and outputs the proper format.
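For a custom-built site, such a script might look roughly like the sketch below. It assumes a hypothetical `pages` table with `canonical_url`, `status`, and `updated_at` columns; your schema will differ, and an in-memory SQLite database stands in for the real one.

```python
import sqlite3
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def sitemap_from_db(conn):
    """Build sitemap XML from published rows only (hypothetical schema)."""
    rows = conn.execute(
        "SELECT canonical_url, updated_at FROM pages "
        "WHERE status = 'published' ORDER BY canonical_url"
    ).fetchall()
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, updated_at in rows:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = updated_at
    return ET.tostring(urlset, encoding="unicode")

# Demo data: one published page and one draft that must be skipped.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE pages (canonical_url TEXT, status TEXT, updated_at TEXT)")
conn.executemany("INSERT INTO pages VALUES (?, ?, ?)", [
    ("https://example.com/a", "published", "2026-01-05"),
    ("https://example.com/draft", "draft", "2026-01-06"),
])
xml_text = sitemap_from_db(conn)
```

Because the query filters on publication status, unpublished content never reaches the sitemap.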

Step 2: Enforce Canonical-Only URLs

Your sitemap should include only canonical URLs — the definitive version of each page. Exclude:

Duplicate content (e.g., paginated URLs, sort/filter parameters)

Redirected URLs (301s or 302s)

Noindexed pages

Low-value pages (thank-you pages, internal search results, archives)

This discipline keeps your sitemap lean and signals to crawlers which URLs deserve crawl budget.
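These exclusion rules can be expressed as a small filter. The sketch below assumes each page record already carries its status code, canonical URL, and noindex flag (for example from a crawl export); the `Page` class and its field names are hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit

@dataclass
class Page:
    url: str
    canonical_url: str
    status_code: int
    noindex: bool = False

def is_sitemap_worthy(page):
    """Keep only live, indexable, canonical URLs without query parameters."""
    if page.status_code != 200:          # drops redirects (301/302) and errors
        return False
    if page.noindex:                     # drops noindexed pages
        return False
    if page.url != page.canonical_url:   # drops non-canonical duplicates
        return False
    if urlsplit(page.url).query:         # drops sort/filter/tracking parameters
        return False
    return True

pages = [
    Page("https://example.com/shoes", "https://example.com/shoes", 200),
    Page("https://example.com/shoes?sort=price", "https://example.com/shoes", 200),
    Page("https://example.com/old-shoes", "https://example.com/old-shoes", 301),
    Page("https://example.com/thank-you", "https://example.com/thank-you", 200, noindex=True),
]
kept = [p.url for p in pages if is_sitemap_worthy(p)]
```

Treating every query string as non-canonical is a simplification; sites whose canonical URLs legitimately use parameters would need a narrower rule.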

Step 3: Use a Sitemap Generator for Speed

If you're managing a small website or need a quick solution, a sitemap generator can help. Popular options include:

Screaming Frog SEO Spider (crawls your website and exports a sitemap)

XML-sitemaps.com (online generator for sites under 500 pages)

Sitebulb (advanced crawler with export)

However, manual generators require re-running every time your content changes — which is why automation (more on this later) is the gold standard.

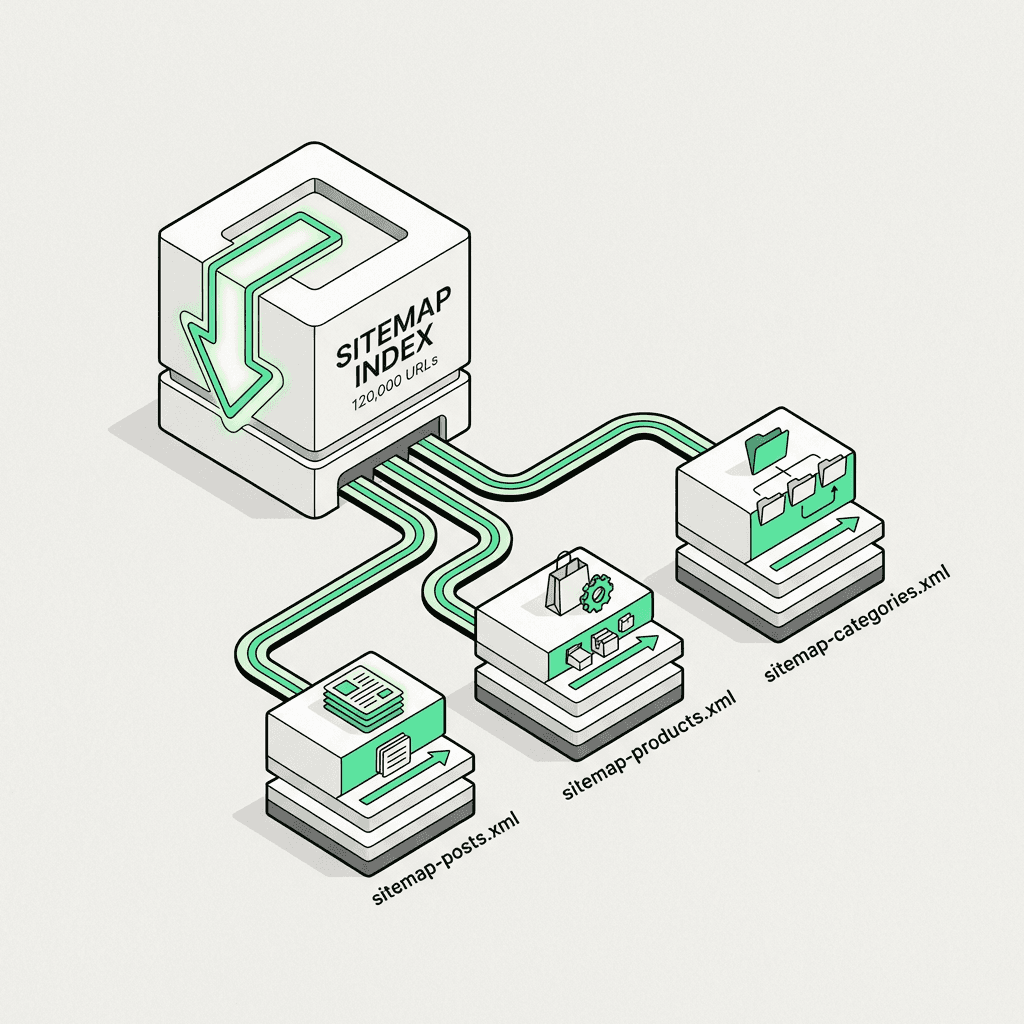

Understanding Size Limits and Sitemap Index Files

Google and Bing enforce strict size limits:

Maximum 50,000 URLs per file

Maximum 50 MB uncompressed

If your website exceeds these thresholds, you'll need a sitemap index file — a master sitemap that links to multiple child sitemaps.

How to Structure a Sitemap Index File

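A sitemap index file uses a `<sitemapindex>` root element with one `<sitemap>` entry per child file; each entry carries a `<loc>` and, optionally, a `<lastmod>`.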

Each child sitemap can contain up to 50,000 URLs. Common segmentation strategies:

By content type (blog posts, product pages, categories)

By date (monthly or yearly archives)

By language or region (for international sites)

This approach keeps your structure organized, speeds up crawling, and makes it easier to track indexing performance per segment.
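Splitting a large URL list and producing the index can be sketched as follows. The child file naming scheme (`/sitemaps/sitemap-N.xml`) is an assumption for illustration; any stable, crawlable location works.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file URL limit

def chunk(urls, size=MAX_URLS):
    """Yield consecutive slices of at most `size` URLs."""
    for start in range(0, len(urls), size):
        yield urls[start:start + size]

def build_index(child_locs):
    """Return sitemap index XML that points at each child sitemap."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in child_locs:
        entry = ET.SubElement(root, "sitemap")
        ET.SubElement(entry, "loc").text = loc
    return ET.tostring(root, encoding="unicode")

# 120,000 hypothetical URLs need three child sitemaps.
urls = [f"https://example.com/p/{i}" for i in range(120_000)]
chunks = list(chunk(urls))
child_locs = [
    f"https://example.com/sitemaps/sitemap-{n}.xml"
    for n in range(1, len(chunks) + 1)
]
index_xml = build_index(child_locs)
```

This sketch only enforces the URL count. A production version would also check the 50 MB uncompressed limit, since long URLs can hit it before the count does.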

The Importance of Lastmod (And How to Get It Right)

The lastmod tag tells crawlers when a page was last modified. When used correctly, it:

Helps crawlers prioritize fresh content

Reduces wasted crawl budget on unchanged pages

Signals authority and relevance (especially for news articles and time-sensitive content)

Common Lastmod Mistakes

Setting lastmod to the current date for every page — this trains crawlers to ignore your timestamps

Omitting lastmod entirely — you lose a valuable freshness signal

Using lastmod for trivial changes (e.g., sidebar updates) — only update when core content changes

Best practice: Pull `lastmod` directly from your CMS's `updated_at` or `modified_date` field, and only update it when the page's primary content is edited.
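As a rough sketch of that rule, the helpers below format a timestamp as a W3C datetime and advance `lastmod` only when the page body changes. The function names are hypothetical, and treating naive timestamps as UTC is an assumption you should adjust to your CMS.

```python
from datetime import datetime, timezone

def to_lastmod(updated_at):
    """Format a datetime as a W3C datetime string with a UTC offset."""
    if updated_at.tzinfo is None:
        # Assumption: naive timestamps from the CMS are stored in UTC.
        updated_at = updated_at.replace(tzinfo=timezone.utc)
    return updated_at.isoformat(timespec="seconds")

def next_lastmod(current_lastmod, old_body, new_body, edited_at):
    """Advance lastmod only when the primary content actually changed."""
    if old_body == new_body:
        return current_lastmod   # sidebar or template tweak: keep the old value
    return to_lastmod(edited_at)

stamp = to_lastmod(datetime(2026, 1, 15, 8, 30))
```

Comparing page bodies directly is the simplest possible change test; comparing stored content hashes would serve the same purpose at scale.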

How to Submit a Sitemap to Google and Bing

Once your sitemap is live, you need to submit it to search engines so they know where to find it.

Method 1: Submit via Search Console

Log in to Google Search Console

Navigate to Sitemaps in the left sidebar

Enter your sitemap location (e.g., `https://example.com/sitemap.xml`)

Click Submit

Repeat the process in Bing Webmaster Tools.

Method 2: Reference Sitemap in Robots.txt

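Add a `Sitemap:` line with the absolute URL of your sitemap to your `robots.txt` file, for example `Sitemap: https://example.com/sitemap.xml`.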

This allows any crawler to discover your sitemap automatically, without manual submission.
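To confirm that a `robots.txt` file really exposes the sitemap, you can parse it the way a crawler would. This sketch uses Python's standard `urllib.robotparser` (its `site_maps()` method needs Python 3.8 or later) on an inline sample file; the rules and URL are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content, for illustration only.
robots_txt = """\
User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# site_maps() returns a list of declared sitemap URLs, or None if there are none.
sitemaps = parser.site_maps()
print(sitemaps)
```

The `Sitemap:` directive is independent of any `User-agent` group, so it can sit anywhere in the file.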

Method 3: Programmatic Submission via API

For large or frequently updated sites, use the Google Search Console API to resubmit your sitemap automatically whenever content changes, and the Bing URL Submission API to push new or updated URLs directly. This is where automation tools and AI SEO tools shine.
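For the Google side, the sketch below only builds the endpoint for the Search Console API's sitemap submit call, which is an authenticated `PUT` request. It deliberately sends nothing: a real call needs OAuth 2.0 credentials for a verified property, and the helper name `submit_endpoint` is hypothetical.

```python
from urllib.parse import quote

API_ROOT = "https://www.googleapis.com/webmasters/v3"

def submit_endpoint(site_url, sitemap_url):
    """Build the URL for a sitemap submit request (sent as an authorized PUT)."""
    # Both values go into the path, so every reserved character is escaped.
    site = quote(site_url, safe="")
    feed = quote(sitemap_url, safe="")
    return f"{API_ROOT}/sites/{site}/sitemaps/{feed}"

endpoint = submit_endpoint(
    "https://example.com/",
    "https://example.com/sitemap.xml",
)
print(endpoint)
```

In practice you would trigger this from a publish hook or scheduled job, so resubmission happens only when the sitemap has actually changed.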

Validating Your Sitemap with a Checker Tool

Before you submit, always validate your sitemap to catch errors that could block indexing.

Top Sitemap Checker Tools

Google Search Console Report — shows indexing status and errors

Screaming Frog SEO Spider — validates structure and checks for broken URLs

Online Validator — checks syntax and compliance with the sitemap protocol

Bing Webmaster Tools Report — similar to Google's, with Bing-specific insights

Common errors to watch for:

URLs returning 404 or 500 status codes

Non-canonical URLs (duplicates, redirects)

Incorrect date format in `lastmod`

Exceeding the 50,000-URL or 50 MB size limits

Fix these issues before resubmitting to avoid wasting crawl budget.
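Several of these checks can run offline before you submit anything. The sketch below covers the size limits, duplicate or relative URLs, and the `lastmod` format; it does not fetch URLs, so 404s and redirects still need a crawler. The function name and sample data are illustrative.

```python
import re
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50 MB uncompressed

# W3C datetime: YYYY, YYYY-MM, YYYY-MM-DD, or a full timestamp with a zone.
W3C_DATETIME = re.compile(
    r"^\d{4}(-\d{2}(-\d{2}"
    r"(T\d{2}:\d{2}(:\d{2}(\.\d+)?)?(Z|[+-]\d{2}:\d{2}))?)?)?$"
)

def validate_sitemap(xml_bytes):
    """Return a list of human-readable problems found in a sitemap file."""
    problems = []
    if len(xml_bytes) > MAX_BYTES:
        problems.append("file exceeds 50 MB uncompressed")
    entries = ET.fromstring(xml_bytes).findall("sm:url", NS)
    if len(entries) > MAX_URLS:
        problems.append("file exceeds 50,000 URLs")
    seen = set()
    for entry in entries:
        loc = (entry.findtext("sm:loc", default="", namespaces=NS) or "").strip()
        if not loc.startswith(("http://", "https://")):
            problems.append(f"not an absolute URL: {loc!r}")
        if loc in seen:
            problems.append(f"duplicate URL: {loc}")
        seen.add(loc)
        lastmod = entry.findtext("sm:lastmod", default=None, namespaces=NS)
        if lastmod is not None and not W3C_DATETIME.match(lastmod.strip()):
            problems.append(f"invalid lastmod: {lastmod}")
    return problems

sample = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc><lastmod>2026-01-15</lastmod></url>
  <url><loc>https://example.com/</loc><lastmod>15/01/2026</lastmod></url>
  <url><loc>/relative/path</loc></url>
</urlset>"""
problems = validate_sitemap(sample)
```

The regular expression checks the shape of the date only, not whether the month or day values are real calendar dates.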

Advanced Strategies for Large Sites

If you're managing thousands or millions of pages, standard practices won't scale. Here's how to level up.

1. Dynamic Generation

Instead of static files, serve sitemaps dynamically from your application or CMS. This ensures:

Real-time accuracy (no manual regeneration)

Automatic enforcement of canonical rules

Instant updates when content is published or deleted

2. Segment by Priority and Freshness

Not all pages deserve equal crawl attention. Create separate sitemaps for:

High-priority pages (product landing pages, pillar content)

Frequently updated pages (blog posts, news articles)

Archived or low-priority pages (old blog posts, seasonal content)

This segmentation helps search engines allocate crawl budget more effectively. Using a no-code AI workflow builder can make it easier to automate and manage these processes without technical debt.
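One simple way to segment by freshness is to bucket URLs by how recently they changed. In this sketch the 30-day cutoff and the `fresh` and `archive` segment names are arbitrary assumptions; each bucket would then be written to its own child sitemap.

```python
from datetime import date, timedelta

def segment_by_freshness(pages, today, fresh_days=30):
    """Split (url, lastmod_date) pairs into 'fresh' and 'archive' buckets."""
    cutoff = today - timedelta(days=fresh_days)
    segments = {"fresh": [], "archive": []}
    for url, lastmod in pages:
        key = "fresh" if lastmod >= cutoff else "archive"
        segments[key].append(url)
    return segments

# Hypothetical pages with their last modification dates.
pages = [
    ("https://example.com/blog/new-post", date(2026, 1, 10)),
    ("https://example.com/blog/old-post", date(2024, 6, 1)),
]
segments = segment_by_freshness(pages, today=date(2026, 1, 15))
```

Passing `today` in explicitly keeps the function deterministic, which makes it easier to test.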

3. Monitor Indexing Coverage

Use Google Search Console's Page indexing report (formerly the Coverage report) to track:

How many URLs from your sitemap are indexed

Which URLs are excluded (and why)

Crawl errors or validation issues

If large portions of your sitemap aren't getting indexed, it's a signal to audit your content quality, canonical strategy, or crawl budget allocation.
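If you export the list of indexed URLs, a quick comparison against the sitemap shows where the gaps are. The sketch assumes you already have both lists in memory; how you export them is up to your tooling.

```python
def indexing_coverage(sitemap_urls, indexed_urls):
    """Return (share of sitemap URLs indexed, sorted list of missing URLs)."""
    sitemap_set = set(sitemap_urls)
    indexed = sitemap_set & set(indexed_urls)
    missing = sorted(sitemap_set - indexed)
    ratio = len(indexed) / len(sitemap_set) if sitemap_set else 0.0
    return ratio, missing

# Hypothetical data: four sitemap URLs, three of them indexed.
sitemap_urls = [
    "https://example.com/",
    "https://example.com/a",
    "https://example.com/b",
    "https://example.com/c",
]
indexed_urls = [
    "https://example.com/",
    "https://example.com/a",
    "https://example.com/b",
]
ratio, missing = indexing_coverage(sitemap_urls, indexed_urls)
```

Tracking this ratio per child sitemap makes it easier to see which segment is dragging coverage down.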

How AI Is Changing Sitemap Management

As AI-powered search engines and large language models (LLMs) increasingly rely on indexed content for training and retrieval, sitemaps are becoming a critical signal for both Googlebot and AI crawlers. Here's why:

AI systems prioritize fresh, authoritative content — accurate `lastmod` values help them identify the most current information

LLM training crawlers (like those used by ChatGPT and Perplexity) often respect the sitemap protocol to discover high-quality content

Semantic search and entity-based indexing benefit from clean, canonical URL structures that sitemaps enforce

In other words, a well-maintained sitemap doesn't just help traditional web search; it also positions your content for the next generation of AI-driven discovery. Leveraging AI tools for content marketing can further enhance your approach to technical SEO.

The Role of SEO Automation Tools and AI SEO Tools

Manual sitemap management doesn't scale in 2026. Modern SEO automation tools and AI SEO tools can:

Auto-generate sitemaps from your CMS or database

Validate URLs in real time (checking for 404s, redirects, and canonicalization issues)

Trigger resubmission via API when content changes

Monitor indexing status and alert you to coverage drops

This is where platforms like Metaflow AI become invaluable.

Automate Sitemap Management with Metaflow AI

Imagine this: every time you publish a new blog post, update a product listing, or archive old content, your sitemap automatically regenerates, validates itself, and resubmits to Google and Bing — without you lifting a finger.

That's the power of Metaflow AI, an advanced AI automation platform and no-code agent builder designed for growth teams who need to move fast without sacrificing precision.

How Metaflow Automates Sitemap Workflows

With Metaflow, you can design a natural language agent that:

Pulls fresh content from your CMS database (WordPress, Contentful, Shopify, etc.)

Enforces canonical-only URL rules (excludes duplicates, redirects, and noindexed pages)

Validates against size limits (automatically splits into child sitemaps and generates an index file if needed)

Outputs clean, compliant format (with accurate `lastmod` timestamps)

Triggers resubmission via API whenever content changes

All of this happens in a unified AI marketing workspace, with no fragmented connectors, scattered prompts, or manual oversight.

Why Metaflow Stands Out Among AI Growth Marketing Tools

Unlike traditional automation stacks that require stitching together Zapier workflows, custom scripts, and brittle integrations, Metaflow brings ideation and execution into one platform. You can:

Design workflows in natural language (no coding required)

Experiment with logic and rules before deploying

Codify insights into durable, scalable agents that run autonomously

For growth teams managing complex content operations, Metaflow reclaims cognitive bandwidth so you can focus on strategy, not maintenance.

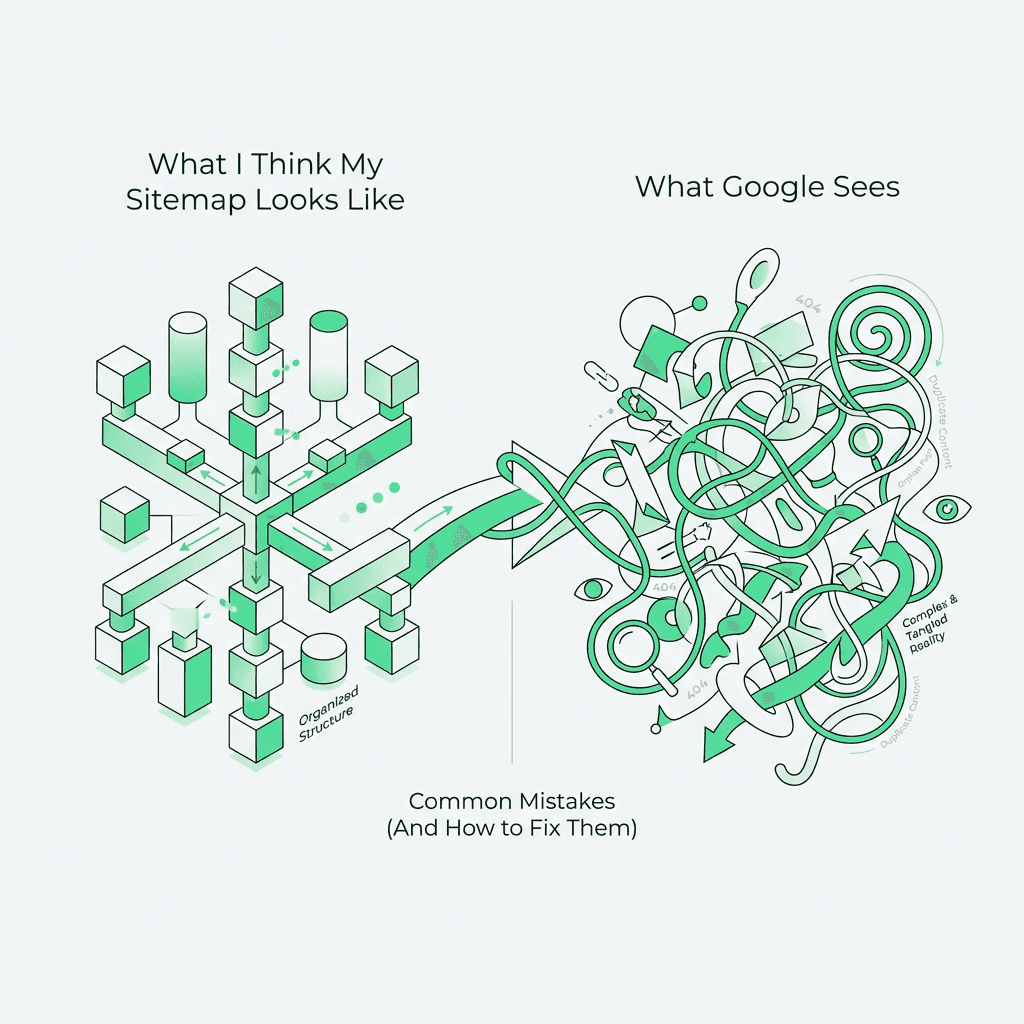

Common Mistakes (And How to Fix Them)

Even experienced SEOs make errors. Here are the most common pitfalls:

1. Including Non-Canonical URLs

Problem: Duplicate URLs (e.g., `?utm_source=email` or paginated pages) clutter your sitemap and confuse crawlers.

Fix: Only include the canonical version of each page. Use `rel="canonical"` tags to consolidate duplicate signals.
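Tracking parameters are the easiest duplicates to remove automatically. The sketch below strips `utm_` parameters and fragments before a URL is considered for the sitemap; the prefix list is an assumption, so extend it to match the parameters your campaigns actually use.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: only utm_* parameters count as tracking noise here.
TRACKING_PREFIXES = ("utm_",)

def strip_tracking(url):
    """Remove tracking parameters and the fragment from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(parts._replace(query=urlencode(kept), fragment=""))

clean = strip_tracking("https://example.com/pricing?utm_source=email")
print(clean)
```

This cleans the URL string only. Whether the remaining URL is the canonical one still depends on the page's `rel="canonical"` tag.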

2. Ignoring Size Limits

Problem: Submitting a sitemap with 100,000 URLs or a 75 MB file violates protocol limits and may cause indexing issues.

Fix: Split into multiple sitemaps and use an index file.

3. Letting Sitemaps Go Stale

Problem: Your sitemap includes deleted pages, outdated URLs, or missing new content.

Fix: Automate generation so it stays in sync with your CMS.

4. Misusing `<changefreq>` and `<priority>`

Problem: Spending time setting these tags when Google ignores them.

Fix: Focus on `<loc>` and `<lastmod>` only.

5. Forgetting to Resubmit After Major Changes

Problem: You launch a new section of your website, but Google doesn't discover it for weeks.

Fix: Resubmit via Search Console or use API-based automation.

Best Practices Checklist

To wrap up, here's a tactical checklist for building and maintaining a high-performance sitemap:

✅ Generate sitemaps dynamically from your database (not static files)

✅ Include only canonical URLs (no duplicates, redirects, or noindexed pages)

✅ Use accurate `lastmod` timestamps (pulled from your CMS)

✅ Respect size limits (50k URLs, 50 MB per file)

✅ Use index files for large sites

✅ Reference sitemap in robots.txt for automatic discovery

✅ Submit to Google and Bing via Search Console or webmaster tools

✅ Validate with a checker before submission

✅ Monitor indexing coverage in Search Console

✅ Automate regeneration and resubmission when content changes

Follow these principles, and your sitemap will become a reliable asset in your technical SEO toolkit.

The Future of Sitemaps in an AI-First Landscape

As web search evolves beyond traditional blue links — with AI overviews, conversational agents, and entity-based retrieval taking center stage — the role of sitemaps is shifting from "nice to have" to "mission-critical."

Why? Because AI systems need structured signals to understand your content architecture. A clean sitemap helps LLMs and semantic search engines:

Identify authoritative, canonical sources

Prioritize fresh, high-quality content

Map relationships between pages and topics

In this context, maintaining an accurate, up-to-date sitemap isn't just about pleasing Googlebot — it's about ensuring your content is discoverable, citable, and retrievable in the age of AI-powered online search.

And with tools like Metaflow AI, you can automate the entire process — from generation to validation to submission — so your sitemap stays accurate as your content evolves, without adding manual overhead.

Specialized Sitemap Types for Rich Content

Beyond standard web page listings, specialized sitemap types help search engines understand specific content formats:

Image Sitemap

An image sitemap helps Google discover images embedded in your content, especially those loaded via JavaScript or housed in a directory. Include image-specific tags such as `<image:image>` and `<image:loc>` to improve visibility in Google Images.
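Here is a minimal sketch of an image sitemap built with Python's standard library, using Google's image sitemap namespace. The helper name and the URLs are placeholders, and only the page URL and image locations are emitted.

```python
import xml.etree.ElementTree as ET

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMG = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize the sitemap namespace as the default and images as "image:".
ET.register_namespace("", SM)
ET.register_namespace("image", IMG)

def image_sitemap(pages):
    """Build sitemap XML from a {page_url: [image_url, ...]} mapping."""
    urlset = ET.Element(f"{{{SM}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SM}}}url")
        ET.SubElement(url, f"{{{SM}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMG}}}image")
            ET.SubElement(image, f"{{{IMG}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")

xml_text = image_sitemap({
    "https://example.com/gallery": [
        "https://example.com/img/a.png",
        "https://example.com/img/b.png",
    ],
})
print(xml_text)
```

Image entries sit inside the regular `<url>` block for the page that embeds them, so they can live in your existing sitemap rather than a separate file.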

Video Sitemap

A video sitemap provides metadata about video content on your website — including title, description, duration, thumbnail location, and schema markup. This helps videos appear in video search results and rich snippets.

News Sitemap

For publishers and news sites, a news sitemap follows a specialized protocol that includes the publication name and language, the publication date, and the article title. This ensures timely indexing in Google News and helps with discovery of time-sensitive content.

HTML Sitemap

An HTML sitemap is a human-readable web page that lists important links on your website, often organized by category or page type. While not a replacement for an XML version, it improves user experience and provides internal link structure benefits.

Ecommerce Product Sitemaps

For ecommerce sites, creating a dedicated product sitemap helps search engines discover and index product pages efficiently. Include only in-stock, canonical product URLs and segment by category or product type for better organization.

FAQs

What is an XML sitemap?

An XML sitemap is a machine-readable file (usually sitemap.xml) that lists the canonical URLs you want search engines to discover and crawl. It helps Googlebot and Bingbot find important pages, especially on large sites or sites with deep internal architecture. Submitting a sitemap is a discovery hint—not an indexing guarantee.

Does submitting an XML sitemap guarantee Google will index my pages?

No. An XML sitemap can speed up discovery and recrawling, but Google still decides what to index based on quality, canonicalization, duplication, and crawlability. Use Google Search Console’s indexing and coverage reports to see whether sitemap URLs are actually being indexed.

What URLs should be included in an XML sitemap (and what should be excluded)?

Include only canonical, indexable URLs that return a 200 status and are intended to appear in search results. Exclude redirects (301/302), 404/5xx URLs, noindex pages, parameterized duplicates (like UTM URLs), and low-value utility pages (e.g., thank-you pages). This keeps your XML sitemap aligned with crawl budget and canonical signals.

What are the XML sitemap size limits, and when do you need a sitemap index file?

A single sitemap file is limited to 50,000 URLs or 50 MB uncompressed. If you exceed either limit, split URLs into multiple sitemap files and reference them from a sitemap index file, which acts as a directory of child sitemaps. Segmenting by content type (posts/products) or freshness often makes troubleshooting easier in Search Console.

How important is the `<lastmod>` tag in an XML sitemap?

`<lastmod>` is one of the most useful sitemap fields because it signals when the main content of a page actually changed, helping crawlers prioritize recrawls. It should reflect meaningful edits, not template or sidebar changes, and it should not be set to “today” for every URL (that pattern can cause search engines to discount it). Use W3C datetime or ISO-style dates pulled from your CMS/database updated_at field.

Do `<changefreq>` and `<priority>` matter for Google sitemaps?

For Google, they’re effectively ignored in practice, unlike `<loc>` and `<lastmod>`. They won’t hurt if used correctly, but they rarely justify the effort or complexity. Focus on canonical URLs, valid responses, and accurate modification times.

How do you submit a sitemap to Google Search Console and Bing Webmaster Tools?

In Google Search Console, open the Sitemaps report for your verified property and submit your sitemap URL (for example, /sitemap.xml) so Google can fetch it. Do the same in Bing Webmaster Tools to help Bing discover and monitor sitemap processing. You can also reference the sitemap in robots.txt for automatic crawler discovery.

How often should you validate a sitemap, and what errors should you look for?

Validate any time you ship major site changes and on a recurring schedule (weekly/monthly for active sites). Common sitemap errors include 404s/500s, redirected URLs, non-canonical duplicates, invalid lastmod formats, and exceeding size limits. A crawler/validator (plus Search Console’s sitemap report) will surface protocol and fetch issues quickly.

Why do AI and LLM crawlers care about sitemaps in 2026?

Many AI-driven retrieval systems and web crawlers use sitemaps as an efficient discovery layer for fresh, authoritative URLs, especially when internal linking is complex or content changes frequently. Clean canonical URLs and trustworthy `lastmod` values improve the odds your newest pages are found and reprocessed. An accurate XML sitemap increasingly supports both traditional indexing and AI-first discovery.

How can Metaflow AI help automate XML sitemap management?

You can automate sitemap generation from your CMS/database, enforce canonical-only rules, validate URL status/canonicalization, and resubmit sitemaps when content changes—reducing drift and stale URLs over time. Metaflow AI can sit on top of those workflows so the sitemap stays accurate without manual rebuilds, after you’ve defined the core rules and triggers.