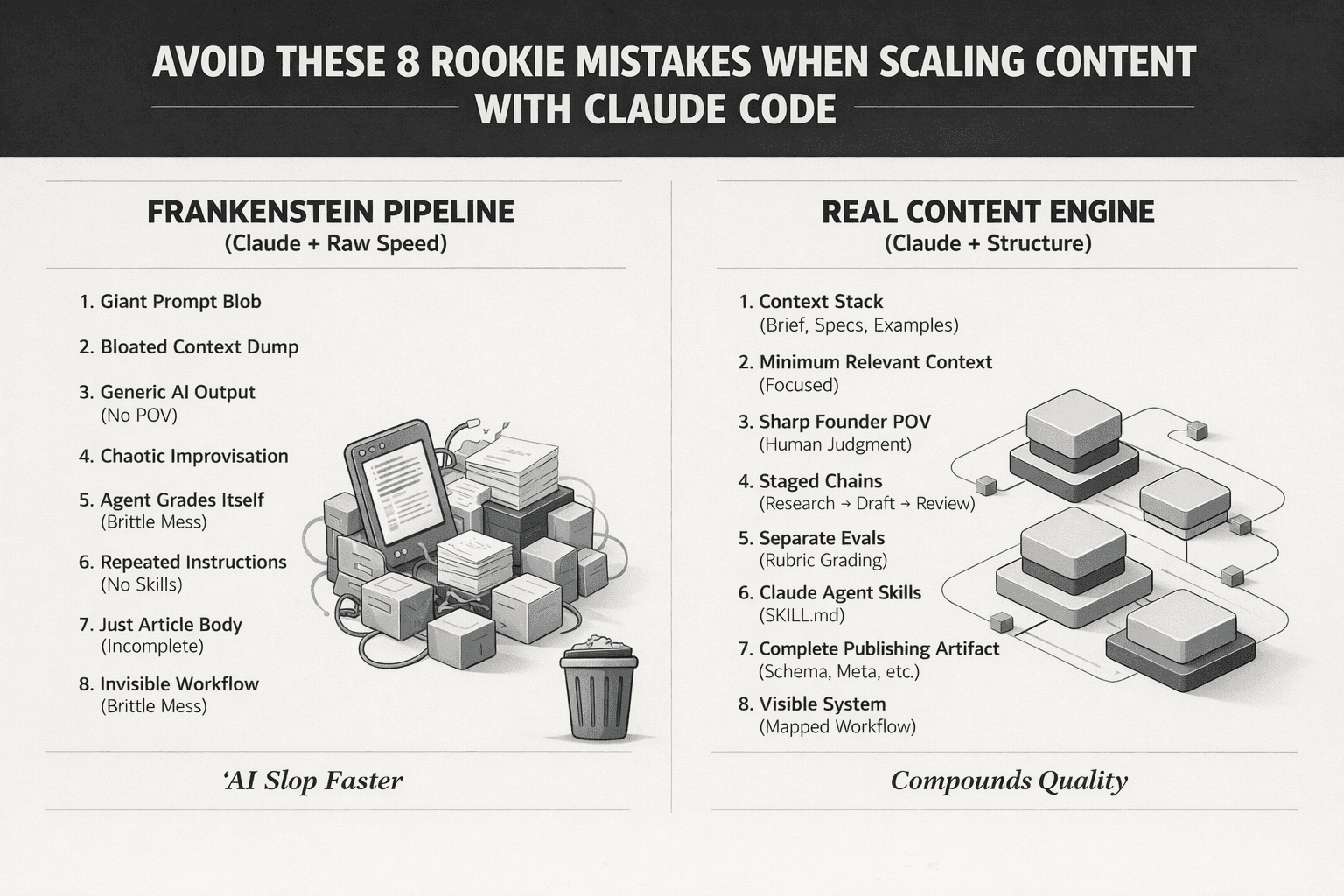

A lot of founders are using Claude Code to move faster on content. That part makes sense.

The mistake is assuming that Claude Code plus raw speed equals a real content engine. It doesn't.

Without judgment, structure, and clean workflows, Claude Code won't scale quality, even for AI marketing agents. It will just help you produce AI slop faster.

I've made these mistakes myself. If you're building with Claude Code, Claude agent skills, and SKILL.md, avoid these 8 rookie errors:

1. Treating prompts as the system

With Claude Code, the prompt is not the whole game. The real game is the context stack you give the agent. Anthropic explicitly frames this as context engineering, not just prompt wording.

If your Claude agent does not have:

Positioning

Audience

Offer

Examples

Source material

SEO targets

Brand voice constraints

…it will fill the gaps with generic filler. A clever prompt cannot rescue weak inputs.

✅ What to do instead: Design the full context deliberately — brief, source docs, examples, output spec, review rubric. That is how Claude Code stops sounding generic.
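One way to make the context stack deliberate is to treat it as a checklist the workflow refuses to skip. Here is a minimal sketch in Python; `ContentContext` and `missing_inputs` are hypothetical names I made up for illustration, not part of Claude Code or any Anthropic API.

```python
from dataclasses import dataclass, fields


@dataclass
class ContentContext:
    """Hypothetical container for the context stack listed above."""
    positioning: str = ""
    audience: str = ""
    offer: str = ""
    examples: str = ""
    source_material: str = ""
    seo_targets: str = ""
    brand_voice: str = ""


def missing_inputs(ctx: ContentContext) -> list[str]:
    """Return the names of context fields that are empty or whitespace-only."""
    return [f.name for f in fields(ctx) if not getattr(ctx, f.name).strip()]


# Example: only two of the seven inputs are filled in.
ctx = ContentContext(positioning="Ops tooling for small teams", audience="Seed-stage founders")
gaps = missing_inputs(ctx)
if gaps:
    print("Do not draft yet. Missing context:", ", ".join(gaps))
```

The point is not the dataclass. It is that a missing input gets surfaced before the agent writes, instead of being papered over with filler.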

2. Dumping too much context into the agent

With Claude Code, more context is not automatically better. Anthropic's guidance is that context is a finite resource, and bloated context can reduce focus and recall.

A lot of people throw everything into the window:

Old notes

Random strategy docs

Conflicting positioning

Stale examples

Half-written landing pages

Now the Claude agent has plenty of tokens, but no clarity. That is how you get averaged, mushy output.

✅ What to do instead: Give Claude only the minimum relevant context for the current stage — research context for research, brief for drafting, eval rubric for review, metadata/schema requirements for publishing.
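Stage-scoped context can be sketched as a simple allowlist per stage. The stage names and document keys below are assumptions based on the stages mentioned above; adapt them to whatever your workflow actually uses.

```python
# Hypothetical allowlist: each stage sees only the documents named here.
STAGE_CONTEXT = {
    "research": ["research_notes"],
    "draft": ["brief"],
    "review": ["eval_rubric"],
    "publish": ["metadata_requirements", "schema_requirements"],
}


def context_for(stage: str, library: dict[str, str]) -> dict[str, str]:
    """Select only the documents relevant to one stage; fail loudly if one is missing."""
    wanted = STAGE_CONTEXT[stage]
    missing = [key for key in wanted if key not in library]
    if missing:
        raise KeyError(f"{stage} stage is missing context: {missing}")
    return {key: library[key] for key in wanted}


library = {
    "research_notes": "Interview notes and sources",
    "brief": "One-page content brief",
    "eval_rubric": "Review checklist",
    "old_notes": "Stale strategy doc from last year",
}
print(context_for("draft", library))  # the stale doc never reaches the drafting step
```

Everything not on the allowlist stays out of the window, which is the whole idea.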

3. Not encoding a sharp POV before the agent writes

With Claude Code, the agent can structure beautifully. But it cannot invent a credible founder POV out of thin air.

This is where most AI-assisted content dies:

No conviction

No lived experience

No strong angle

No tension

No "we believe X because we've seen Y"

The result looks polished, but says nothing.

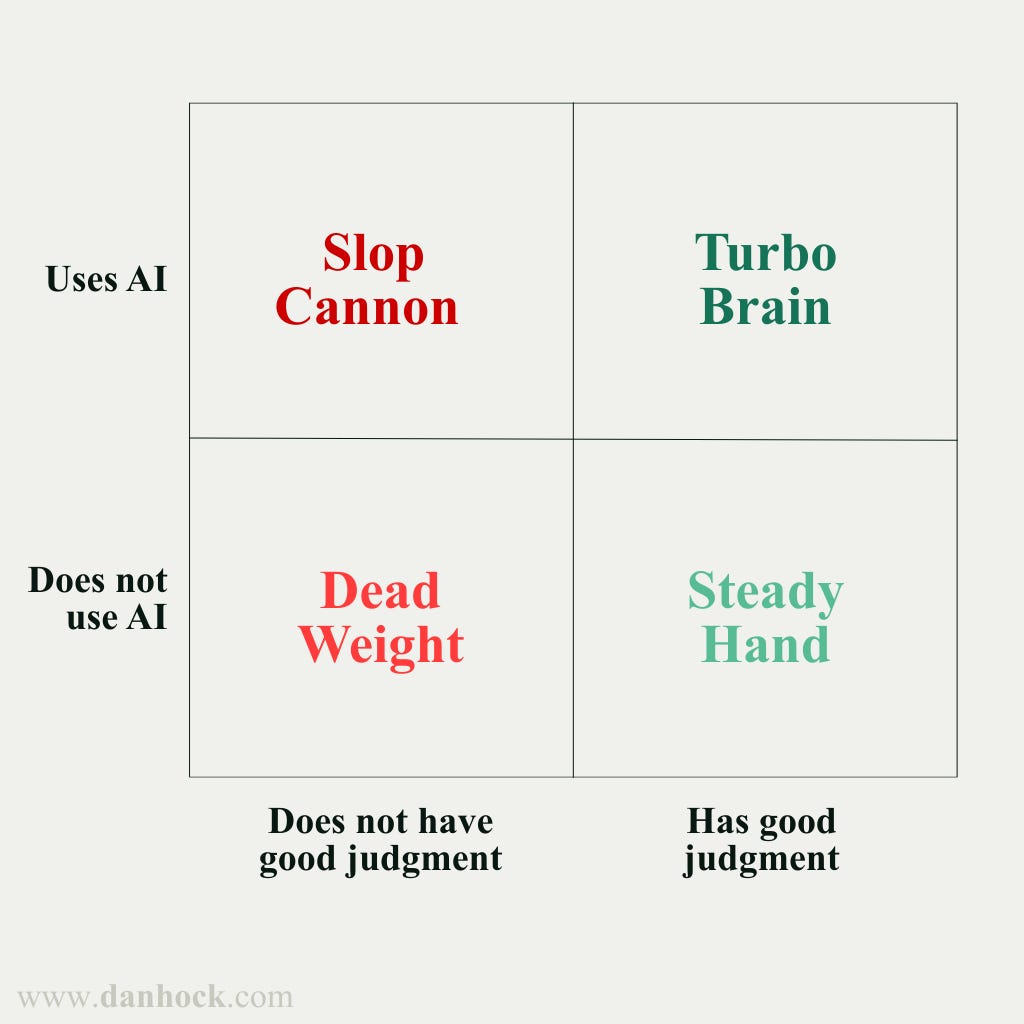

Dan Hockenmaier's broader point is the right lens here: as AI makes output cheaper, taste and judgment become more valuable, not less.

✅ What to do instead: Before Claude writes, define your stance, what you disagree with, what you've learned the hard way, and what a generic AI answer would miss. Your POV is the part the agent should amplify, not replace. If typing is slow, try Wispr Flow or a similar voice-to-text app to record your POV.

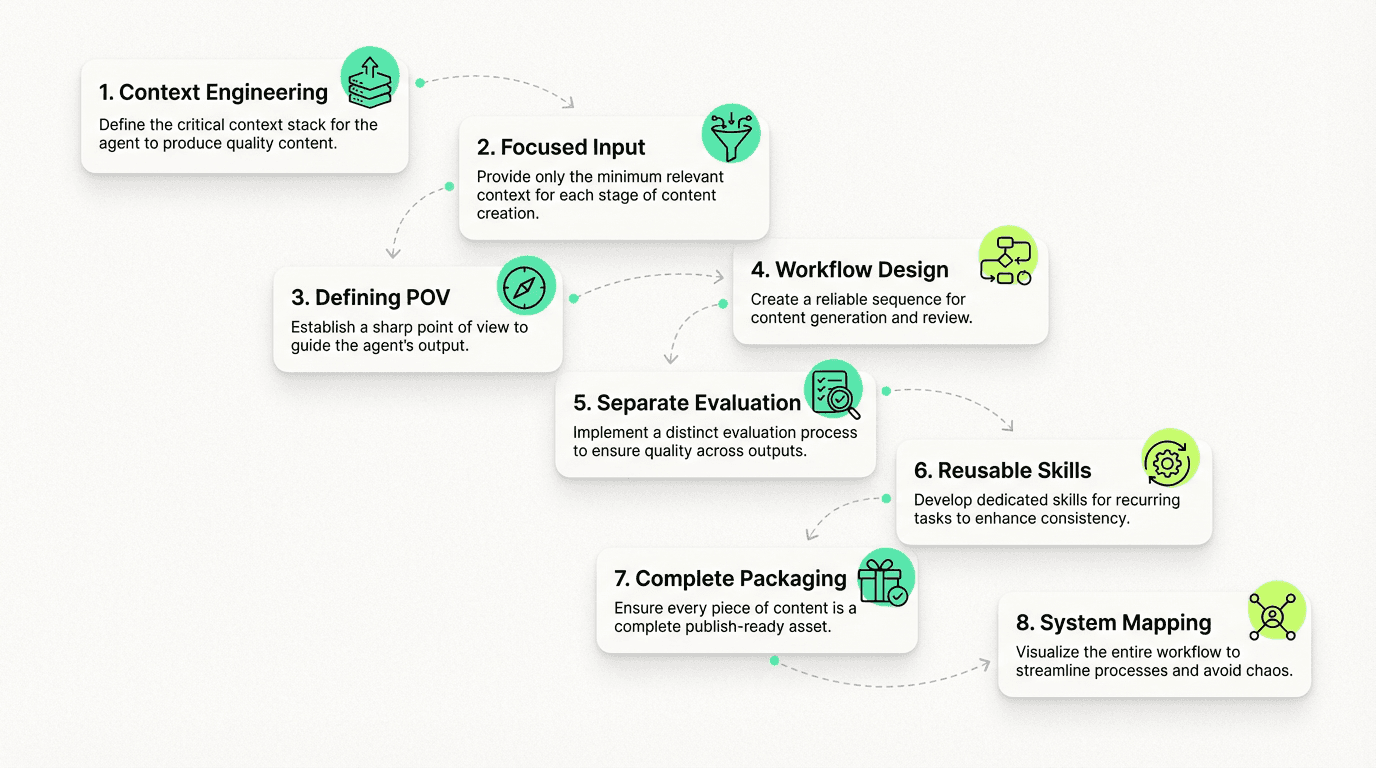

4. Using one giant prompt instead of a real workflow

With Claude Code, one-shot prompting is fine for a demo. It is not how you build a reliable production workflow. Anthropic's prompt guidance favors staged chains: generate, review, refine.

If you ask one agent call to do research, outline, draft, optimize, add SEO, and package for CMS — you usually get something that looks complete but is structurally fragile.

✅ What to do instead: Build a clean sequence: Research → Outline → Draft → Evaluate → Revise → Package → Publish. That is the difference between a workflow and a prompt blob.
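As a rough sketch, the sequence can be modeled as an ordered list of small stage functions that each read the accumulated state and return their own output. The stage bodies here are stubs standing in for real agent calls; `run_pipeline` and the stage names are hypothetical.

```python
from typing import Callable

Stage = Callable[[dict], dict]


def run_pipeline(stages: list[tuple[str, Stage]], state: dict) -> dict:
    """Run stages in order; each receives the accumulated state and returns updates."""
    for name, stage in stages:
        updates = stage(state)
        completed = state.get("completed", []) + [name]
        state = {**state, **updates, "completed": completed}
    return state


# Stub stages: in a real workflow each one would be its own agent step.
def research(state: dict) -> dict:
    return {"notes": f"notes on {state['topic']}"}


def outline(state: dict) -> dict:
    return {"outline": ["intro", "body", "close"]}


def draft(state: dict) -> dict:
    return {"draft": " / ".join(state["outline"])}


STAGES = [("research", research), ("outline", outline), ("draft", draft)]
result = run_pipeline(STAGES, {"topic": "pricing"})
print(result["completed"])
```

Because each stage has a name and a visible output, you can inspect or rerun one step without regenerating the whole article.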

5. Skipping content evals and letting the same agent grade itself

With Claude Code, this is one of the biggest rookie mistakes.

If the same Claude agent writes the draft and then casually says "looks good," that is not QA. That is autocomplete with self-esteem.

Anthropic's engineering guidance on agent evals is clear: multi-step systems need explicit grading logic because errors compound.

What every content workflow needs to evaluate:

Factual accuracy

Unsupported claims

Brand alignment

Positioning consistency

SEO completeness

Readability

Publish readiness

✅ What to do instead: Separate generation from evaluation. One Claude Code step writes. Another step — or another Claude agent skill — checks it against a rubric. That one change alone can save you from industrialized slop.
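Here is a minimal sketch of an evaluation step that lives outside the writer. The checks are deliberately simple, deterministic placeholders; criteria like factual accuracy or brand alignment would need a separate model call or a human reviewer. All names, the CTA phrase, and the word threshold are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Check:
    name: str
    passed: Callable[[str], bool]


# Hypothetical rubric: swap in your own CTA phrase, thresholds, and checks.
RUBRIC = [
    Check("has_cta", lambda d: "book a demo" in d.lower()),
    Check("no_placeholder_text", lambda d: "lorem ipsum" not in d.lower() and "[todo" not in d.lower()),
    Check("min_length", lambda d: len(d.split()) >= 300),
]


def evaluate(draft: str, rubric: list[Check]) -> dict[str, bool]:
    """Grade a draft against every check; the writer never grades itself."""
    return {check.name: check.passed(draft) for check in rubric}


def publish_ready(results: dict[str, bool]) -> bool:
    """A draft is publish-ready only if every rubric check passed."""
    return all(results.values())


results = evaluate("[TODO] lorem ipsum", RUBRIC)
print(results, publish_ready(results))
```

The useful property is that a failed check has a name, so the revise step knows exactly what to fix.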

6. Not turning recurring standards into Claude agent skills

With Claude Code, if you keep repeating the same instructions in chat, you do not have a system. You have fragile operator memory.

Anthropic's Skills docs are useful here: Skills are reusable task modules that are concise, structured, and built to be discoverable. They are centered on a SKILL.md file, with optional supporting files and scripts.

✅ What to do instead: Create dedicated skills — content brief → outline, brand voice checker, SEO completeness checker, internal linking assistant, publish packager. Put the logic in SKILL.md, not in your head. That is how you scale consistency without re-explaining yourself every time.
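To make that concrete, here is a sketch of what a brand voice checker skill might look like, plus a tiny sanity check on its frontmatter. I'm assuming SKILL.md opens with YAML frontmatter containing `name` and `description`, which matches my reading of Anthropic's Skills docs; verify against the current docs before relying on it. The skill content, `voice-rules.md`, and the helper functions are hypothetical, and the parser only handles flat `key: value` lines.

```python
SKILL_TEMPLATE = """\
---
name: brand-voice-checker
description: Checks a draft against the brand voice rules. Use when reviewing blog drafts before publishing.
---

# Brand voice checker

1. Read the draft supplied by the user.
2. Compare it against the rules in voice-rules.md.
3. List each violation with the offending sentence and a suggested rewrite.
"""


def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse flat `key: value` frontmatter between the first two '---' lines."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return meta
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return {}  # no closing delimiter found


def missing_skill_fields(text: str) -> list[str]:
    """Return which of the assumed required fields are absent or empty."""
    meta = parse_frontmatter(text)
    return [key for key in ("name", "description") if not meta.get(key)]


print(missing_skill_fields(SKILL_TEMPLATE))
```

A check like this is small, but it catches the skill that never gets discovered because its description is blank.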

7. Shipping the article body but skipping the rest of the package

With Claude Code, many founders think the job is done once the blog draft exists. It is not.

A publish-ready content asset is more than body copy. It also needs:

Meta title

Meta description

Schema

Internal link plan

Image plan

CTA

Source grounding

Distribution cutdowns

If you skip those and promise to "patch it later," you create content debt immediately. Later, every post becomes a manual cleanup job.

✅ What to do instead: Define "done" as a complete publishing artifact, not just "article generated." If it is missing schema, metadata, image logic, or linking — it is not done.
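A definition of done is easy to encode as a completeness check on the package. This is a sketch under my own assumptions: the field names mirror the list above, and `missing_from_package` and `is_done` are hypothetical helpers, not part of any CMS or Claude Code API.

```python
# Hypothetical field names for a publish-ready package, mirroring the list above.
REQUIRED_FIELDS = [
    "body",
    "meta_title",
    "meta_description",
    "schema",
    "internal_links",
    "image_plan",
    "cta",
    "sources",
    "distribution_cutdowns",
]


def missing_from_package(package: dict) -> list[str]:
    """Return required fields that are absent or empty in the package."""
    return [field for field in REQUIRED_FIELDS if not package.get(field)]


def is_done(package: dict) -> bool:
    """Done means the full artifact exists, not just the article body."""
    return not missing_from_package(package)


package = {"body": "Full article text", "meta_title": "Pricing for seed-stage SaaS"}
print(is_done(package), missing_from_package(package))
```

Run it as the last gate before publishing and "patch it later" stops being an option.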

8. Building a Frankenstein pipeline instead of a visible system

With Claude Code, this is the late-stage failure mode.

You start simple: "Let's just use an agent to generate posts."

Then later you bolt on images, schema, CMS formatting, internal links, QA, snippets, review layers, repurposing. Now you have a content workflow nobody can clearly see, debug, or trust.

Anthropic's Claude Code best practices emphasize planning, structure, and reusable patterns over chaotic improvisation.

✅ What to do instead: Map the full workflow first. Then decide:

Which steps belong in the main Claude Code flow

Which steps should live in Claude agent skills

Which rules should be enforced by scripts / hooks

Which parts still require human judgment

That is how you avoid building a fast, brittle mess.
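One lightweight way to keep the system visible is to write the map down as data. The sketch below assigns each step to one of the four owners listed above; the step names and assignments are purely illustrative, and the right split depends on your workflow.

```python
from enum import Enum


class Owner(Enum):
    MAIN_FLOW = "main Claude Code flow"
    SKILL = "Claude agent skill"
    SCRIPT_OR_HOOK = "script or hook"
    HUMAN = "human judgment"


# Illustrative assignment only; these step names are hypothetical.
WORKFLOW_MAP = {
    "research": Owner.MAIN_FLOW,
    "outline": Owner.MAIN_FLOW,
    "draft": Owner.MAIN_FLOW,
    "brand_voice_check": Owner.SKILL,
    "seo_completeness_check": Owner.SKILL,
    "schema_validation": Owner.SCRIPT_OR_HOOK,
    "final_approval": Owner.HUMAN,
}


def unassigned_steps(steps: list[str], workflow_map: dict[str, Owner]) -> list[str]:
    """Return steps that were bolted on without a clear owner."""
    return [step for step in steps if step not in workflow_map]


def steps_for(owner: Owner, workflow_map: dict[str, Owner]) -> list[str]:
    """List every step assigned to one owner, in the order they were mapped."""
    return [step for step, assigned in workflow_map.items() if assigned is owner]


print(unassigned_steps(["draft", "image_generation"], WORKFLOW_MAP))
```

If a new step shows up in the unassigned list, that is the moment to decide where it belongs, not three bolt-ons later.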

The real lesson

Claude Code is powerful. But it does not magically create leverage on its own, not even for AI marketing agents.

It amplifies the quality of the system you build around it:

The context you pass

The standards you encode

The skills you package in SKILL.md

The evals you run

The guardrails you enforce

The judgment you keep human

If your only axis is velocity, Claude Code will help you scale mistakes.

If you pair it with real taste, clean workflows, and strong Claude agent skills, it becomes an unfair advantage.

That is the difference between shipping content faster and building a content engine that actually compounds.

Curious how others here are using Claude Code, agents, and SKILL.md to build reliable AI marketing agents that scale content without letting quality collapse.