TL;DR:

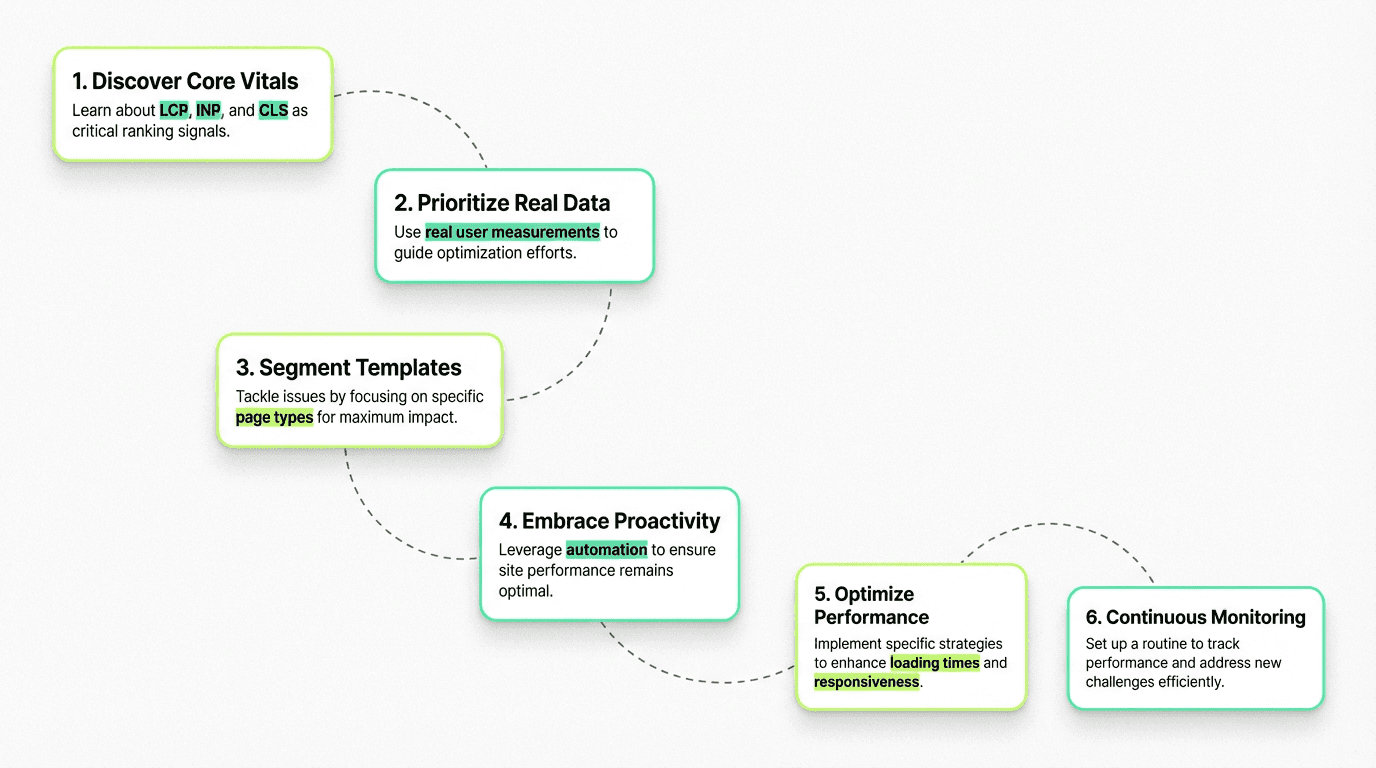

Core Web Vitals (LCP, INP, CLS) are ranking signals measuring real user experience: LCP ≤ 2.5s, INP ≤ 200ms, and CLS ≤ 0.1 are the passing thresholds

Prioritize field data over lab scores: Real user measurements from Chrome User Experience Report determine rankings; synthetic lab tests are for diagnosis, not prioritization

Segment by template type: Fix issues at the template level (homepage, product pages, blog) rather than URL-by-URL for maximum efficiency

AI has made CWV more critical: With AI-generated content flooding search results, website speed is now a primary competitive differentiator and tiebreaker

Proactive monitoring beats reactive fixes: Workflow automation tools can pull data daily, detect regressions from deploys, and alert before failures hurt rankings

Common ways to improve core web vitals: Preload LCP images, break up long JavaScript tasks for better interaction to next paint, always specify image dimensions to prevent layout shift

Platform-specific optimization: WordPress users should check plugins like WP Rocket for caching, image optimization tools, and proper HTML structure

Avoid these mistakes: Don't chase perfect lab scores, neglect mobile, or treat CWV as one-time—continuous monitoring and budgets are essential

Use the right tools: Search Console vitals report for overview, PageSpeed Insights for diagnosis, URL inspection for verification, and RUM tools for ongoing assessment

If you've noticed your perfectly optimized content slipping in search rankings despite strong backlinks and keywords, the culprit might be hiding in plain sight: Core Web Vitals. Google's user experience metrics have evolved from nice-to-have indicators to critical ranking factors that can make or break your SEO strategy.

In an era where AI-generated content floods search results and hundreds of pages compete for the same queries, CWV SEO has become the differentiator that separates winners from also-rans. Fast websites don't just rank better—they convert better, especially for users arriving via AI-referred traffic who expect instant, seamless answers.

This comprehensive guide will walk you through everything you need to know about Google's web vitals: what they measure, why they matter for your website, how to test and check them properly using the right tools, and most importantly, how to prioritize fixes using real-world field data instead of chasing perfect lab scores.

What Are Core Web Vitals?

Core Web Vitals (CWV) are a set of standardized metrics that search engines use to evaluate the real-world user experience of your website. Unlike traditional speed metrics that focus on technical load times, these vitals measure what users actually experience when they interact with your site.

Announced in 2020 as part of Google's page experience signals and rolled into ranking in 2021, these metrics have become increasingly important ranking factors. Search engines use them as tiebreakers when content quality is comparable, and in today's landscape of AI-generated content saturation, that tiebreaker role has never been more critical.

The beauty of web vitals is that they're not arbitrary technical benchmarks. They represent real user frustrations: websites that take forever to show content, buttons that move just as you're about to click them, and interfaces that freeze when you try to interact.

The Three Core Web Vitals Metrics Explained

Largest Contentful Paint (LCP): Loading Performance

LCP measures how long it takes for the largest visible content element to render on screen. This could be a hero image, a video thumbnail, or a large text block—whatever dominates the viewport when your website loads.

Target threshold: LCP ≤ 2.5 seconds

A good LCP score means users see meaningful content quickly. They're not staring at blank screens or loading spinners wondering if your website is broken. When LCP exceeds 4 seconds, you're in "poor" territory, and users are likely bouncing before they even see what you have to offer.

Common LCP elements include:

Hero images and banner graphics

Video thumbnails above the fold

Large heading text blocks

Full-width carousel images

Interaction to Next Paint (INP): Responsiveness

INP replaced First Input Delay (FID) in March 2024 as the official responsiveness metric. While FID only measured the first interaction, INP evaluates the responsiveness of all user interactions throughout the entire page visit.

Target threshold: INP ≤ 200 milliseconds

INP captures the delay between when a user clicks, taps, or types and when the browser actually responds with visual feedback. Poor INP creates the frustrating experience of clicking a button and wondering if anything happened—that split-second uncertainty that makes interfaces feel broken.

INP is particularly challenging because it measures real user interactions across diverse devices and network conditions. A website might feel snappy on your high-end development machine but lag painfully on a mid-range mobile phone with a crowded CPU.

Cumulative Layout Shift (CLS): Visual Stability

CLS quantifies how much unexpected layout shift occurs during the lifespan of a page. Every time an element shifts position without user input (an ad loads and pushes content down, a font swaps and reflows text, or an image appears without dimensions), it contributes to your CLS score.

Target threshold: CLS ≤ 0.1

Layout shifts are more than annoying—they're actively harmful. Users accidentally click the wrong links, submit forms prematurely, or lose their reading position. High CLS is the digital equivalent of trying to read a book while someone keeps moving the pages.
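To make the CLS number less abstract, here is a minimal sketch of how a score can be derived from individual shifts. It assumes Chrome's current definition as I understand it: each shift scores impact fraction × distance fraction, and CLS is the largest total within a "session window" (shifts less than 1 second apart, window capped at 5 seconds). The `LayoutShift` shape and function names are illustrative, and the input is assumed to already exclude shifts that follow user input.

```typescript
// One unexpected layout shift: when it happened and how much it scored.
interface LayoutShift {
  timeMs: number;
  score: number;
}

// Score of a single shift: share of the viewport affected multiplied by
// how far the content moved (as a fraction of the viewport).
function shiftScore(impactFraction: number, distanceFraction: number): number {
  return impactFraction * distanceFraction;
}

// CLS = largest sum of shift scores inside one session window.
// A new window starts after a 1 s gap or once the window spans 5 s.
function cumulativeLayoutShift(shifts: LayoutShift[]): number {
  const sorted = shifts.slice().sort((a, b) => a.timeMs - b.timeMs);
  let best = 0;
  let windowSum = 0;
  let windowStart = 0;
  let previousTime = 0;
  let first = true;
  for (const shift of sorted) {
    const startsNewWindow =
      first ||
      shift.timeMs - previousTime >= 1000 ||
      shift.timeMs - windowStart >= 5000;
    if (startsNewWindow) {
      windowSum = 0;
      windowStart = shift.timeMs;
    }
    windowSum += shift.score;
    previousTime = shift.timeMs;
    first = false;
    if (windowSum > best) best = windowSum;
  }
  return best;
}
```

For example, content covering 75% of the viewport that moves by 25% of its height scores `shiftScore(0.75, 0.25)`, which on its own is already above the 0.1 threshold.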

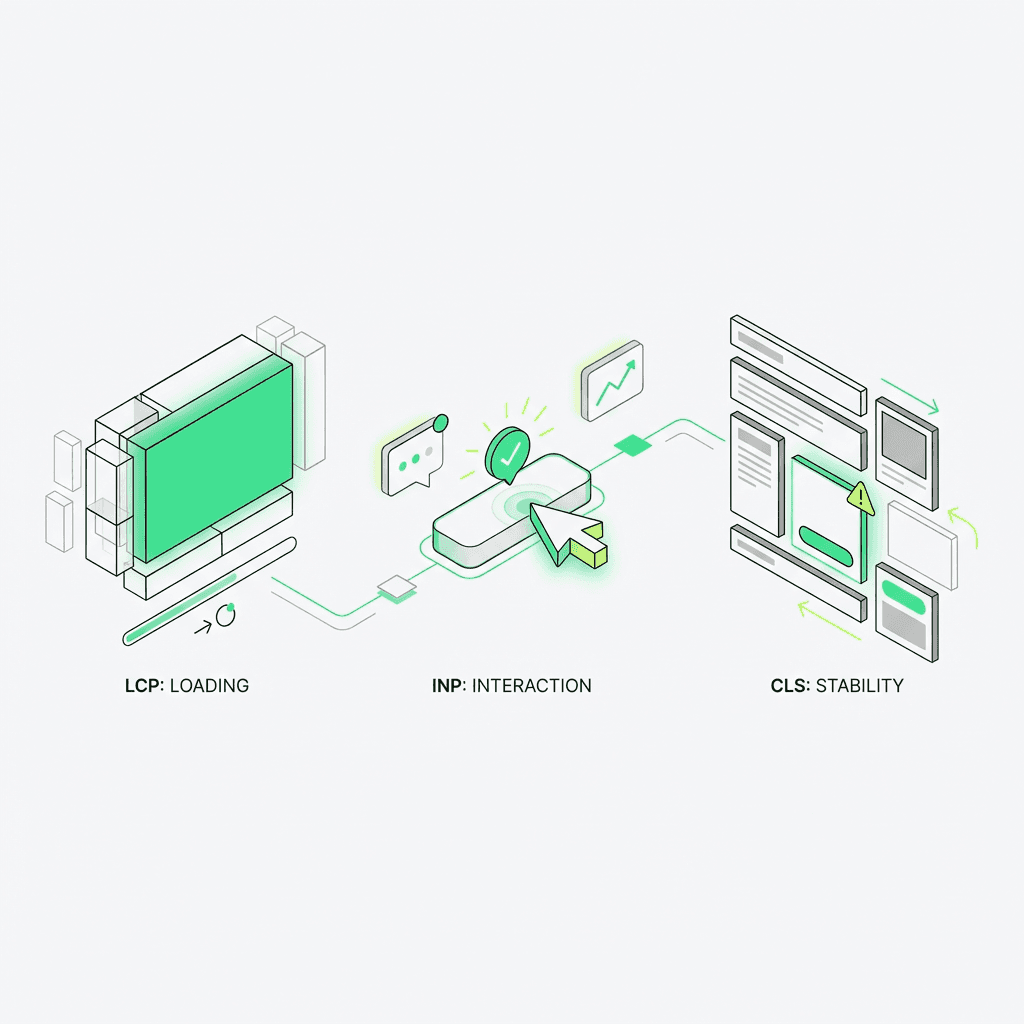

Field Data vs Lab Data: Understanding the Difference

One of the most critical concepts in web vitals testing methodology is understanding the distinction between field and lab measurements. This difference fundamentally changes how you should prioritize optimization work.

Field Data: Real User Monitoring (RUM)

Field data comes from actual users visiting your website in the wild. The Chrome User Experience Report (CrUX) aggregates anonymized metrics from real Chrome users who have opted into usage statistics.

Field data reflects:

Real-world network conditions (3G, 4G, 5G, WiFi)

Diverse device capabilities (flagship phones to budget tablets)

Actual user behavior patterns

Geographic variations in connectivity

Time-of-day server load differences

CrUX is what search engines use for ranking decisions. It's the ground truth of user experience. When you check PageSpeed Insights or the vitals report in Search Console, the field section shows you what real users are experiencing.
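Field assessments are made at the 75th percentile of page loads, so a few slow visits do not fail a page on their own. The sketch below illustrates that idea with a simple nearest-rank percentile over raw samples. This is a simplification for illustration: CrUX itself publishes aggregated histograms and percentiles rather than raw samples, and the sample values here are made up.

```typescript
// Nearest-rank percentile over raw samples (illustrative simplification).
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Hypothetical LCP samples in milliseconds from eight page loads.
const lcpSamplesMs = [1200, 1800, 2100, 2400, 2600, 3100, 1900, 2200];
const lcpP75 = percentile(lcpSamplesMs, 75);
const passesLcp = lcpP75 <= 2500;
```

In this made-up sample two loads are slower than 2.5 seconds, yet the 75th percentile still lands within the "good" range.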

Lab Data: Controlled Testing

Lab measurements come from synthetic tests run in controlled environments—tools like Lighthouse, WebPageTest, or the lab section of PageSpeed Insights. These tests use standardized devices, network throttling, and consistent conditions.

Lab testing is excellent for:

Diagnosing specific technical issues

Testing changes before deployment

Comparing different optimization approaches

Debugging in development environments

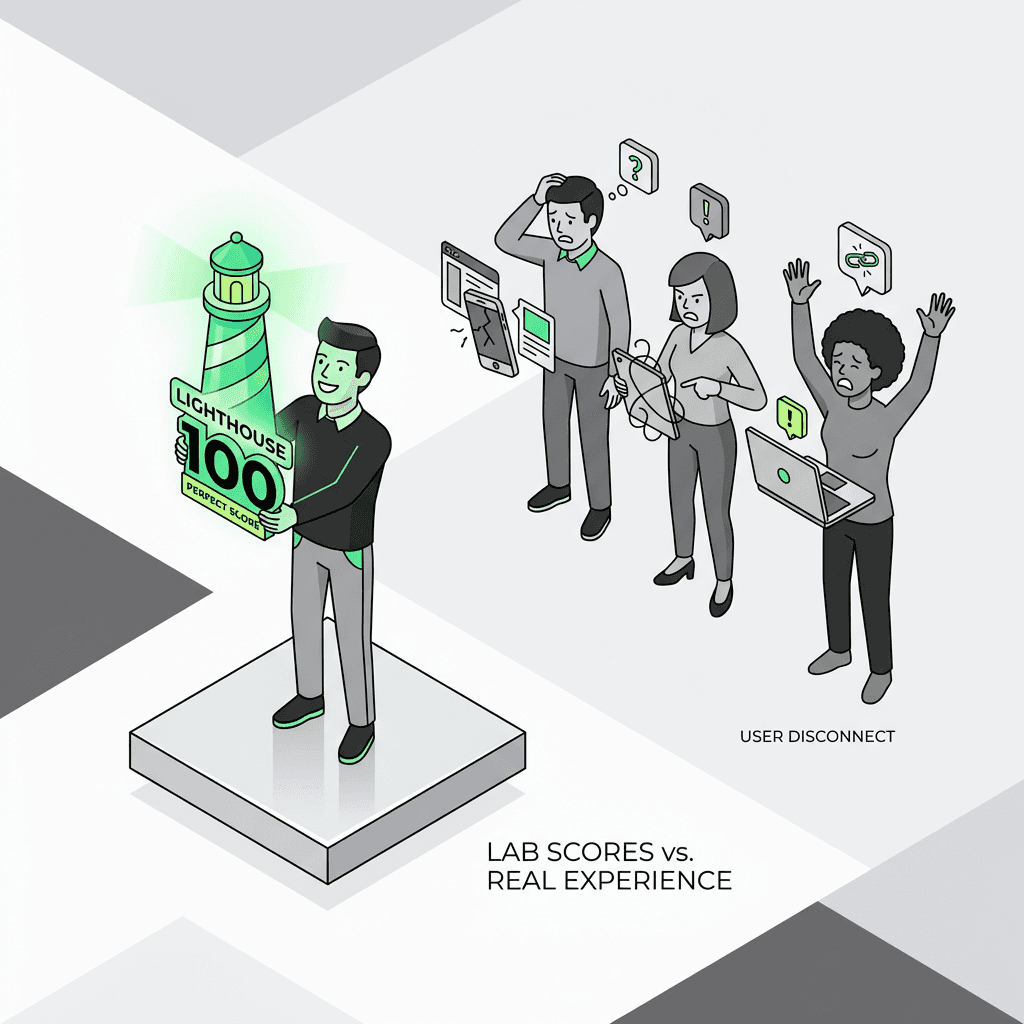

However, lab scores can be misleading. A perfect 100 Lighthouse score doesn't guarantee good vitals in the field. Your users aren't browsing on a simulated Moto G4 with 4G throttling—they're on everything from the latest iPhone to a three-year-old Android device on spotty subway WiFi.

The Golden Rule: Prioritize Field Data

Use field measurements for prioritization, not just lab scores. If your lab tests show perfect results but field data reveals poor CWV, trust the field. Real users are struggling, even if your synthetic tests aren't capturing why.

Conversely, if field results show good vitals but lab scores are mediocre, don't obsess over achieving perfect lab numbers. Your actual users are having good experiences—that's what matters for both rankings and conversions.

How to Measure Core Web Vitals

Google Search Console: Core Web Vitals Report

The vitals report in Search Console is your starting point. It shows which URLs are passing or failing thresholds based on field data, grouped by similar pages.

This report segments URLs into:

Good: Meeting all three thresholds

Needs improvement: Between the good and poor thresholds

Poor: Failing one or more thresholds
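The three buckets can be expressed as a small classifier. This is a sketch, not Search Console's implementation: the "good" limits are the thresholds from this guide, the "poor" limits (4 s, 500 ms, 0.25) are the published boundaries as I understand them, and a group takes the status of its worst metric.

```typescript
type Metric = "LCP" | "INP" | "CLS";
type Rating = "good" | "needs-improvement" | "poor";

// LCP and INP in milliseconds, CLS unitless.
const THRESHOLDS: Record<Metric, { good: number; poor: number }> = {
  LCP: { good: 2500, poor: 4000 },
  INP: { good: 200, poor: 500 },
  CLS: { good: 0.1, poor: 0.25 },
};

// Rate a single 75th-percentile value.
function rate(metric: Metric, p75: number): Rating {
  const limits = THRESHOLDS[metric];
  if (p75 <= limits.good) return "good";
  if (p75 <= limits.poor) return "needs-improvement";
  return "poor";
}

// A URL group is only as good as its worst metric.
function groupStatus(p75: Record<Metric, number>): Rating {
  const ratings: Rating[] = [
    rate("LCP", p75.LCP),
    rate("INP", p75.INP),
    rate("CLS", p75.CLS),
  ];
  if (ratings.indexOf("poor") !== -1) return "poor";
  if (ratings.indexOf("needs-improvement") !== -1) return "needs-improvement";
  return "good";
}
```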

The grouping mechanism is powerful: Search Console identifies patterns across similar page types (product pages, blog posts, category pages) so you can fix issues at the template level rather than URL-by-URL.

PageSpeed Insights: Deep Diagnostics

PageSpeed Insights (PSI) combines both field data from the Chrome User Experience Report and lab measurements from Lighthouse. Enter any URL and you'll see:

Field data (if available): Real user CWV over the past 28 days

Lab data: Synthetic test results with specific diagnostic recommendations

Opportunities: Prioritized suggestions for optimization

Diagnostics: Technical issues affecting speed

PSI is invaluable for diagnosing why a website fails vitals. The lab measurements may not perfectly match field conditions, but the diagnostic insights—render-blocking resources, oversized images, excessive JavaScript—point you toward solutions.

CrUX Dashboard and API

For more granular assessment, the CrUX dashboard (available in Looker Studio) and API provide historical trends and device-specific breakdowns. You can see how vitals vary between desktop and mobile, track improvements over time, and identify regression patterns.

The API is particularly useful for integrating monitoring into your development workflow. More on that in the AI and automation section below.
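As a rough sketch of what a CrUX API integration involves, the helpers below build a `records:queryRecord` request and read the 75th-percentile values out of a response. The endpoint, metric names, and response shape reflect the public API as I understand it, so check them against the current reference before relying on them. The API key is a placeholder, and the HTTP call itself is deliberately left to whatever client you already use.

```typescript
// Request body for the CrUX API queryRecord method.
interface CruxQuery {
  origin?: string;
  url?: string;
  formFactor?: "PHONE" | "DESKTOP" | "TABLET";
  metrics: string[];
}

const CRUX_ENDPOINT =
  "https://chromeuxreport.googleapis.com/v1/records:queryRecord";

// Returns the URL and JSON body to send as an HTTP POST.
function buildCruxRequest(apiKey: string, query: CruxQuery): { url: string; body: string } {
  return {
    url: CRUX_ENDPOINT + "?key=" + encodeURIComponent(apiKey),
    body: JSON.stringify(query),
  };
}

// Extracts p75 per metric. CLS arrives as a string, so every value
// goes through Number(). Metrics without percentiles are skipped.
function extractP75(responseJson: string): Record<string, number> {
  const parsed = JSON.parse(responseJson);
  const metrics = (parsed.record && parsed.record.metrics) || {};
  const result: Record<string, number> = {};
  for (const name of Object.keys(metrics)) {
    const percentiles = metrics[name].percentiles;
    if (percentiles && percentiles.p75 !== undefined) {
      result[name] = Number(percentiles.p75);
    }
  }
  return result;
}

const cruxRequest = buildCruxRequest("YOUR_API_KEY", {
  origin: "https://www.example.com",
  formFactor: "PHONE",
  metrics: [
    "largest_contentful_paint",
    "interaction_to_next_paint",
    "cumulative_layout_shift",
  ],
});
```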

Diagnosing and Fixing Core Web Vitals Issues

Optimizing LCP (Largest Contentful Paint)

Common LCP problems:

Slow server response times: If your TTFB (Time to First Byte) exceeds 600ms, the browser can't even start rendering quickly

Render-blocking resources: CSS and JavaScript that must load before the website can paint

Slow resource load times: Massive unoptimized images or videos

Client-side rendering: JavaScript frameworks that render content after initial load

Ways to improve LCP:

Optimize your server: Use CDNs, implement caching, upgrade hosting if necessary

Preload critical resources: Use `<link rel="preload">` for LCP images and fonts

Optimize images: Compress, use modern formats (WebP, AVIF), implement responsive images with `srcset`

Eliminate render-blocking resources: Defer non-critical CSS and JavaScript, inline critical CSS

Use server-side rendering: For JavaScript frameworks, render initial content on the server
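Two of these fixes, preloading and responsive images, come down to the markup your templates emit. The helpers below are a minimal sketch of that markup as strings. The file paths and the `?w=` resizing parameter are hypothetical, and the helpers do no HTML escaping, so treat them as an illustration rather than a template engine.

```typescript
// Preload hint for the LCP image. fetchpriority="high" is a hint that
// browsers without support simply ignore.
function lcpPreloadTag(href: string, imageType?: string): string {
  const typeAttr = imageType ? ' type="' + imageType + '"' : "";
  return '<link rel="preload" as="image" href="' + href + '"' + typeAttr + ' fetchpriority="high">';
}

// srcset value listing one candidate per width, e.g. "hero.jpg?w=480 480w".
// The "?w=" parameter assumes an image service that resizes on request.
function buildSrcset(src: string, widths: number[]): string {
  return widths.map((w) => src + "?w=" + w + " " + w + "w").join(", ");
}

const preload = lcpPreloadTag("/img/hero.avif", "image/avif");
const srcset = buildSrcset("/img/hero.jpg", [480, 960, 1440]);
```

Avoid lazy-loading the image you preload: the two hints work against each other for an above-the-fold LCP element.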

Improving INP (Interaction to Next Paint)

Common INP problems:

Long JavaScript tasks: Heavy scripts that block the main thread

Excessive event handlers: Too many listeners competing for processing time

Large DOM sizes: Thousands of elements that slow down rendering updates

Unoptimized third-party scripts: Analytics, ads, and widgets that hog resources

Ways to improve INP:

Break up long tasks: Split JavaScript work into smaller chunks using `setTimeout` or `requestIdleCallback`

Debounce and throttle: Limit how often event handlers fire for scroll, resize, and input events

Optimize DOM updates: Batch changes, use virtual scrolling for long lists

Lazy-load third-party scripts: Defer non-essential widgets until after user interaction

Use web workers: Move heavy computations off the main thread
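Breaking up long tasks is the fix that most often needs a code change, so here is a minimal sketch of the `setTimeout` approach mentioned above. `yieldToMain` and `processInChunks` are illustrative names, and the right chunk size depends on how expensive each item is on your slowest target devices.

```typescript
// Hands control back to the event loop so pending input and paints can run.
function yieldToMain(): Promise<void> {
  return new Promise<void>((resolve) => {
    setTimeout(() => resolve(), 0);
  });
}

// Runs `work` over `items` in small batches, yielding between batches
// instead of blocking the main thread for the whole list.
async function processInChunks<T, R>(
  items: T[],
  work: (item: T) => R,
  chunkSize: number
): Promise<R[]> {
  const results: R[] = [];
  for (let start = 0; start < items.length; start += chunkSize) {
    const chunk = items.slice(start, start + chunkSize);
    for (const item of chunk) {
      results.push(work(item));
    }
    await yieldToMain();
  }
  return results;
}
```

A call such as `processInChunks(rows, renderRow, 50)` (with your own `rows` and `renderRow`) returns the same results as a plain loop, but gives the browser a chance to respond to a tap or keypress between batches.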

Reducing CLS (Cumulative Layout Shift)

Common CLS problems:

Images without dimensions: Browser can't reserve space before image loads

Ads and embeds: Dynamic content that injects without reserved space

Web fonts: Font swapping that causes text reflow

Dynamic content injection: Content that appears and pushes existing elements

Ways to fix CLS:

Always specify image dimensions: Use `width` and `height` attributes or CSS aspect-ratio

Reserve space for ads: Define fixed containers for ad slots

Optimize font loading: Use `font-display: optional` or `font-display: swap` with fallback fonts whose metrics closely match the web font

Avoid inserting content above existing content: Add new elements below the fold or use overlays

Preload fonts: Use `<link rel="preload" as="font">` for critical web fonts
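Reserving space is simple arithmetic: when the browser knows an image's intrinsic width and height, it can work out the rendered height before the file arrives. The sketch below shows that calculation plus a fixed-size slot style for ads or embeds. The function names and the 300×250 slot are illustrative assumptions.

```typescript
// Height to reserve for an image displayed at `renderedWidth`, based on
// the intrinsic dimensions declared in its width/height attributes.
function reservedHeight(
  intrinsicWidth: number,
  intrinsicHeight: number,
  renderedWidth: number
): number {
  return Math.round((renderedWidth * intrinsicHeight) / intrinsicWidth);
}

// Inline style that holds a fixed slot open before an ad or embed loads.
function slotStyle(width: number, height: number): string {
  return "width: " + width + "px; min-height: " + height + "px;";
}

// A 1200x630 image shown 400px wide needs 210px of vertical space.
const heroHeight = reservedHeight(1200, 630, 400);
const adSlot = slotStyle(300, 250);
```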

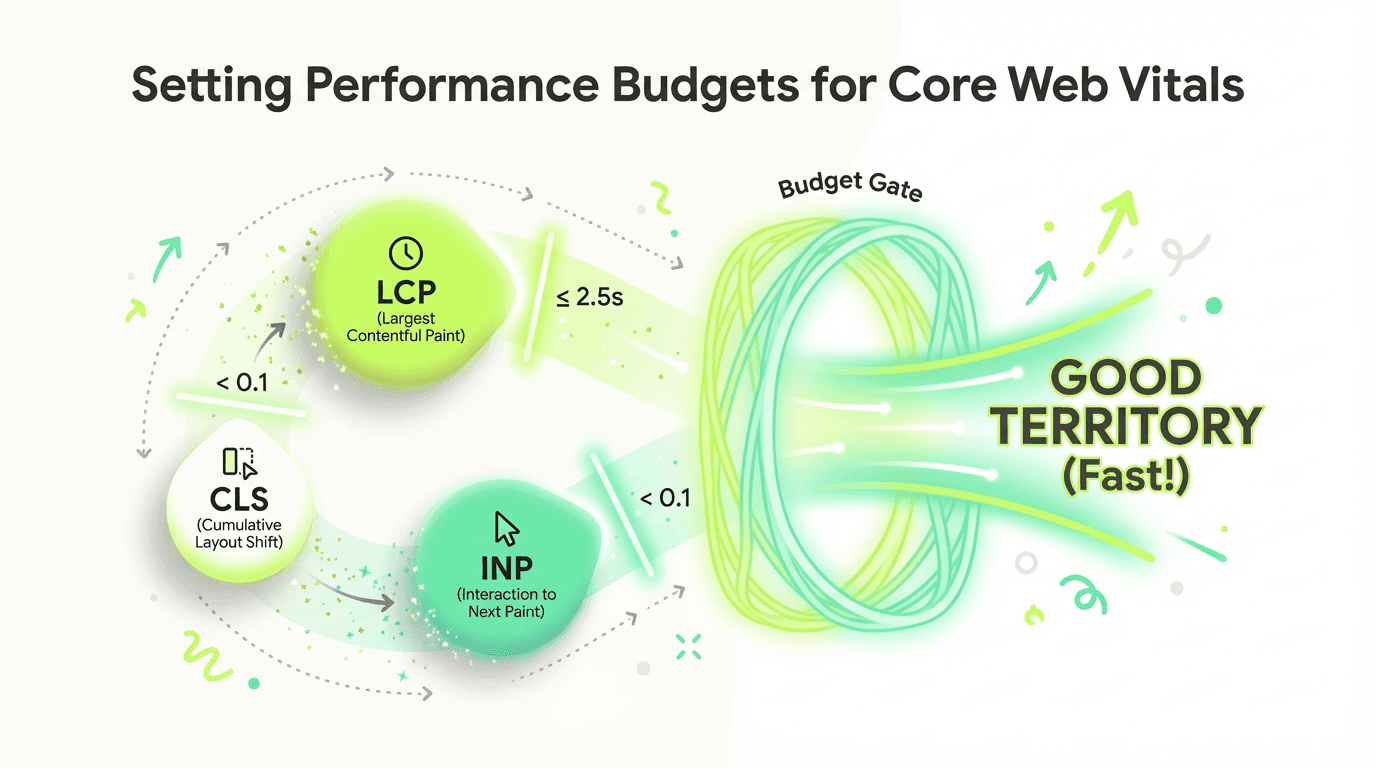

Setting Performance Budgets for Core Web Vitals

A budget is a set of limits you impose on factors that affect website speed. For CWV, your budgets should reflect the thresholds that keep you in "good" territory:

Web vitals budgets:

LCP ≤ 2.5 seconds (aim for 2.0s to build in a safety margin)

INP ≤ 200 milliseconds (aim for 150ms to leave a buffer)

CLS ≤ 0.1 (aim for 0.05 for critical templates)
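A budget is easiest to enforce when it lives in code. The sketch below compares a set of 75th-percentile values against the tighter targets listed above and reports each violation. The `VitalsSnapshot` shape and the message format are assumptions for illustration.

```typescript
interface VitalsSnapshot {
  lcpMs: number;
  inpMs: number;
  cls: number;
}

// Targets from the list above, tighter than the passing thresholds.
const BUDGET: VitalsSnapshot = { lcpMs: 2000, inpMs: 150, cls: 0.05 };

// Returns one message per metric that exceeds its budget.
function budgetViolations(p75: VitalsSnapshot, budget: VitalsSnapshot = BUDGET): string[] {
  const violations: string[] = [];
  if (p75.lcpMs > budget.lcpMs) {
    violations.push("LCP " + p75.lcpMs + "ms exceeds " + budget.lcpMs + "ms");
  }
  if (p75.inpMs > budget.inpMs) {
    violations.push("INP " + p75.inpMs + "ms exceeds " + budget.inpMs + "ms");
  }
  if (p75.cls > budget.cls) {
    violations.push("CLS " + p75.cls + " exceeds " + budget.cls);
  }
  return violations;
}
```

A template at 2.3 s LCP still passes Google's threshold but fails this budget, which is the point of the safety margin.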

Segment Budgets by Template Type

Different templates have different characteristics and constraints:

Homepage: Strictest budgets—this is your first impression

Product/service pages: Balance rich media with speed

Blog posts: Optimize for reading experience, watch for ad-related shift issues

Category/listing pages: Manage large DOM sizes and infinite scroll

Checkout/conversion pages: Prioritize INP—responsiveness is critical

Enforce Budgets in Your Workflow

Budgets only work if you enforce them. Integrate CWV checks into:

Development: Run Lighthouse CI in pull requests

Staging: Test representative pages before production deployment

Production: Monitor field data continuously and alert on regressions
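For the development step, a Lighthouse CI configuration might look roughly like the object below, serialized to a `lighthouserc.json` file. The staging URLs are placeholders. Because lab runs contain no real interactions, Total Blocking Time stands in for INP here, and its 200ms limit is an assumed starting point rather than an official INP equivalent.

```typescript
// Sketch of a Lighthouse CI config; write the JSON to lighthouserc.json.
const lighthouseCiConfig = {
  ci: {
    collect: {
      // One representative page per template (placeholder URLs).
      url: [
        "https://staging.example.com/",
        "https://staging.example.com/product/sample",
      ],
      numberOfRuns: 3,
    },
    assert: {
      assertions: {
        "largest-contentful-paint": ["error", { maxNumericValue: 2500 }],
        "cumulative-layout-shift": ["error", { maxNumericValue: 0.1 }],
        // Lab proxy for responsiveness; only warns, since it is not INP.
        "total-blocking-time": ["warn", { maxNumericValue: 200 }],
      },
    },
  },
};

const lighthouseRcJson = JSON.stringify(lighthouseCiConfig, null, 2);
```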

How AI Is Changing Core Web Vitals Strategy

The rise of AI-generated content has fundamentally shifted the SEO landscape—and made web vitals more important than ever. Here's why:

Performance as a Competitive Differentiator

When dozens of AI-generated articles target the same query with similar keyword optimization, search engines need tiebreakers. CWV serve exactly this purpose. Two pages with comparable content quality? The faster website wins.

As AI tools for content marketing democratize content creation, the playing field for written quality has leveled. Speed optimization is now a primary way to stand out. Websites that invest in these vitals gain a measurable edge in rankings and click-through rates.

AI-Referred Traffic Expects Speed

Users arriving from AI assistants, chatbots, and search agents have elevated expectations. They've just received an instant answer from an AI—when they click through to your website, they expect the same responsiveness. Slow loading creates jarring cognitive dissonance.

Fast websites correlate strongly with better conversion rates on AI-referred traffic. Users who experience good vitals are more likely to engage, convert, and return. Poor CWV creates friction precisely when you need to deliver on the AI's promise.

AI SEO Tools for Performance Monitoring

Traditional monitoring is reactive: you discover problems after they've already hurt rankings. Modern AI productivity tools and automation platforms enable proactive management.

The Metaflow Agent Opportunity: Proactive Performance Monitoring

This is where AI-driven SEO platforms can transform your approach to web vitals management.

Traditional workflows are fragmented: you check Search Console weekly, manually run PageSpeed tests, export CSV files, and try to correlate changes with code deployments. By the time you notice a regression, it's already affecting rankings.

Metaflow AI offers a fundamentally different paradigm: a no-code AI agent builder for designing monitoring flows. Here's how a performance monitoring flow works:

Automated Daily CrUX Monitoring

A workflow can pull CrUX data daily via the API, tracking vitals trends across all your critical templates. CrUX reports a rolling 28-day window rather than real-time values, but a daily pull surfaces a shift in field data as soon as it starts to register instead of at your next manual Search Console check.

Template-Level Segmentation

The system automatically segments data by template type—homepage, product pages, blog posts, category pages. When a regression occurs, you immediately know which template is affected, dramatically reducing diagnosis time.
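If you want to approximate this segmentation yourself, mapping URL paths to templates with a handful of patterns gets you most of the way. The patterns below are hypothetical and need to match your own routing; this is a sketch of the idea, not how any particular platform does it.

```typescript
// Hypothetical path patterns; the first match wins.
const TEMPLATE_RULES: { template: string; pattern: RegExp }[] = [
  { template: "homepage", pattern: /^\/$/ },
  { template: "product", pattern: /^\/products?\// },
  { template: "blog", pattern: /^\/blog\// },
  { template: "category", pattern: /^\/(category|collections)\// },
];

function templateFor(path: string): string {
  for (const rule of TEMPLATE_RULES) {
    if (rule.pattern.test(path)) return rule.template;
  }
  return "other";
}

// Counts how many of the given paths fall under each template.
function countByTemplate(paths: string[]): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const path of paths) {
    const template = templateFor(path);
    counts[template] = (counts[template] || 0) + 1;
  }
  return counts;
}
```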

Deploy-Triggered Performance Gates

Integrate the workflow into your CI/CD pipeline. Before code reaches production, the system runs comparative tests: "Will this deploy degrade LCP on product pages?" If projected CWV drops below your budget, the system alerts the team or even blocks the deployment automatically.

Intelligent Alerting

Not all changes matter equally. A smart workflow can apply intelligent thresholds: alert when LCP increases by more than 10% on high-traffic templates, but ignore minor fluctuations on low-volume pages. This signal-to-noise optimization prevents alert fatigue.
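The alerting rule described here fits in a few lines. In this sketch the 10% increase comes from the paragraph above, while the traffic floor of 1,000 daily page views is an assumed value you would tune to your own site.

```typescript
interface TemplateTrend {
  template: string;
  dailyPageViews: number;
  previousLcpMs: number;
  currentLcpMs: number;
}

// Alert only when a high-traffic template's LCP rises by more than
// `maxIncrease` (0.10 = 10%) relative to the previous reading.
function shouldAlert(
  trend: TemplateTrend,
  minPageViews: number = 1000,
  maxIncrease: number = 0.1
): boolean {
  if (trend.dailyPageViews < minPageViews) return false;
  if (trend.previousLcpMs <= 0) return false;
  const increase = (trend.currentLcpMs - trend.previousLcpMs) / trend.previousLcpMs;
  return increase > maxIncrease;
}
```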

From Reactive to Proactive

The transformation is profound: instead of discovering failures after they've hurt rankings, you catch regressions before they reach users. Speed optimization shifts from a reactive firefighting exercise to a proactive CI/CD gate.

This is the power of a no-code AI workflow builder. It's not about replacing human judgment, but about reclaiming cognitive bandwidth. Your team stops manually checking dashboards and exporting reports, and starts focusing on high-impact optimization work. The system handles the monitoring, segmentation, and alerting, freeing you to actually fix issues.

Unlike rigid automation stacks that require custom coding and connector management, the natural language builder lets growth teams design these flows in minutes, experiment with different monitoring strategies, and iterate based on what actually moves the needle.

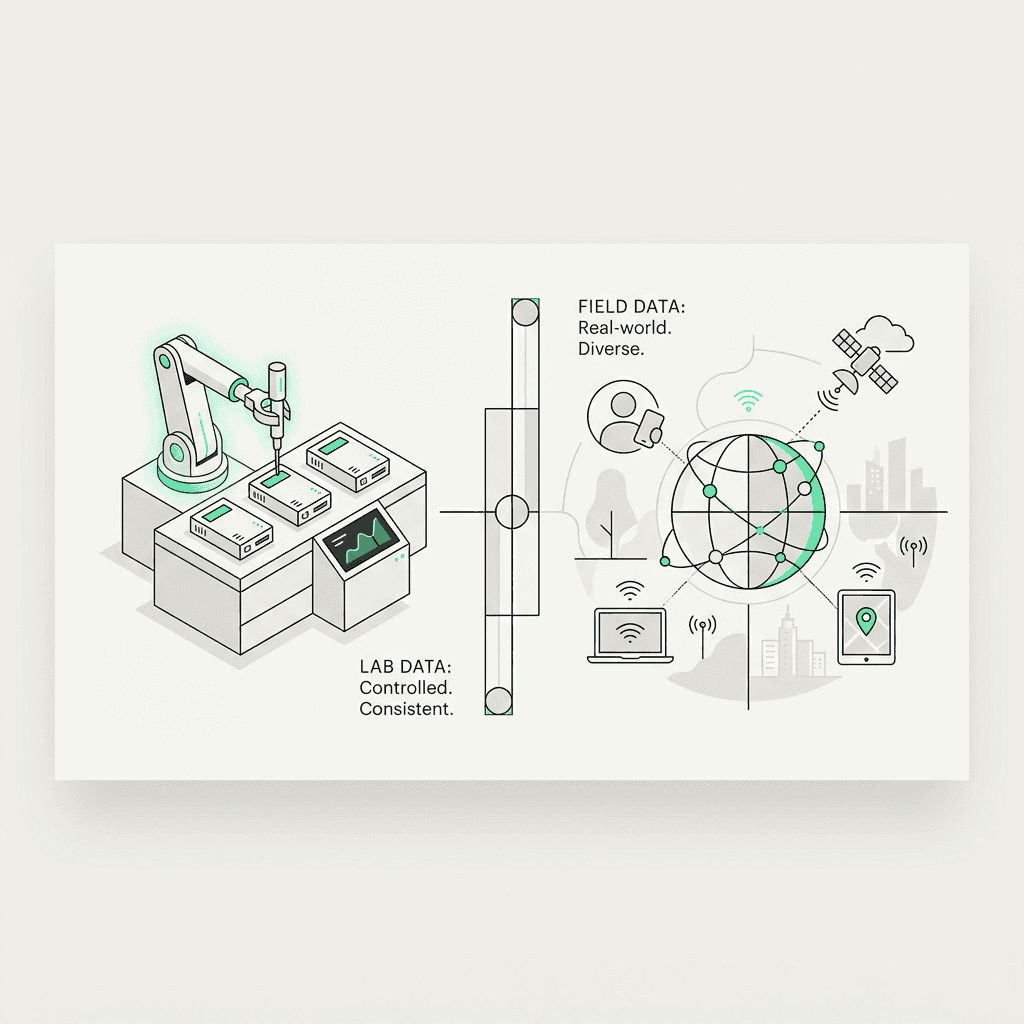

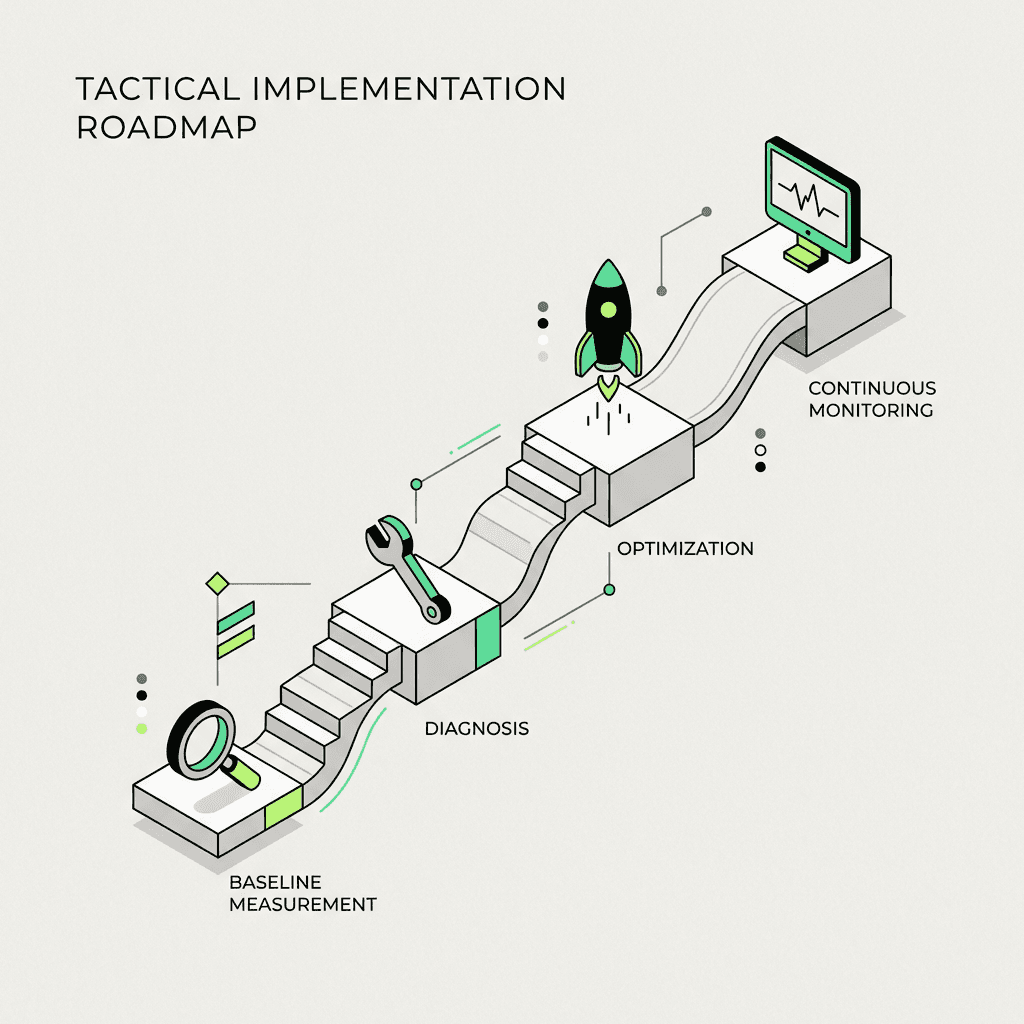

Tactical Implementation Roadmap

Ready to improve core web vitals? Follow this systematic approach:

Phase 1: Baseline Measurement (Week 1)

Audit current state: Check the vitals report in Search Console

Identify problem templates: Which types are failing thresholds?

Gather field data: Use the CrUX dashboard to understand device and geography breakdowns

Run lab tests: Use PageSpeed Insights on representative pages from each template

Phase 2: Diagnosis (Week 2)

Analyze LCP sources: What element is the LCP? What's slowing its load?

Profile INP patterns: Use Chrome DevTools to identify long tasks and slow interactions

Map CLS culprits: Which elements are shifting? When do shifts occur?

Prioritize by impact: Focus on templates with the most traffic and worst vitals

Phase 3: Optimization (Weeks 3-6)

Implement fixes template by template: Don't try to fix everything at once

Test in staging: Verify improvements with lab measurements before deploying

Deploy and monitor: Push changes and watch field data for confirmation

Iterate: Some fixes work better than others—be ready to adjust

Phase 4: Continuous Monitoring (Ongoing)

Set up automated monitoring: Use the CrUX API or workflow automation tools

Establish budgets: Define acceptable thresholds for each template

Integrate into CI/CD: Make CWV checks part of your deployment process

Review monthly: Track trends and identify new optimization opportunities

Common Core Web Vitals Mistakes to Avoid

Mistake 1: Chasing Perfect Lab Scores

A Lighthouse score of 100 looks impressive in screenshots, but it doesn't guarantee good field results. Real users face conditions your lab tests can't simulate. Optimize for field CWV, not vanity numbers.

Mistake 2: Ignoring Mobile Performance

Most traffic is mobile, and mobile devices have less processing power and slower networks. If you only test on desktop, you're missing the majority of your users' experience.

Mistake 3: Fixing Symptoms, Not Causes

Adding a loading spinner doesn't improve LCP—it just makes users more aware they're waiting. Address root causes: slow servers, bloated resources, render-blocking scripts.

Mistake 4: Treating CWV as a One-Time Project

Website speed degrades over time as you add features, content, and third-party scripts. Web vitals require ongoing monitoring and maintenance, not a one-and-done optimization sprint.

Mistake 5: Neglecting Third-Party Scripts

Analytics, ads, social widgets, and chat tools often contribute disproportionately to issues. Audit third-party impact regularly and be ruthless about removing scripts that don't justify their speed cost.

Platform-Specific Optimization: WordPress and Beyond

WordPress Core Web Vitals Optimization

WordPress powers over 40% of websites, making it crucial to understand platform-specific optimization strategies. Common WordPress issues include:

Theme bloat: Many themes load excessive CSS and JavaScript

Plugin conflicts: Multiple plugins can cause slow interactions and layout shifts

Unoptimized images: WordPress doesn't automatically serve modern formats

Database queries: Slow database performance affects server response time

Best WordPress plugins for CWV:

WP Rocket: Comprehensive caching and optimization plugin

Imagify or ShortPixel: Automatic image compression and WebP conversion

Perfmatters: Disable unnecessary features and scripts

Flying Scripts: Delay JavaScript execution until user interaction

Example WordPress optimization workflow:

Install a caching plugin to improve server response time

Use an image optimization plugin to reduce LCP element size

Defer non-critical JavaScript to improve interaction to next paint

Reserve space for dynamic elements to prevent cumulative layout shift

Use the URL inspection tool in Search Console to confirm the page still renders as expected, then watch the vitals report for the field results

HTML Optimization Fundamentals

Regardless of platform, clean HTML structure is foundational:

Use semantic HTML5 elements for better rendering

Minimize DOM depth and node count

Specify dimensions for all images and video elements

Preconnect to required third-party domains

Inline critical CSS for above-the-fold content

Advanced Monitoring and Assessment Strategies

Real User Monitoring (RUM) Tools

Beyond the Chrome User Experience Report, consider implementing dedicated RUM and synthetic monitoring tools:

SpeedCurve: Visualize trends and set up custom alerts

Calibre: Automated testing with historical tracking

DebugBear: Detailed vitals monitoring with root cause analysis

WebPageTest: Advanced testing with multiple locations and devices

Creating Custom Dashboards

Build custom dashboards that help your team monitor what matters:

Executive dashboard: High-level pass/fail rates by template

Developer dashboard: Detailed diagnostic data and regression tracking

Content team dashboard: Impact of content changes on loading speed

Mobile-specific view: Separate mobile and desktop tracking

Setting Up Automated Alerts

Configure alerts that notify you when important changes occur:

LCP increases by more than 500ms on any template

INP exceeds 200ms on checkout or conversion pages

CLS spikes above 0.15 on blog posts (indicating ad issues)

Overall pass rate drops below 80% for any template category
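The four example rules above can be encoded directly. This is a sketch under assumptions: the `TemplateReport` shape and the template names ("checkout", "blog") are illustrative, and `passRate` is the share of URLs in the template passing all three thresholds, expressed from 0 to 1.

```typescript
interface TemplateReport {
  template: string;
  previousLcpMs: number;
  currentLcpMs: number;
  inpMs: number;
  cls: number;
  passRate: number; // 0 to 1
}

// Returns the alert messages triggered by one template's latest report.
function alertsFor(report: TemplateReport): string[] {
  const alerts: string[] = [];
  if (report.currentLcpMs - report.previousLcpMs > 500) {
    alerts.push(report.template + ": LCP rose by more than 500ms");
  }
  if (report.template === "checkout" && report.inpMs > 200) {
    alerts.push(report.template + ": INP above 200ms");
  }
  if (report.template === "blog" && report.cls > 0.15) {
    alerts.push(report.template + ": CLS above 0.15 (check ad slots)");
  }
  if (report.passRate < 0.8) {
    alerts.push(report.template + ": pass rate below 80%");
  }
  return alerts;
}
```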

2024 Updates and What's Next

The transition from FID to INP in March 2024 represented a significant shift in how responsiveness is measured. This change means:

More comprehensive: INP captures all interactions, not just the first

More realistic: Better reflects actual user experience throughout the session

More challenging: Harder to pass because it measures more interactions

Preparing for Future Updates

While no major changes are announced for 2025-2026, you should:

Stay informed: Follow official announcements and industry research

Build flexibility: Design monitoring systems that can adapt to new metrics

Focus on fundamentals: Fast servers, optimized resources, and clean code will always matter

Monitor beta channels: Watch for experimental metrics in Chrome and developer tools

Getting Help and Resources

Official Documentation

Web.dev: Comprehensive guides on each metric and optimization technique

Chrome DevTools: Built-in profiling and diagnostic tools

Search Console Help: Documentation on interpreting vitals reports

PageSpeed Insights: Detailed recommendations for each URL

Community Resources

Web Performance Slack: Active community of speed optimization experts

Reddit r/webdev: Regular discussions on vitals and optimization

Stack Overflow: Technical troubleshooting for specific issues

Twitter #WebPerf: Real-time updates and case studies

When to Hire Experts

Consider bringing in specialists when:

You've failed to pass thresholds after multiple optimization attempts

Complex technical architecture requires deep expertise

You need to optimize at scale across thousands of pages

Third-party integrations are causing persistent issues