Every few years, the industry discovers a new gospel and starts acting like the old interface religion has finally died.

First it was the command line. Then the GUI. Then search. Then mobile. Then chat. Then voice. Now it is agents. And every time, a familiar chorus appears: this changes everything; the old way is over; the screen is melting; software is dead; the prompt box ate the product.

I build in this space, so I get why people say it. The new thing really does feel magical. Talk to an LLM for five minutes and you can feel your brain trying to rewrite its own assumptions about software. Voice is intoxicating because it removes the handbrake. Chat is intoxicating because it lets you negotiate with a machine in plain language. Agents are intoxicating because they hint at delegated labor.

But after the adrenaline wears off, something stubborn remains.

You still want to point at things.

You still want to see the options.

You still want to drag the block, move the card, tap the right choice, accept the diff, reject the bad one, inspect the draft, adjust the slider, and keep your bearings while the machine does its little magic trick.

That is not nostalgia. That is anthropology.

Ben Shneiderman was one of the key thinkers behind “direct manipulation”: the idea that computers feel more natural when you can act on visible things, get instant feedback, and avoid translating every intention into abstract commands. His classic argument was not “people like pretty interfaces.”

It was that visible objects, incremental actions, and immediate feedback make computers feel transparent enough that users can focus on the task instead of translating their intent into machine ceremony. Interfaces built from these elements, he argued, sharply lower the user’s translation burden.

Hutchins, Hollan, and Norman pushed this further with a more precise cognitive explanation for why some interfaces feel “direct”: direct manipulation feels powerful because it reduces the information-processing distance between what the user wants and what the system affords. In other words, fewer mental hops, fewer symbolic detours, less friction between intention and action.

That old HCI insight lands even harder in the AI era.

Because the thing we keep calling “friction” is actually several different phenomena wearing the same coat.

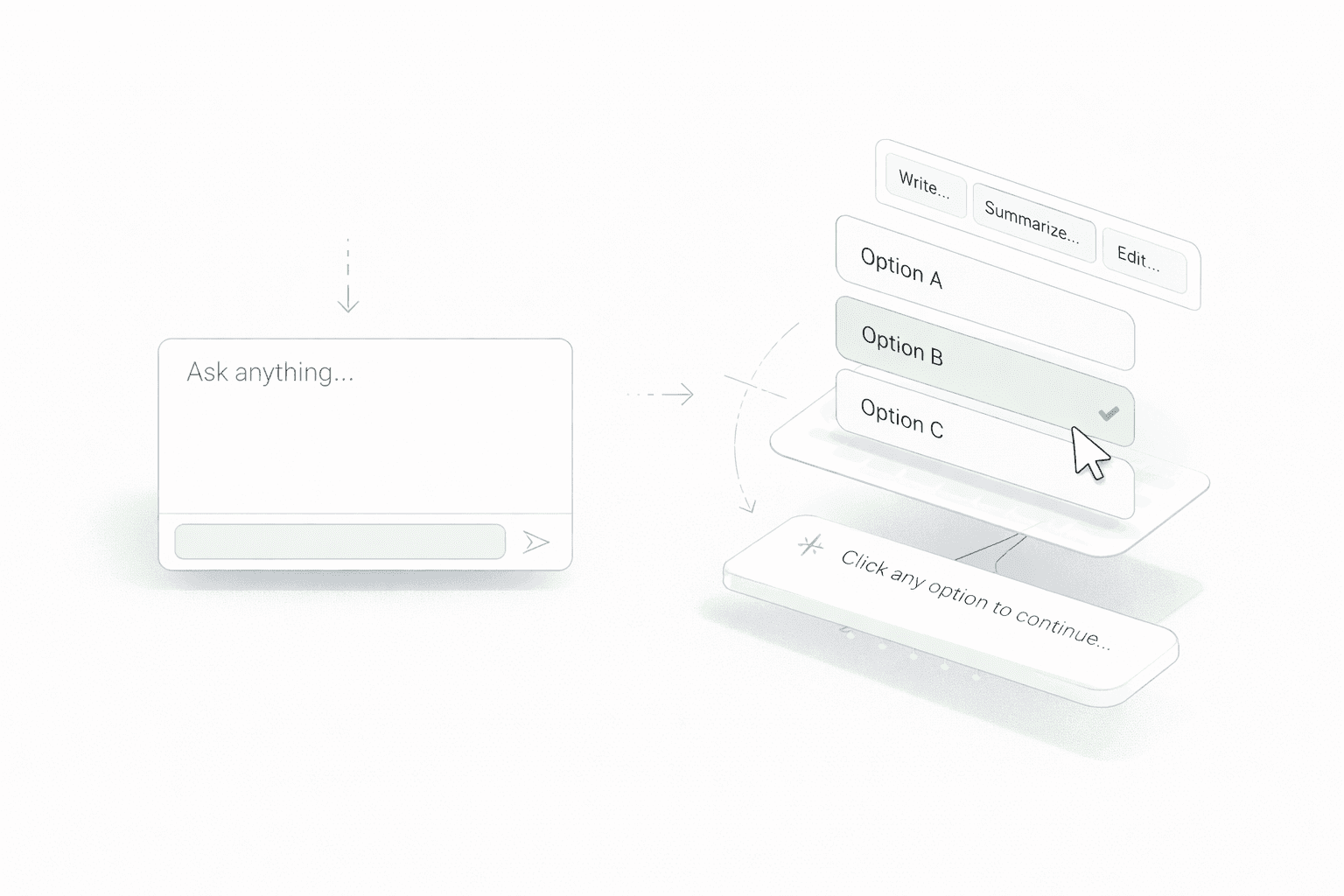

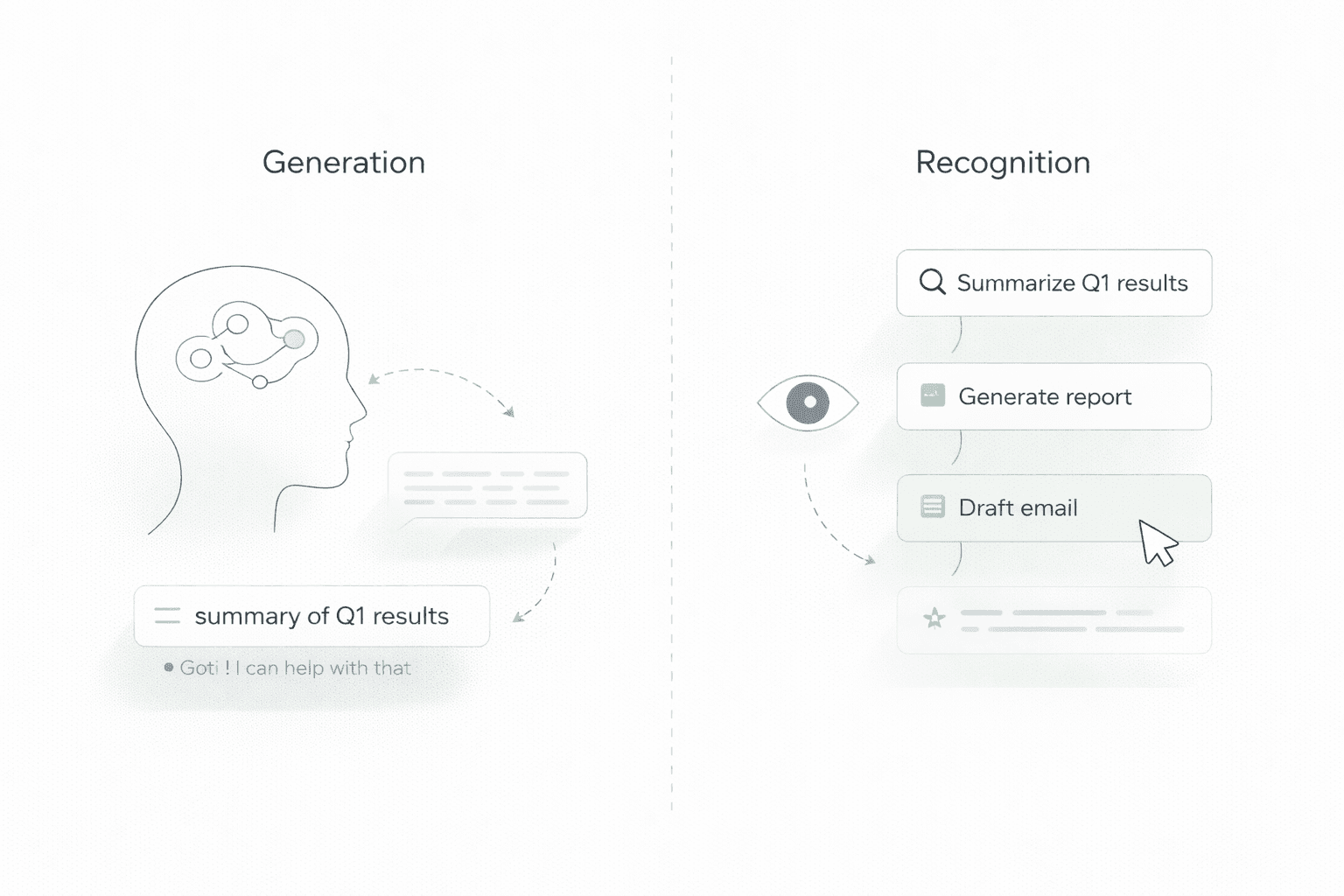

Typing asks me to generate.

Voice asks me to perform.

Point-and-click asks me to recognize.

And recognition is often much cheaper than generation.

Psychology has been telling us some version of this for decades. Working memory is limited, and higher-order cognition draws on constrained attentional resources. Sweller’s work on cognitive load argued that when a task consumes too much processing capacity, less is available for the actual learning or judgment we care about. Dual-process research, for all its simplifications, still gives us a useful distinction here: some judgments are fast, cue-driven, low-effort; others are slower, more effortful, and dependent on working-memory resources. That is not a moral hierarchy. It is a bandwidth map.

That gives us a real scientific basis for saying that open-ended composition can feel heavier than recognition or selection: when a task demands more simultaneous formulation, recall, and monitoring, it consumes more cognitive resources.

A blank chat box is, cognitively speaking, a rude object.

It walks up to your prefrontal cortex and says, “Invent the next move.”

That is sometimes exactly what you want. If I am reasoning through a thorny idea, writing a difficult paragraph, or dumping context into an LLM, open text is glorious. It gives thought room to breathe. It preserves nuance. It lets language do what language does best: stretch.

But that is not the only thing humans do at work. Much of modern knowledge work is not pure creation. It is steering. Reviewing. Narrowing. Triaging. Sequencing. Picking the least-wrong option. Deciding which draft is closest. Telling the machine, “more like this, less like that.”

And in those moments, point-and-click wins because it lets me send an index instead of a monologue.

That is the deepest version of the bandwidth argument. Not raw channel bandwidth. Semantic bandwidth.

Claude Shannon taught us to think about communication as information transmitted through a channel. Hick showed that reaction time rises with the amount of information in the choice set. Fitts showed that aimed movement can be modeled as an information problem too: target size and distance determine how hard it is to get there. The miracle of a good interface is that it lets a tiny physical action carry a surprisingly large semantic payload. A click is only a tiny motor event. But if the system has already externalized the codebook — these three paths, these five actions, this visible state — then that one click can stand in for a paragraph of intent.

That is why point-and-click feels “intuitive.” Not because it is primitive. Because it is compressed.

The system does part of the representational work up front. The human does not have to haul the whole thought into language every single time.
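To put rough numbers on the “index instead of a monologue” idea, here is a minimal Python sketch. It is an illustration under stated assumptions, not a model of any real product. The option texts in `CODEBOOK` are hypothetical, and the options are treated as equally likely. The decision term uses the common Hick-Hyman form log2(n + 1). The pointing term uses the Shannon formulation of Fitts’s index of difficulty, log2(D/W + 1), rather than Fitts’s original 1954 expression. Character count is only a crude proxy for the effort of typing the same intent.

```python
import math


def hick_hyman_bits(n_choices: int) -> float:
    """Information in a choice among n equally likely options, log2(n + 1)."""
    return math.log2(n_choices + 1)


def fitts_index_of_difficulty(distance: float, width: float) -> float:
    """Pointing difficulty in bits, Shannon formulation: log2(D / W + 1)."""
    return math.log2(distance / width + 1)


def index_bits(n_options: int) -> float:
    """Bits needed to name one entry in a list both sides can already see."""
    return math.log2(n_options)


# Hypothetical codebook: the options the interface has already put on screen.
CODEBOOK = [
    "Keep the current draft as it is.",
    "Make the tone warmer and less formal, but keep the structure.",
    "Cut the length by half and lead with the customer result.",
    "Make it more conservative so it survives legal review.",
]


def compare(choice: int) -> dict:
    """Contrast the cost of clicking an option with typing the same intent."""
    n = len(CODEBOOK)
    return {
        "decision_bits": hick_hyman_bits(n),
        # Assumed geometry: a 100 px wide button, 700 px from the cursor.
        "pointing_bits": fitts_index_of_difficulty(distance=700, width=100),
        "index_bits": index_bits(n),
        # Crude proxy: characters the user would have had to compose.
        "chars_if_typed": len(CODEBOOK[choice]),
    }


if __name__ == "__main__":
    for name, value in compare(1).items():
        print(f"{name}: {value}")
```

The point of the comparison is the asymmetry: with four visible options, the click only has to carry two bits of index, while the sentence it stands in for runs to dozens of characters that the user would otherwise have to generate from scratch.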

This is where anthropology and ethnography get unreasonably helpful. Zhang and Norman’s work on distributed cognition made a beautifully simple point: cognition is not trapped inside the skull. People think with external representations. Put structure into the environment and the task itself changes. A board, a canvas, a timeline, a set of cards, a visible hierarchy — these are not decorative wrappers around thought. They are parts of the thinking system.

That is why creative and operational work keeps crawling back toward visible workspaces. Writers want outlines. Marketers want boards. Designers want canvases. Operators want dashboards. Strategists want to see multiple moving parts at once. People do not just want answers; they want a surface area on which judgment can happen.

This is also why the “everything collapses into chat” thesis has always felt a little too developer-brained for the rest of civilization.

Developers already had a cultural tolerance for terse symbolic interfaces. Many actually enjoy them. A good command line feels like a violin bow in practiced hands. But the rest of the economy does not live inside terse symbolic control surfaces all day. A marketer planning a campaign, a writer shaping a narrative, or a founder juggling product, hiring, GTM, and investor notes is not trying to compress their whole mental world into a single-line prompt forever. They need a field of vision, not just a spell box.

And the market has started quietly admitting this.

OpenAI did not stop at a chat window. It introduced Canvas, which opens a side-by-side workspace for longer-form writing and coding, and it folded Operator into ChatGPT’s agent mode so the system can interact with the web by clicking, typing, and scrolling on the user’s behalf. In plain English: even the flagship chat product had to grow a visible workspace and a browser-based action layer.

Anthropic moved in the same direction from a different angle. Artifacts gave Claude a dedicated surface where documents, apps, and outputs can persist outside the chat stream. Then, in January 2026, Claude expanded into interactive apps with tools like Slack, Figma, and Canva embedded into the experience — a tacit admission that analyzing, designing, and managing work often goes better with a dedicated visual interface than with plain text alone. And just days ago, Claude started generating charts and diagrams inline in the conversation, because sometimes the right response is not another paragraph — it is a thing you can point at.

Even the most “agentic” tools keep smuggling selection back into the loop. Cursor’s interface language revolves around accepting suggestions, reviewing changes, and managing agent output with explicit controls; its own changelog makes clear that precision and focus in agent workflows depend on how context menus, review flows, and action affordances are designed. The industry keeps pretending the future is pure autonomy, then quietly rebuilding approval buttons because real users do not want mystical intelligence; they want steerable intelligence.

That last point matters more than the others.

The popular fantasy of AI is that you say a thing, the machine disappears into the fog, and returns with the finished answer like a well-trained valet. Nice story. Bad default.

Real work is messier. You often do not know the final answer when you start. You know what smells wrong. You know which version is warmer, sharper, more conservative, more on-brand, more likely to survive legal, less likely to embarrass you in front of a customer. Human judgment often arrives first as a felt discrimination, not a fully articulated sentence. We are better at saying “that one” than at explaining why that one.

Point-and-click honors that fact about human beings.

Voice, by contrast, has a different superpower. It is phenomenal for unloading context. It is fast. Under good conditions, speech recognition can beat touchscreen typing by a wide margin for transcription-style tasks. But speed in raw words per minute is not the same thing as usefulness in collaborative work. Audio is linear. Text is skimmable. Visual state is inspectable. Clark and Brennan’s work on grounding reminds us that media impose different coordination costs. Spoken language is quick to emit, but written and visible artifacts are often better for review, revision, reference, and mutual alignment. In business contexts, those properties are not side benefits. They are the job.

So no, voice does not kill typing. And typing does not kill clicking. And chat does not kill software.

They stack.

They divide labor.

They each win in different regions of the cognitive map.

Typing remains the tool of precision authorship. Voice remains the tool of rapid capture and embodied ease. Point-and-click remains the tool of fast recognition, low-friction steering, and cognitively economical control over visible state.

That last phrase — visible state — is where the SaaS panic starts to look overcaffeinated.

Software was never valuable only because it stored records or executed commands. Good software encodes domain judgment into form: what should be visible, what should be grouped, what should be editable, what should be constrained, what should be compared, what should be reversible, what deserves a button instead of a sentence. That is not clerical wrapping. That is accumulated interface wisdom.

So when people say “AI kills SaaS,” what they often mean is “raw generation can replace some shallow product surfaces.” Fair enough. The flimsiest wrapper products are in danger. They should be. But the next generation of serious software will not be a dumb skin over a model, and it will not be a naked prompt box either. It will be an opinionated collaboration environment: part model, part workflow, part memory, part control surface.

In other words: more like a cockpit, less like a séance.

And cockpits are made of instruments.

Buttons. Menus. Handles. Panels. Previews. Diffs. Canvases. Cards. Maps. Timelines. Things the eye can sweep and the hand can act on before language has fully finished getting dressed.

That is why point-and-click is here to stay.

Not because humans are conservative.

Because humans are embodied.

Because attention is scarce.

Because recognition is cheap.

Because external representations help us think.

Because visible options compress intent.

Because collaboration with machines gets better when the machine proposes and the human steers.

Because the future of AI is not less interface. It is better interface.

And because, for all the industry’s periodic attempts to vaporize the GUI into pure chat, we keep rediscovering the same ancient truth:

Sometimes the smartest thing a person can say to a computer is not a sentence.

It is: that one.

Sources

Clark, Herbert H., and Susan E. Brennan. “Grounding in Communication.” In Perspectives on Socially Shared Cognition, edited by Lauren B. Resnick, John M. Levine, and Stephanie D. Teasley, 127–149. Washington, DC: American Psychological Association, 1991.

Fitts, Paul M. “The Information Capacity of the Human Motor System in Controlling the Amplitude of Movement.” Journal of Experimental Psychology 47, no. 6 (1954): 381–391.

https://www.interaction-design.org/literature/topics/fitts-law

Hick, W. E. “On the Rate of Gain of Information.” Quarterly Journal of Experimental Psychology 4, no. 1 (1952): 11–26.

https://www.interaction-design.org/literature/topics/hick-s-law

Hutchins, Edwin L., James D. Hollan, and Donald A. Norman. “Direct Manipulation Interfaces.” Human–Computer Interaction 1, no. 4 (1985): 311–338.

https://www.researchgate.net/publication/250890525_Direct_Manipulation_Interfaces

Shannon, Claude E. “A Mathematical Theory of Communication.” Bell System Technical Journal 27, no. 3 (1948): 379–423; no. 4 (1948): 623–656.

https://reach.ieee.org/primary-sources/a-mathematical-theory-of-communication/

https://www.britannica.com/science/information-theory/Classical-information-theory

Shneiderman, Ben. “Direct Manipulation: A Step Beyond Programming Languages.” Computer 16, no. 8 (1983): 57–69.

https://www.cs.umd.edu/~ben/publications.html

Sweller, John, Paul Ayres, and Slava Kalyuga. Cognitive Load Theory. New York: Springer, 2011.

https://link.springer.com/book/10.1007/978-1-4419-8126-4

Sweller, John, Jeroen J. G. van Merriënboer, and Fred Paas. “Cognitive Architecture and Instructional Design: 20 Years Later.” Educational Psychology Review 31 (2019): 261–292.

https://link.springer.com/article/10.1007/s10648-019-09465-5

Zhang, Jiajie, and Donald A. Norman. “Representations in Distributed Cognitive Tasks.” Cognitive Science 18, no. 1 (1994): 87–122.

https://www.sciencedirect.com/science/article/pii/0364021394900213