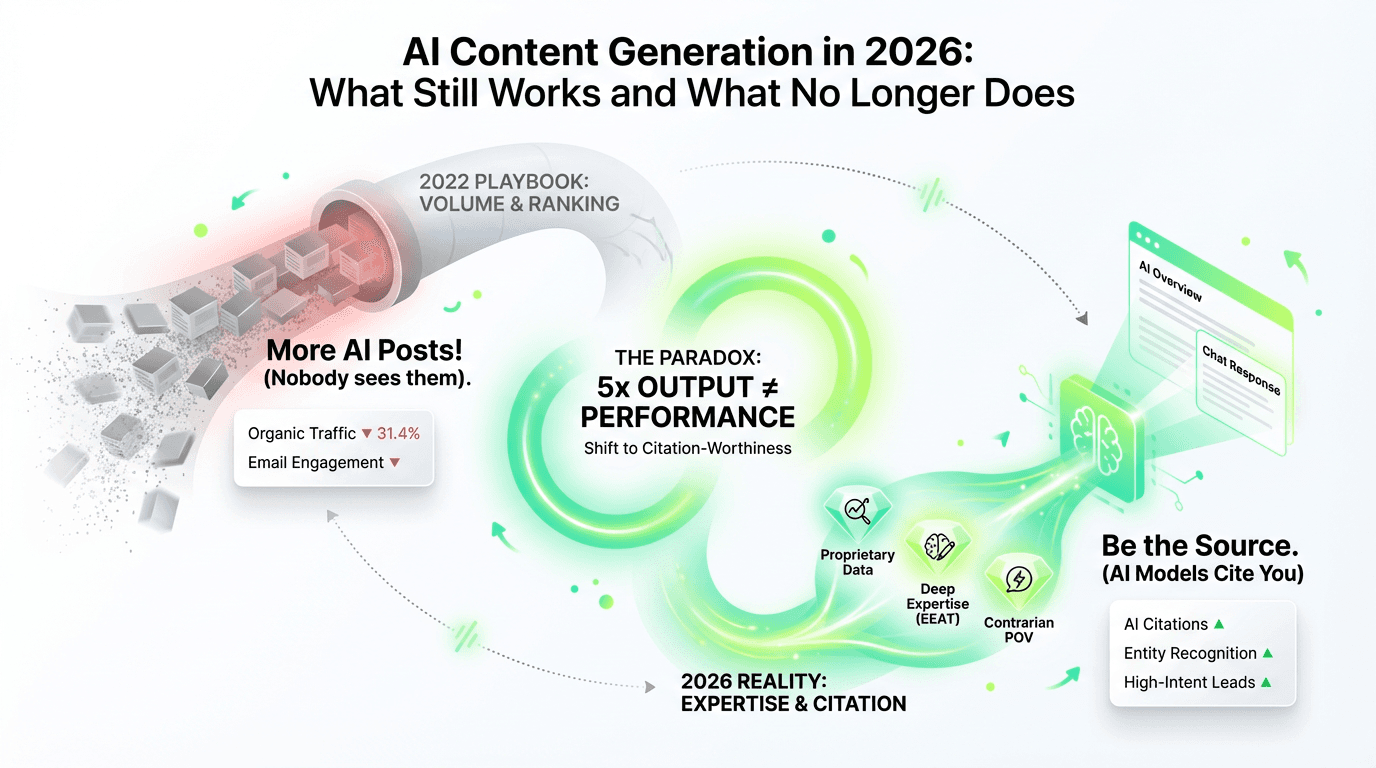

TL;DR: AI content generation has created a paradox: marketing teams are producing, or planning to produce, 3-5x more content but seeing performance decline across their highest-ROI channels (31.4% of marketers name organic search as their biggest decline, and email engagement is falling). The issue isn't artificial intelligence. It's that most organizations are optimizing for volume when the game has shifted to expertise, citation-worthiness, and answer ownership. This article breaks down what's broken, what still works, and how to shift from content production to training AI models to cite you as the definitive source.

The content marketing industry is experiencing a productivity explosion and a performance crisis.

> 75% of content professionals report AI has increased their production volume, yet only 6% say it significantly improved content performance. (Canto/Ascend2, Nov 2025)

The gap between output and impact has never been wider.

The problem runs deeper than measurement. CoSchedule's "After the AI Shift" study reveals that 31.4% of marketers report their biggest performance decline is in organic search and SEO optimization—the channel that historically delivered the strongest ROI. Meanwhile, 10Fold Communications reports that 91% of marketing employees plan to increase content output, with 46% expecting to produce 3-5x more this year.

The organizations struggling aren't the ones producing less. They're the ones who haven't realized the game changed. Content marketing in 2026 is about informing language models, not just filling pages. Google's AI Overviews answer queries without sending clicks. ChatGPT synthesizes responses from sources it trusts. Perplexity cites the definitive answer, not the tenth variation of it. In other words, answer engine optimization (AEO) is now the game.

The constraint has shifted from production capacity to differentiation and signal strength. AI content generation hasn't made the content creation process easier. It's made undifferentiated content pieces invisible. The playbook that worked in 2022 is actively hurting performance in 2026.

This article breaks down what's broken, what still works, and how to shift from content production to answer ownership.

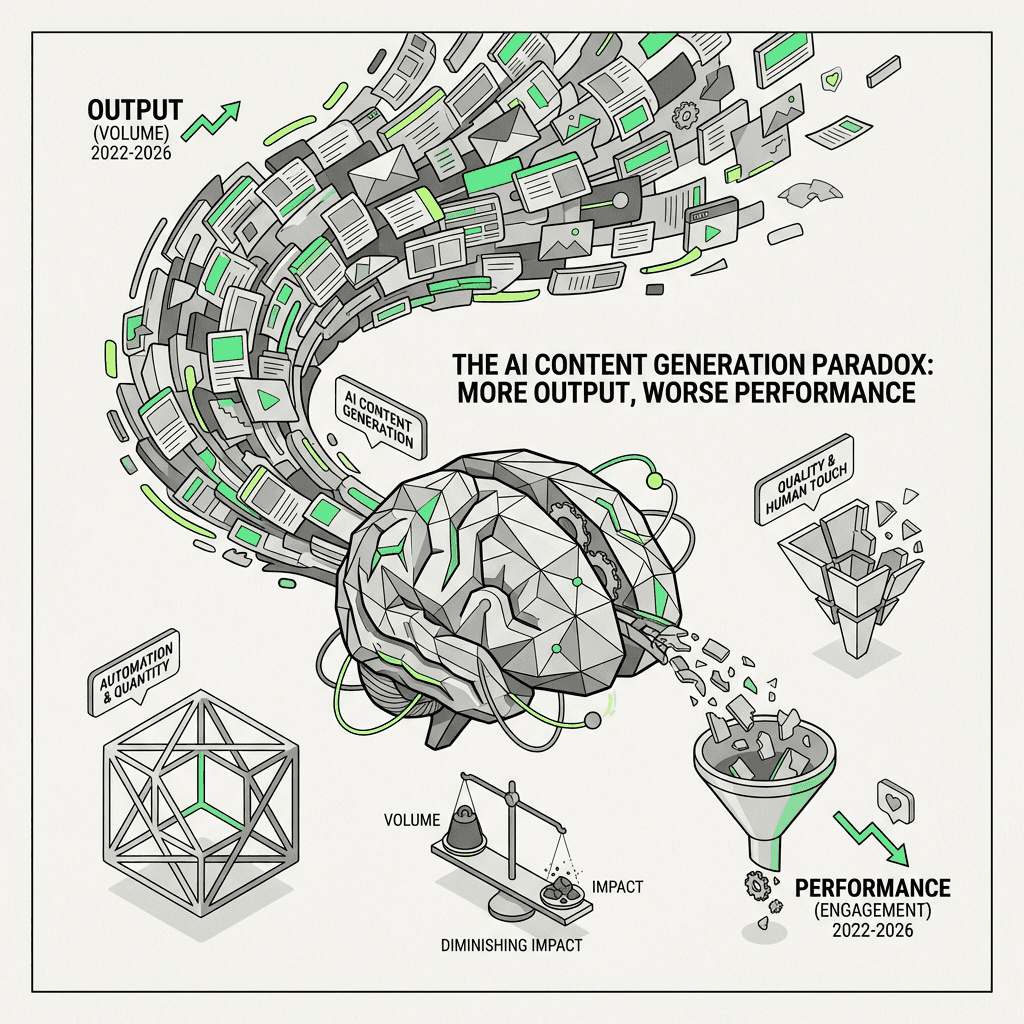

The AI Content Generation Paradox: More Output, Worse Performance

Production is faster and cheaper than ever. eMarketer's April 2026 research shows that 73% of organizations using AI agents have cut content creation costs. Yet productivity gains aren't translating to business outcomes.

The core tension:

67% of global marketing employees use AI for content creation frequently or all the time (10Fold, 2025)

Only 6% report significant performance improvements (MarketingProfs, 2025)

31.4% report their biggest decline in organic search and search engine optimization (CoSchedule, 2025)

This is supply-demand imbalance at scale. When everyone can produce 5x more using AI tools, the value of individual content pieces approaches zero. Google's AI Overviews synthesize answers from multiple sources without sending traffic. Email inboxes are flooded with AI generated content in newsletters. Reddit and LinkedIn feeds are saturated with material that reads like it came from the same template—because it did.

The channels with the highest ROI are the most exposed. SEO and email, which historically delivered measurable pipeline, are now the battlegrounds where AI saturation is most visible. CoSchedule's research shows these aren't just lagging. They're actively declining.

Most organizations are using AI wrong. They treat it as a content production tool, just another content pipeline, when it should be a content intelligence tool. They're optimizing for volume. The game has shifted to expertise and citation-worthiness.

What No Longer Works: The Old Playbook Is Dead

AI content generation didn't kill SEO. It killed the shortcuts. The content strategies that worked when production was expensive and distribution was predictable are now actively counterproductive.

Why do organizations keep running these plays? Because the playbook worked for a decade. Volume-based search engine optimization delivered measurable pipeline from 2015-2022. Generic thought leadership built brand awareness when production was expensive. The shift happened faster than most could adapt. The AI tools that should help are instead accelerating the wrong content strategy.

1. Volume-Based SEO

Publishing 50 blog posts targeting long-tail keywords used to work. Now it's a liability.

Why it fails:

Google AI Overviews synthesize answers without sending clicks (zero-click search occurs when Google AI Overviews answer the query directly on the SERP, eliminating the need for users to click through to your site)

AI generated content is the baseline, not the differentiator

Thin material optimized for keyword density carries no EEAT signals (Experience, Expertise, Authoritativeness, Trustworthiness: Google's framework for evaluating content quality)

Search engines prioritize depth and expertise over keyword matching and reward entity-based SEO

Organizations still running "content hub" strategies—dozens of low-value pages targeting keyword variations—are seeing traffic decline even as they publish more. The material exists, but it's not being surfaced because it has no reason to be cited.

2. Generic "Thought Leadership"

Listicles, trend commentary, and rephrased competitor material are now commodities.

Why it fails:

AI writing tools can generate "10 AI Tools for Marketers" in 30 seconds

No original research or POV means no citation value

Readers can tell it's AI-generated and they're tuning it out

The Canto/Ascend2 research shows that only 4% of content professionals are not using AI at all. Nearly everyone else is drawing on the same handful of AI writing assistants, following similar prompts, and producing indistinguishable output. Generic thought leadership is no longer a differentiator. It's noise.

3. AI-First, Human-Last Workflows

The "prompt ChatGPT → light edit → publish" workflow optimizes for speed at the expense of performance. It is AI writing workflow automation without expertise.

Why it fails:

No domain expertise or hands-on experience

No entity authority: who wrote this, and why should I trust them? (Entity authority is how search engines and AI models recognize you as a credible source on specific topics. It is built through consistent authorship, topical depth, and citations from trusted sources.)

Indistinguishable from millions of other AI-generated pieces

I've seen organizations triple their output using this workflow and watch their organic traffic drop 40% year-over-year. The material exists, but search engines and generative AI have no reason to prioritize it.

4. Batch-and-Blast Email

AI-generated newsletters with no personalization or signal are driving inbox fatigue.

Why it fails:

High frequency, low value

Readers recognize AI generated content and engagement drops

Email, once a high-ROI channel, is now saturated

The pattern is consistent: AI makes it easy to produce more, but "more" without differentiation destroys performance.

What Still Works: The Content Differentiation Stack

High-quality content that performs in 2026 is what AI can't replicate. Here's the framework I use with growth organizations to audit and rebuild their content strategy.

Level 1: Proprietary Data & Original Research

First-party data, customer surveys, and performance benchmarks create citation-worthy assets.

Why it works:

Artificial intelligence can't produce genuine original research

Creates citations and entity recognition for an AI-powered content strategy

Gets referenced by competitors, media, and AI models

Example: Annual industry reports with proprietary data become reference materials. They drive traffic, citations, and brand recognition. They can't be commoditized.

Level 2: Deep Domain Expertise (EEAT Signals)

Author bylines with real credentials, cited by credible sources, demonstrating hands-on experience.

Why it works:

Google's EEAT framework prioritizes authoritative sources

AI models cite sources with established entity recognition

Readers trust material from people who have actually executed

This isn't about adding an author bio. It's about consistent authorship, credible citations, and demonstrated expertise over time. The writing should feel like it was created by someone who has done the work, not summarized it.

Level 3: Contrarian or Nuanced POV

Material that challenges prevailing narratives or adds context AI summaries miss.

Why it works:

Can't be synthesized from existing sources

Creates answer ownership (answer ownership means becoming the definitive source AI models cite when answering specific high-value questions in your domain)

Drives engagement and sharing

This article is an example: "AI hasn't failed—the way organizations are using it has" is a contrarian take backed by evidence. It can't be generated by prompting ChatGPT because it requires judgment, pattern recognition, and a clear stance.

Level 4: Multimedia & Interactive Formats

Video breakdowns, interactive tools, calculators, and visual data storytelling.

Why it works:

Harder to commoditize

Higher engagement and better user signals

AI models pull from diverse content formats, not just text

Example: A B2B SaaS company's "Cost of Poor Data Quality Calculator" that uses proprietary benchmarks from their customer data drove 47 citations in 6 months. Competitors couldn't replicate it because it relied on real industry data they didn't have access to. ROI calculators and benchmarking tools drive engagement and citations that are impossible for artificial intelligence to generate without domain expertise.

Level 5: Distribution Beyond Google

Reddit, Quora, niche communities, LinkedIn thought leadership, podcasts, and video.

Why it works:

Less saturated than search

AI models pull from diverse sources

Builds brand recognition and entity signals

Example: Founder-led LinkedIn posts that share specific metrics (not vanity stats) and contrarian takes get cited by industry publications. A post analyzing why your organic traffic dropped 30% despite publishing 5x more becomes a reference point others link to. One founder's breakdown of their failed AI content experiment (with real numbers) generated 127 comments, 43 shares, and 8 citations from marketing blogs within two weeks.

The organizations winning aren't publishing more. They're publishing what AI can't replicate. They're building recognition across the platforms where their audience actually engages. Reddit AMAs, podcast appearances, and founder-led material create entity signals that search engines and AI models recognize.

The Content Differentiation Stack (Summary):

Level 1: Proprietary data & original research → Citation magnets

Level 2: Deep domain expertise (EEAT signals) → Recognition

Level 3: Contrarian or nuanced POV → Answer ownership

Level 4: Multimedia & interactive formats → Engagement + citations

Level 5: Distribution beyond Google → Entity signals across platforms

The filter: Can AI generate this? If yes, it's not differentiated. If no, you have a moat.

The Shift from SEO to AEO (Answer Engine Optimization)

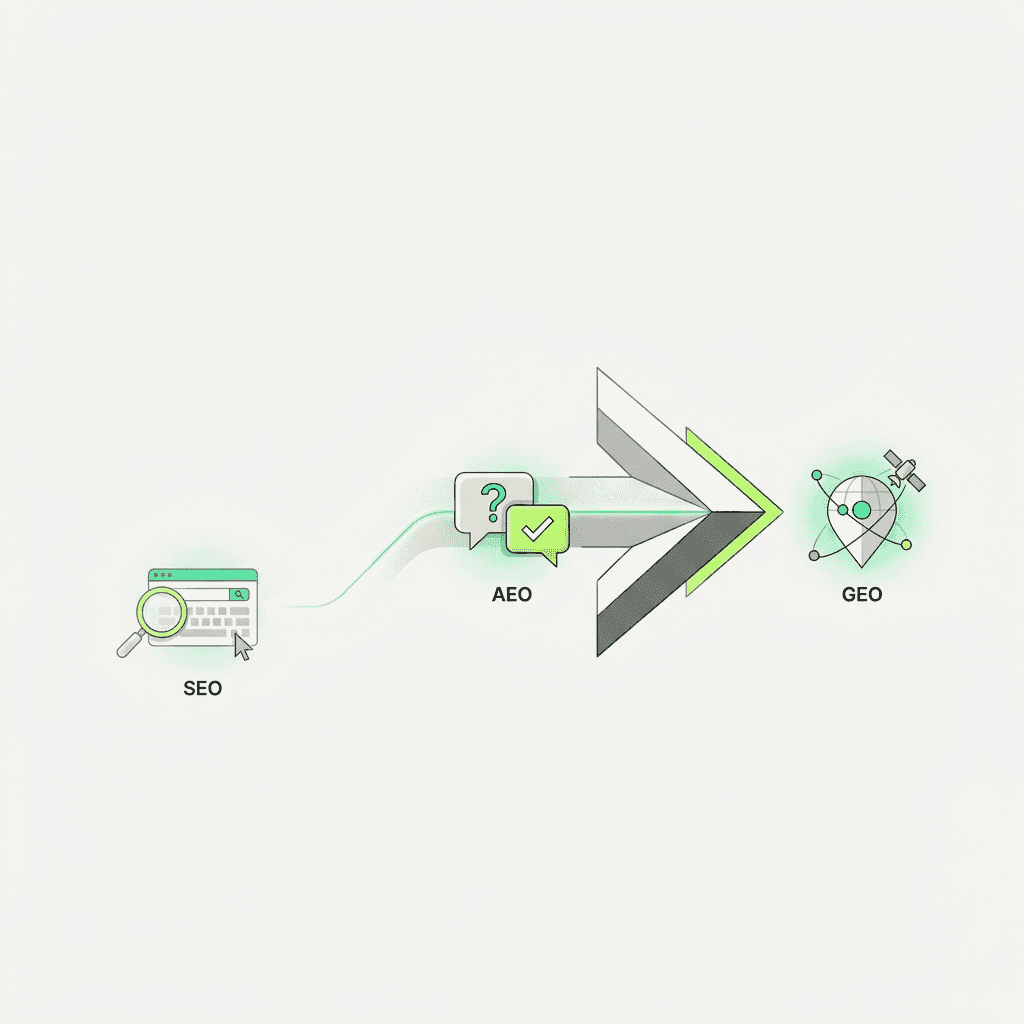

The strategic evolution is clear:

The Evolution of Search Optimization:

SEO (2000-2023): Optimize to rank in search results → Goal: #1 position on Google

AEO (2024-2026): Optimize to be cited by AI models → Goal: Featured in AI Overviews, ChatGPT, Perplexity

GEO (2026+): Optimize for generative engines (Generative Engine Optimization) → Goal: Train large language models to reference you as the trusted source

Ranking #1 used to mean you won. Now it means you might get cited in an AI Overview—if you're lucky. The real win is becoming the source AI models trust.

Answer Engine Optimization (AEO) means optimizing to be cited by AI-powered tools like ChatGPT, Perplexity, and Google AI Overviews, not just ranked in search results. It reflects how search itself is evolving.

What this means tactically:

1. Structure to be citation-friendly

Clear claims, data, sources, and a structured data strategy. AI models extract structured information, not prose. Use explicit definitions for core concepts. Make your key insights extractable in single sentences.

2. Build entity recognition

Consistent authorship, topical depth, citations from credible sources. AI models prioritize recognized entities. This isn't built by publishing more. It's built by being recognized as a credible source over time.

3. Optimize for answer ownership

Identify high-value questions in your domain. Create definitive, citation-worthy answers. Be the source AI models pull from. Not the tenth variation—the original.

4. Track new metrics

AI citations, references from credible sources, entity mentions. Deprioritize keyword rankings and raw traffic (increasingly zero-click).
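One way to make the first tactic concrete is schema.org markup embedded as JSON-LD. The sketch below is illustrative only: the headline, author name, job title, URL, and date are placeholder assumptions, and this article does not establish how much weight any particular AI model gives to such markup.

```python
import json

def article_jsonld(headline, author_name, author_title, url, date_published):
    """Build a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": date_published,
        # A named Person author supports the "consistent authorship" signal.
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
        },
    }
    return json.dumps(data, indent=2)

# Placeholder values for illustration only.
markup = article_jsonld(
    headline="State of Marketing Automation",
    author_name="Jane Example",
    author_title="VP of Marketing",
    url="https://example.com/research/state-of-marketing-automation",
    date_published="2026-01-15",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` element would go in the page's HTML. Keeping the author block identical across pieces is one plausible way to reinforce consistent authorship.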

Google AI Overviews are reducing click-through rates. ChatGPT, Perplexity, and Claude now cite sources. The game isn't ranking. It's being the cited source.

At Metaflow, we've seen organizations shift from "how do we rank for X keyword" to "how do we become the definitive source on X topic." The latter is harder, but it's the only content strategy that compounds over time.

AI Content Generation as Intelligence, Not Production

The productivity gain that 75% of content professionals report is real, but it only pays off if you're using AI correctly. Organizations seeing performance improvements aren't using AI to write. They're using it to analyze, optimize, and identify opportunities.

What AI should do:

Research & competitive analysis: Analyze SERP gaps, identify content ideas

Optimization: A/B test headlines, refine CTAs, improve readability

Performance analysis: Use AI content evaluation to surface what's working and identify patterns across hundreds of pieces

Ideation & structure: Generate outlines, suggest angles, challenge assumptions

What human writers should do:

Strategic positioning: Define POV, contrarian insights, unique framing

Original research: Conduct interviews, analyze proprietary data

Domain expertise: Add nuance, context, real-world experience

Quality control: Ensure EEAT signals, verify claims, refine voice

The Canto/Ascend2 research shows the top AI use cases are performance analysis (41%) and optimization (40%)—not just creation (38%). Organizations treating AI as an analyst, not a writer, are the ones seeing results.

Think of AI as a co-pilot for content intelligence. It can summarize research, identify patterns, and suggest optimizations. But it can't write the definitive piece on a topic it has no experience with. That requires human judgment, domain expertise, and strategic positioning.

Case Study Layer: What High-Performing Organizations Are Doing Differently

Example 1: From Volume to Expertise

Company: Mid-market B2B SaaS (marketing automation)

Old strategy: Publishing 40 blog posts per month targeting long-tail keywords. Traffic was flat. Conversions declining.

New strategy: Cut publishing frequency to 8 pieces per month. Focused on:

Original research (annual "State of Marketing Automation" report with 1,200+ survey responses)

Deep domain expertise (all material written or co-authored by VP of Marketing with 15 years experience)

Proprietary data (benchmarks from their customer base)

Results after 6 months:

Organic traffic down 12% (expected)

Citations up 340%

AI references (tracked via Brand24 and mentions in ChatGPT/Perplexity) up 8x

Demo requests from organic up 67%

Cost per acquisition down 31%

The traffic drop didn't matter. The traffic they got was higher intent. The citations and AI references compounded recognition. Their annual report became the reference source competitors cited.
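The "higher intent" claim can be checked with quick arithmetic on the figures above. This is a rough sketch that assumes both percentages cover the same six-month period and the same organic traffic base, which the case study does not state explicitly.

```python
# Figures from Example 1: organic traffic down 12%, organic demo requests up 67%.
traffic_change = -0.12
demo_request_change = 0.67

# Demo requests per visit scale by (1 + demo change) / (1 + traffic change).
conversion_multiplier = (1 + demo_request_change) / (1 + traffic_change)
conversion_rate_change = conversion_multiplier - 1

print(f"Demo requests per organic visit: {conversion_multiplier:.2f}x")  # 1.90x
print(f"Change in conversion rate: {conversion_rate_change:+.0%}")       # +90%
```

Under those assumptions, each remaining organic visit was roughly 90% more likely to become a demo request.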

Example 2: Distribution Beyond Google

Company: Early-stage developer tools startup

Old strategy: SEO-optimized docs and blog content. Minimal traction.

New strategy: Founder-led distribution:

Weekly LinkedIn posts sharing specific learnings (with metrics, not vanity stats)

Monthly deep-dive posts on Reddit (r/webdev, r/programming) answering common questions

Quarterly podcast appearances on developer-focused shows

Open-source contributions with detailed documentation

Results after 9 months:

Search traffic: minimal (still early)

LinkedIn followers: 4,200 → 23,000

Reddit karma: 890 → 12,400 (high-quality contributions)

Citations: 12 → 94 (mostly from developer blogs citing founder's insights)

Developer signups: 3x increase, 70% attributed to "saw founder on platform"

They built entity recognition before they had search visibility. When they eventually ranked, they ranked as a recognized name, not an unknown brand.

Example 3: Interactive Tools as Content

Company: Enterprise analytics platform

Old strategy: Whitepapers and case studies. Low engagement.

New strategy: Built three interactive tools using AI technology:

ROI calculator using proprietary industry benchmarks

Data maturity assessment (scores your org, gives personalized recommendations)

Free data visualization tool (lightweight version of their paid product)

Results after 12 months:

14,000+ calculator uses

267 citations (from industry blogs, comparison sites, Reddit threads)

Featured in 3 industry roundups as "best free tool"

890 demo requests directly attributed to tools

Tools cited in ChatGPT responses when users ask "how to calculate analytics ROI"

The tools became citation magnets. They provided value AI couldn't replicate. They built citations and entity recognition that compounded over time.

Strategic Implications: How to Compete in the AI Content Era

Here's the playbook I use with growth organizations to rebuild their content strategy:

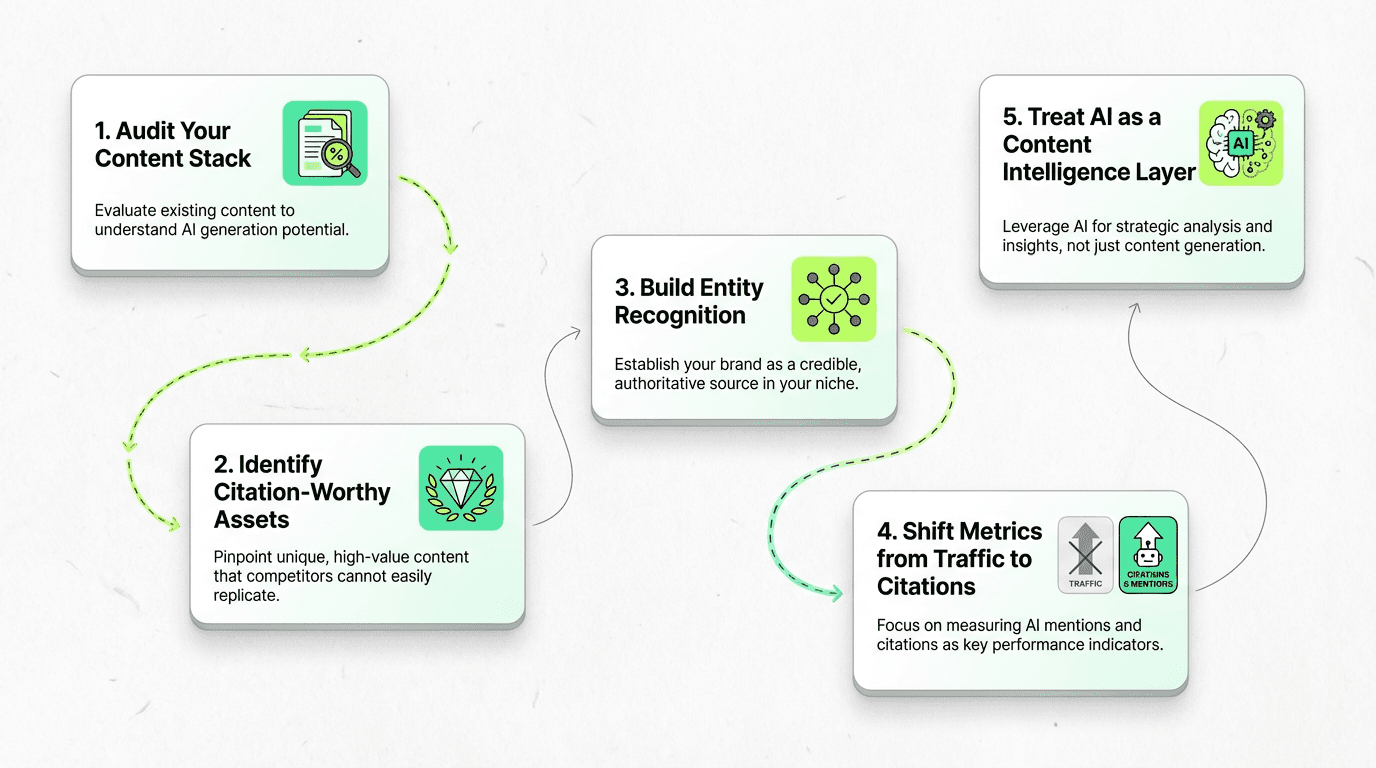

1. Audit Your Content Stack

Question: What percentage could AI generate?

If the answer is >70%, you have a differentiation problem. The material exists, but it has no moat. It won't be cited, linked to, or prioritized by search engines or AI models.

Action steps:

Review your last 50 published pieces

Tag each as: Proprietary Data, Deep Expertise, Contrarian POV, Interactive, or Generic

Calculate the percentage that falls into "Generic"

Prioritize updating or consolidating generic pieces into fewer, more differentiated assets
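The audit steps above can be sketched in a few lines. The tag counts here are made up for illustration, and using the "Generic" share as a proxy for "what percentage could AI generate" is an assumption of this sketch.

```python
from collections import Counter

# Tag names taken from the audit steps above.
TAGS = {"Proprietary Data", "Deep Expertise", "Contrarian POV", "Interactive", "Generic"}

def generic_share(tags):
    """Return the fraction of audited pieces tagged "Generic"."""
    if not tags:
        raise ValueError("No pieces to audit")
    unknown = set(tags) - TAGS
    if unknown:
        raise ValueError(f"Unknown tags: {sorted(unknown)}")
    return Counter(tags)["Generic"] / len(tags)

# Hypothetical audit of the last 50 published pieces.
audit = (
    ["Generic"] * 38
    + ["Deep Expertise"] * 6
    + ["Proprietary Data"] * 3
    + ["Contrarian POV"] * 2
    + ["Interactive"] * 1
)

share = generic_share(audit)
print(f"Generic share: {share:.0%}")  # 76%
if share > 0.70:
    print("Above the 70% threshold: prioritize consolidating generic pieces.")
```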

2. Identify Your Citation-Worthy Assets

Question: What could you publish that competitors can't replicate?

This is your moat. Focus here.

Potential assets:

Original research: Customer surveys, industry benchmarks, proprietary performance data

Domain expertise: Founder insights, practitioner breakdowns, lessons from execution

Interactive tools: Calculators, assessments, free versions of paid products

Contrarian analysis: Challenge prevailing narratives with evidence

The goal isn't more content pieces. It's creating content that AI models and search engines have a reason to cite.

3. Build Entity Recognition Systematically

Question: Are you recognized as a credible source on your core topics?

Entity recognition isn't built overnight. It requires consistent authorship, topical depth, and citations from credible sources over time.

Tactics:

Consistent authorship: Publish under real names with credentials, not "Marketing Team"

Topical depth: Own 2-3 core topics rather than covering everything

Earn citations: Create research others reference, contribute to industry conversations

Cross-platform presence: LinkedIn, podcasts, Reddit, Quora—build recognition beyond your blog

At Metaflow, we track entity recognition through AI citations (mentions in ChatGPT, Perplexity), brand mentions in industry publications, and inbound links from credible sources. These are leading indicators that compound over time.

4. Shift Metrics from Traffic to Citations

Question: Are you measuring what matters in 2026?

Keyword rankings and raw traffic are lagging indicators, so evolve your SEO KPI framework to prioritize leading signals. AI citations, entity mentions, and high-intent conversions are leading indicators.

New metrics to track:

AI citations: How often are you cited in ChatGPT, Perplexity, Claude responses? (Use Brand24, Mention, or manual testing)

Entity mentions: Are you mentioned in industry roundups, competitor comparisons, social media conversations?

Referral quality: Are inbound links from credible sources or spam?

Content engagement: Time on page, scroll depth, return visitors (signals of value)

Pipeline attribution: Which pieces drive demo requests, not just traffic?
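As a minimal sketch of the pipeline attribution metric, the snippet below counts demo requests per content piece. It assumes a first-touch model and a hypothetical `first_touch_piece` field exported from your CRM. Both are illustrative choices, not something this article prescribes.

```python
from collections import Counter

def demo_requests_by_piece(demo_requests):
    """Count demo requests per content piece, most-credited first.

    Requests with no recorded first-touch piece are skipped.
    """
    counts = Counter(
        req["first_touch_piece"]
        for req in demo_requests
        if req.get("first_touch_piece")
    )
    return counts.most_common()

# Hypothetical CRM export; field names are assumptions.
demo_requests = [
    {"lead": "a", "first_touch_piece": "annual-report"},
    {"lead": "b", "first_touch_piece": "roi-calculator"},
    {"lead": "c", "first_touch_piece": "annual-report"},
    {"lead": "d", "first_touch_piece": None},
    {"lead": "e", "first_touch_piece": "annual-report"},
]

for piece, count in demo_requests_by_piece(demo_requests):
    print(f"{piece}: {count} demo requests")
```

Comparing this ranking with a traffic ranking shows which pieces attract visits without producing pipeline.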

The shift from SEO to AEO means shifting from optimizing for rankings to optimizing for citation-worthiness. Traffic without citations is vanity. Citations without traffic still build long-term recognition.

5. Treat AI as a Content Intelligence Layer

Question: Are you using AI to write or to analyze?

The organizations seeing both the productivity gain and a performance improvement are using AI correctly: as an AI-powered content strategy layer, not a writer.

AI as intelligence:

Content gaps: Analyze competitor material, identify underserved questions

Performance patterns: Surface which content types, formats, and topics drive results

Optimization: Test headlines, refine CTAs, improve readability

Research synthesis: Summarize customer interviews, extract insights from data

Humans as creators:

Strategic positioning: Define your contrarian POV

Original research: Conduct surveys, analyze proprietary data

Domain expertise: Add context, nuance, and real-world experience

Quality control: Ensure EEAT signals, verify claims

The teams treating AI content tools as a replacement for human expertise are the ones seeing performance decline. The ones treating AI as a content intelligence layer are the ones seeing results like the 67% increase in demo requests in Example 1.

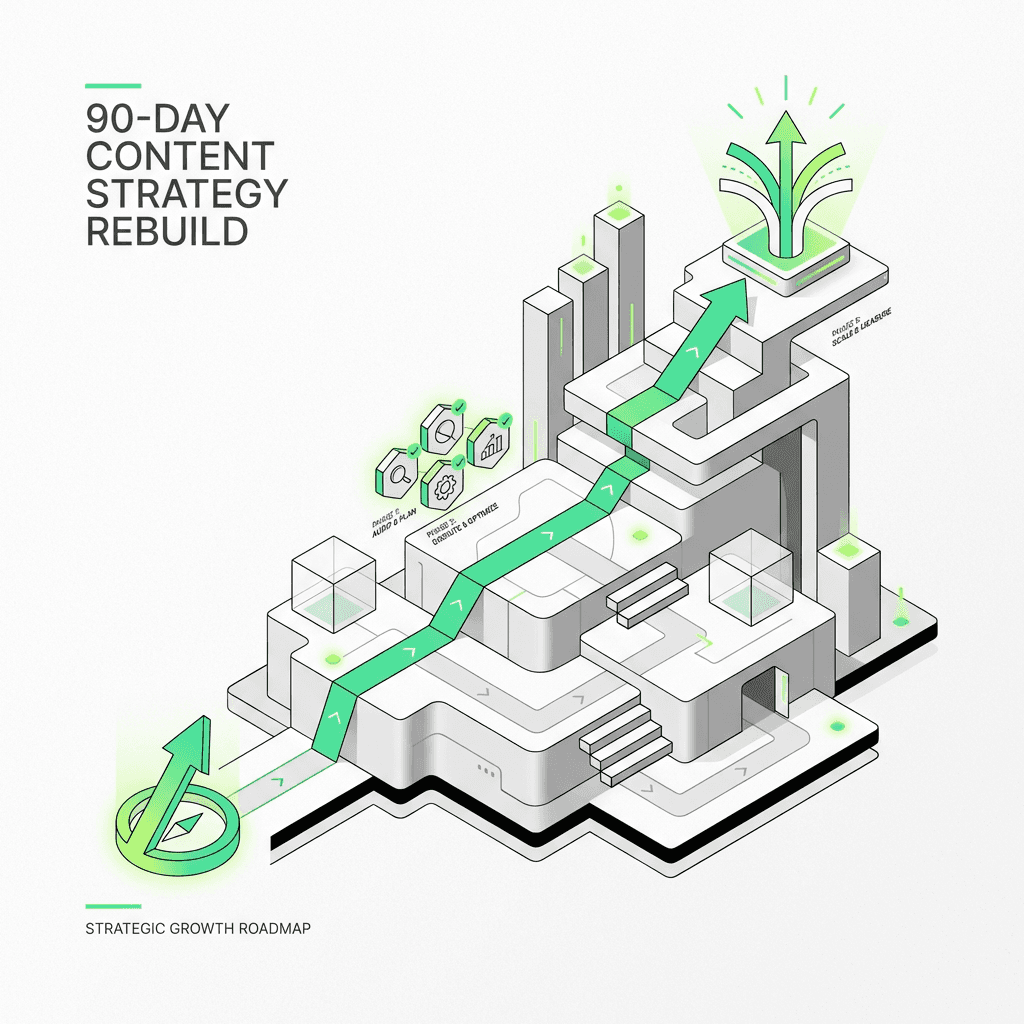

The Content Marketing Strategy Rebuild: A 90-Day Framework

Here's the tactical roadmap I use with B2B SaaS and enterprise marketing teams to shift from volume to citation-worthiness:

Month 1: Audit & Strategy

Week 1-2: Content audit

Tag all published material as: Proprietary, Expert, Contrarian, Interactive, or Generic

Identify your top 10 performers (traffic, conversions, citations)

Analyze what made them work: Was it data, expertise, POV, or format?
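One possible way to pull top performers without inventing a blended score is to rank on each metric separately and note which metrics each piece leads on. The piece titles and numbers below are hypothetical.

```python
from heapq import nlargest

METRICS = ("traffic", "conversions", "citations")

def top_performers(pieces, n=10):
    """Map each piece title to the metrics on which it ranks in the top n."""
    leaders = {}
    for metric in METRICS:
        for piece in nlargest(n, pieces, key=lambda p: p[metric]):
            leaders.setdefault(piece["title"], []).append(metric)
    return leaders

# Hypothetical analytics export.
pieces = [
    {"title": "Annual benchmark report", "traffic": 900, "conversions": 40, "citations": 25},
    {"title": "Keyword variation post", "traffic": 1500, "conversions": 3, "citations": 0},
    {"title": "ROI calculator", "traffic": 700, "conversions": 55, "citations": 12},
]

# n=1 keeps the illustration small; the audit step above uses the top 10.
for title, metrics in top_performers(pieces, n=1).items():
    print(f"{title}: leads on {', '.join(metrics)}")
```

A piece that leads on traffic alone, like the keyword post here, is a candidate for the "Generic" tag. Pieces that lead on conversions or citations point to what is worth repeating.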

Week 3-4: Competitive analysis using [AI search competitor analysis tools](https://metaflow.life/blog/ai-search-competitor-analysis-tools)

What are competitors publishing? (Likely generic, AI-generated material)

What gaps exist? (Questions no one is answering definitively)

What can you create that they can't? (Your proprietary data, domain expertise, contrarian insights)

Output: Content strategy document defining your 2-3 core topics, differentiation moat, and content types to prioritize

Month 2: Pilot & Build

Week 5-6: Create 1-2 citation-worthy assets

Original research: Survey customers, analyze performance data, publish findings (informed by AI content ideation tools, but grounded in your data)

Interactive tool: Build a calculator, assessment, or free tool using AI technology

Contrarian analysis: Challenge a prevailing narrative with evidence

Week 7-8: Distribution beyond search

Founder-led LinkedIn: Share insights with metrics, not vanity stats

Reddit/Quora: Answer high-value questions in your domain

Podcast outreach: Pitch your contrarian POV to relevant shows

Email outreach: Share your research with journalists, influencers, industry publications

Output: 1-2 published citation-worthy assets, distributed across 3+ platforms

Month 3: Measure & Scale

Week 9-10: Track new metrics

AI citations: Manual testing (ask ChatGPT, Perplexity questions in your domain—are you cited?)

Entity mentions: Set up a monitoring tool such as Brand24 or Mention to track brand references

Referral quality: Analyze inbound link sources (credible or spam?)

Pipeline attribution: Which pieces drove demo requests?
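The manual AI citation test can be logged and scored with a small helper. This sketch assumes you paste each assistant's answer in by hand. The brand terms and answers are invented, and plain substring matching is a crude stand-in for real citation detection.

```python
def citation_rate(answers, brand_terms):
    """Return (share of answers mentioning any brand term, questions where cited).

    `answers` maps each question asked to the answer text you saved.
    Matching is case-insensitive substring search.
    """
    if not answers:
        raise ValueError("No answers recorded")
    terms = [term.lower() for term in brand_terms]
    cited = [
        question
        for question, text in answers.items()
        if any(term in text.lower() for term in terms)
    ]
    return len(cited) / len(answers), cited

# Hypothetical answers copied from an AI assistant.
answers = {
    "how to calculate analytics ROI":
        "Several tools exist, including the Acme Analytics ROI calculator (acme.example).",
    "what is data maturity":
        "Data maturity describes how well an organization uses data.",
}

rate, cited = citation_rate(answers, ["Acme Analytics", "acme.example"])
print(f"Cited in {rate:.0%} of answers: {cited}")
```

Re-running the same question set each month gives a trend line, which matters more than any single reading because assistant answers vary between sessions.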

Week 11-12: Scale what works

Double down on content types that drove citations and pipeline

Consolidate or archive generic material that isn't performing

Build a content calendar around citation-worthy assets, not volume

Output: Performance dashboard tracking AI citations, entity mentions, and pipeline attribution. Roadmap for Q2 content production focused on quality over quantity.

The Hard Truth: Most Organizations Won't Make This Shift

Here's why:

1. Volume is easier to measure than citation-worthiness

"We published 40 blog posts this month" is a clear output. "We became the cited source on X topic" is harder to quantify and takes longer to materialize.

2. AI makes bad content strategy easier to execute

AI content tools let you publish 5x more generic material. It feels like progress. It's not.

3. The incentive structure rewards activity, not outcomes

Content marketers are often measured on output (posts published, traffic generated), not business outcomes (pipeline, revenue, entity recognition). The teams that shift to citation-worthiness are the ones whose leadership understands the game has changed.

4. The shift requires domain expertise, not just production capacity

You can't outsource citation-worthiness to an AI writing assistant. It requires human writers with real experience, proprietary data, and a willingness to take a contrarian stance. That's harder and more expensive than prompting ChatGPT.

The organizations that make this shift will dominate their categories. The ones that don't will produce more and more content that fewer and fewer people see.

Final Thoughts: The Content Moat in the Age of AI

AI content generation hasn't democratized content marketing. It's made differentiation the only moat that matters.

The playbook that worked from 2015-2022—publish more, optimize for keywords, distribute via search and email—is dead. The new playbook is harder: Create what AI can't replicate. Build entity recognition. Optimize for citation-worthiness, not rankings.

The productivity gain that 75% of content professionals report is real. But it only pays off if you're using AI content tools to analyze, optimize, and identify opportunities, not to write generic material that gets lost in the noise.

The teams winning in 2026 aren't publishing more blog posts. They're publishing original research, building interactive tools, sharing contrarian insights, and distributing across platforms where their audience engages. They're training AI models to cite them as the definitive source.

The shift from content production to answer ownership is the strategic inflection point. The organizations that make it will own their categories. The ones that don't will keep publishing into the void.

The question isn't whether AI will replace content creators. It's whether content creators will adapt to a world where AI is the primary distribution channel.

The game has changed. Have you?

FAQs

What is AI content generation in 2026?

AI content generation in 2026 is the use of generative AI (and increasingly AI agents) to draft, edit, repurpose, and ship marketing assets across channels. The differentiator is no longer "can you produce content," but whether the content contains expertise, proprietary signals, and citation-worthy claims that search engines and AI assistants will reuse.

Why is AI increasing content output but reducing performance?

Because AI removes production constraints, it creates a supply glut of similar "good enough" pages and newsletters. When content is undifferentiated, AI Overviews answer the query without sending clicks and users tune out repetitive messaging, so volume rises while organic search, email engagement, and conversion efficiency decline.

Does AI-generated content hurt SEO?

AI-generated content doesn't inherently hurt SEO. Google's guidance focuses on whether content is helpful and not made primarily to manipulate rankings (see Google Search's guidance on AI-generated content in the Google Search Central Blog). The risk is publishing "raw" AI pages that are generic, inaccurate, or thin on real Experience/Expertise (E-E-A-T); such pages can underperform even when they're well-formatted.

What "no longer works" for SEO and content marketing in 2026?

Volume-based SEO (lots of long-tail variations), generic thought leadership, and AI-first/human-last publishing workflows are the biggest losers. These approaches produce content that's easy for models to synthesize and hard for algorithms (or readers) to trust, cite, or differentiate.

What still works for AI content generation and SEO in 2026?

Content that AI can't cheaply replicate: proprietary data and original research, practitioner-led expertise, contrarian/nuanced POV backed by evidence, interactive tools (calculators/assessments), and distribution in communities beyond Google. This "differentiation stack" creates reasons to cite you, link to you, and remember you.

What is Answer Engine Optimization (AEO), and how is it different from SEO?

SEO optimizes for rankings and clicks; AEO optimizes for being the direct answer in AI and zero-click surfaces (AI Overviews, assistants, voice, summaries). Practically, AEO prioritizes clarity, explicit definitions, structured claims, and trust signals so your content can be extracted and cited.

How do you make content more "citation-worthy" for AI models?

Make your key claims explicit, support them with verifiable data or named sources, and structure the page so answers are easy to extract (tight paragraphs, definitions, labeled sections, and consistent terminology). Also build entity recognition over time through consistent authorship, topical depth, and mentions/citations from credible publications.

What metrics should teams track if organic traffic is becoming more zero-click?

Track AI citations/mentions, entity mentions, and qualified conversions—not just rankings and sessions. Pair that with referral quality (who's linking), engagement depth (scroll/time), and pipeline attribution to see which assets create "answer ownership" rather than temporary traffic spikes.

How should marketers use AI tools without falling into the volume trap?

Use AI as a content intelligence layer (research, SERP gap analysis, outline generation, performance analysis, and optimization), then inject human expertise, original data, and accountability before publishing. Metaflow fits into this workflow as the system that helps operationalize and standardize the intelligence-to-output pipeline once you've defined what "citation-worthy" means for your brand.